This post has been moved to https://www.striim.com/tutorial/streaming-data-integration-tutorial-adding-a-kafka-stream-to-a-real-time-data-pipeline/

Month: December 2020

Implementing Streaming Cloud ETL with Reliability

If you’re considering adopting a cloud-based data warehousing and analytics solution, the most important consideration is how to populate it with current on-site and cloud data in real time with cloud ETL.

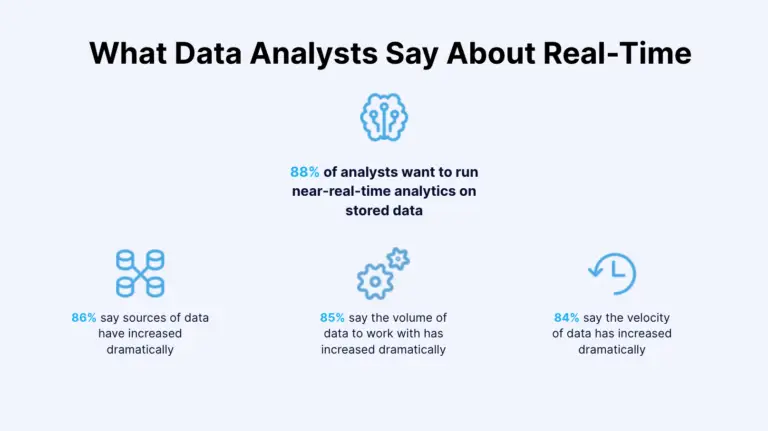

As noted in a recent study by IBM and Forrester, 88% of companies want to run near-real-time analytics on stored data. Streaming cloud ETL enables real-time analytics by loading data from operational systems in private and public clouds to your analytics cloud of choice.

A streaming cloud ETL solution enables you to continuously load data from your in-house or cloud-based mission-critical operational systems to your cloud-based analytical systems in a timely and dependable manner. Reliable and continuous data flow is essential to ensuring that you can trust the data you use to make important operational decisions.

In addition to high performance and scalability to handle your large data volumes, you need to look for data reliability and pipeline high availability. What should you look for in a streaming cloud ETL solution to be confident that it offers the high degree of reliability and availability your mission-critical applications demand?

For data reliability, the following two common issues with data pipelines should be avoided:

- Data loss. When data volumes increase and the pipelines are backed up, some solutions allow some of the data to be discarded. Also, when the CDC solution has limited data type support, it may not be able to capture columns that use an unsupported data type. If an outage or process interruption occurs, an incorrect recovery point can also lead to skipping some data.

- Duplicate data. After recovering from a process interruption, the system may create duplicate data. This issue becomes even more prevalent when processing the data with time windows after the CDC step.
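One common way to keep a replay after recovery from creating duplicates is to make the target idempotent. The sketch below is a minimal illustration under stated assumptions: `IdempotentTarget` and the event IDs are hypothetical names invented for this example, and the code does not represent how any particular product implements delivery.

```python
from dataclasses import dataclass, field


@dataclass
class IdempotentTarget:
    """Hypothetical target that ignores events it has already applied."""

    rows: dict = field(default_factory=dict)
    applied_ids: set = field(default_factory=set)

    def apply(self, event_id: int, key: str, value: str) -> bool:
        """Apply an event once; return False if it was a duplicate."""
        if event_id in self.applied_ids:
            return False
        self.rows[key] = value
        self.applied_ids.add(event_id)
        return True


target = IdempotentTarget()
events = [(1, "order-1", "created"), (2, "order-1", "paid")]

# First delivery: both events are applied.
first_pass = [target.apply(*event) for event in events]

# Simulated restart from a recovery point that is too early:
# the same events arrive again and are skipped.
replayed = [target.apply(*event) for event in events]
```

Because duplicates are rejected by ID, an overly conservative recovery point costs some redundant work but does not corrupt the target.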

How Striim Ensures Reliable and Highly Available Streaming Cloud ETL

Striim ingests, moves, processes, and delivers real-time data across heterogeneous and high-volume data environments. The software platform is built from the ground up to ensure reliability for streaming cloud ETL solutions with the following architectural capabilities.

Fault-tolerant architecture

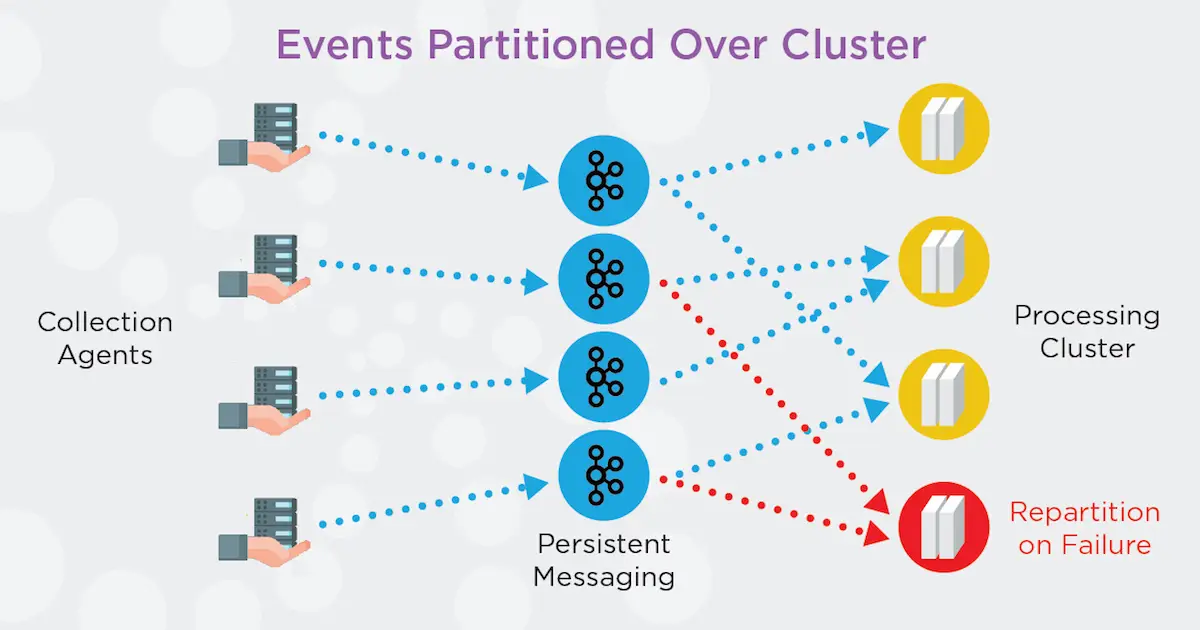

Striim is designed with a built-in clustered environment with a distributed architecture to provide immediate failover. The metadata and clustering service watches for node failure, application failure, and failure of certain other services. If one node goes down, another node within the cluster immediately takes over without the need for users to do this manually or perform complex configurations.

Exactly Once Processing for zero data loss or duplicates

Striim’s advanced checkpointing capabilities ensure that no events are missed or processed twice while taking time window contents into account. It has been tested and certified for cloud solutions to offer real-time streaming to Microsoft Azure, AWS, and/or Google Cloud with event delivery guarantees.

During data ingest, checkpointing keeps track of all events the system processes and how far they got down various data pipelines. If something fails, Striim knows the last known good state and what position it needs to recover from. Advanced checkpointing is designed to eliminate loss in data windows when the system fails.

If you have a defined data window (say 5 minutes) and the system goes down, you cannot typically restart from where you left off because you will have lost the 5 minutes’ worth of data that was in the data window. That means your source and target will no longer be completely synchronized. Striim addresses this issue by coordinating with the data replay feature that many data sources provide to rewind sources to just the right spot if a failure occurs.
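The idea of rewinding a replayable source far enough to rebuild the window can be sketched as follows. This is an illustrative sketch, not Striim's implementation: it assumes a count-based window of three events standing in for a time window, a Python list standing in for a replayable source, and a hypothetical checkpoint of the form `(window_start, next_pos)`.

```python
from collections import deque

WINDOW_SIZE = 3  # count-based window, standing in for a 5-minute time window


def process(source, checkpoint, stop, outputs):
    """Resume from a checkpoint and process events up to position `stop`.

    checkpoint = (window_start, next_pos): the position of the oldest event
    still in the window, and the position of the next unprocessed event.
    Returns the new checkpoint.
    """
    window_start, next_pos = checkpoint
    window = deque(maxlen=WINDOW_SIZE)

    # Rewind: refill the window from the source without emitting anything,
    # so already-delivered results are not produced twice.
    for pos in range(window_start, next_pos):
        window.append(source[pos])

    # Continue: each new event emits exactly one windowed sum.
    for pos in range(next_pos, stop):
        window.append(source[pos])
        outputs.append(sum(window))

    return (stop - len(window), stop)


source = [5, 1, 4, 2, 8, 3]

# Uninterrupted run over all six events.
uninterrupted = []
process(source, (0, 0), 6, uninterrupted)

# Run that "fails" after four events and then recovers from its checkpoint.
recovered = []
saved = process(source, (0, 0), 4, recovered)
process(source, saved, 6, recovered)
```

In this sketch the checkpoint stores the window start as well as the read position, so recovery reproduces the same results as a run that never failed, with no missing and no repeated outputs.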

In cases where data sources don’t support data replay (for example, data coming from sensors), Striim’s persistent messaging stores and checkpoints data as it is ingested. Persistent messaging allows previously non-replayable sources to be replayed from a specific point. It also allows multiple flows from the same data source to maintain their own checkpoints. To offer exactly once processing, Striim checks to make sure input data has actually been written. As a result, the platform can checkpoint, confident in the knowledge that the data made it to the persistent queue.
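A minimal sketch of this pattern follows, assuming an append-only log with one checkpoint per flow. `PersistentLog` and `Flow` are hypothetical names for illustration, and the in-memory list is only a stand-in for durable storage.

```python
class PersistentLog:
    """Append-only log; the list is an in-memory stand-in for durable storage."""

    def __init__(self):
        self._entries = []

    def append(self, event):
        """Store an event and return its offset once it is written."""
        self._entries.append(event)
        return len(self._entries) - 1

    def read_from(self, offset):
        """Return (offset, event) pairs starting at `offset`."""
        return list(enumerate(self._entries))[offset:]


class Flow:
    """A consumer that tracks its own checkpoint into the shared log."""

    def __init__(self, log):
        self.log = log
        self.checkpoint = 0
        self.seen = []

    def poll(self):
        for offset, event in self.log.read_from(self.checkpoint):
            self.seen.append(event)
            self.checkpoint = offset + 1  # advance only after handling the event


log = PersistentLog()
alerts = Flow(log)
archive = Flow(log)

log.append(20.5)  # sensor readings that cannot be re-requested from the device
log.append(21.0)
alerts.poll()     # alerts has consumed two events
log.append(35.2)
archive.poll()    # archive starts from its own checkpoint and reads all three
alerts.poll()     # alerts resumes where it left off
```

Each flow reads the same stored events but advances independently, which is what lets a non-replayable source be replayed from a chosen point.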

End-to-end data flow management for simplified solution architecture and recovery

Striim’s streaming cloud ETL solution also delivers streamlined, end-to-end data integration between source and target systems that enhances reliability. The solution ingests data from the source in real time, performs all transformations such as masking, encryption, aggregation, and enrichment in memory as the data goes through the stream, and then delivers the data to the target in a single network operation. All of these operations occur in one step without using a disk and deliver the streaming data to the target with sub-second latency. Because Striim does not require additional products, this simplified solution architecture enables a seamless recovery process and minimizes the risk of data loss or inaccurate processing.

In contrast, a data replication service without built-in stream processing requires data transformation to be performed in the target (or source), with an additional product and network hop. This minimum two-hop process introduces unnecessary data latency. It also complicates the solution architecture, exposing the customer to considerable recovery-related risks and requiring a great deal of effort for accurate data reconciliation after an outage.

For use cases where transactional integrity matters, such as migrating to a cloud database or continuously loading transactional data for a cloud-based business system, Striim also maintains ACID properties (atomicity, consistency, isolation, and durability) of database operations to preserve the transactional context.

The best way to choose a reliable streaming cloud ETL solution is to see it in action. Click here to request a customized demo for your specific environment.

Top Data Integration Use Cases For The Year Ahead

Over 80% of companies are set to use multiple cloud vendors for their data and analytics needs by 2025. Real-time data integration platforms are vital for making these plans a reality. They connect different cloud and on-premise sources and help move data around in real-time.

But the potential of this technology extends beyond cloud integration. Near instantaneous data transfer helps companies detect anomalies, make predictions, drive sales, apply machine learning (ML) models, and more. It provides a much-needed competitive edge.

As we wrap up what was an eventful year (to say the least), let’s take a look at some of the most popular data integration use cases in 2020 while looking ahead to new trends in cloud data platforms.

Moving on-premise data to the cloud

Moving data from legacy databases to the cloud in real-time reduces downtime, prevents business interruptions, and keeps databases synced.

A software process called Change Data Capture (CDC) is vital for reducing downtime. CDC allows real-time data integration (DI) to track and capture changes in the legacy system and then apply them to the cloud once the migration ends. CDC works later on as well, continuously syncing two databases. This technology allows companies to move data to the cloud without locking the legacy database.
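The capture-then-apply flow can be sketched in a few lines. This is a simplified illustration, assuming changes arrive as dictionaries with hypothetical `op`, `key`, and `row` fields; real CDC tools read changes from database logs and use their own event formats.

```python
def apply_change(replica, change):
    """Apply one captured change event to the replica, in order."""
    op, key = change["op"], change["key"]
    if op in ("insert", "update"):
        replica[key] = change["row"]
    elif op == "delete":
        replica.pop(key, None)
    else:
        raise ValueError(f"unknown operation: {op}")


# Snapshot of the legacy table, bulk-loaded into the cloud database.
legacy_snapshot = {
    1: {"name": "Ada", "city": "London"},
    2: {"name": "Lin", "city": "Oslo"},
}
cloud_replica = dict(legacy_snapshot)

# Changes made on the legacy system while the bulk load was running.
captured_changes = [
    {"op": "update", "key": 2, "row": {"name": "Lin", "city": "Bergen"}},
    {"op": "insert", "key": 3, "row": {"name": "Sam", "city": "Lagos"}},
    {"op": "delete", "key": 1},
]

for change in captured_changes:
    apply_change(cloud_replica, change)
```

Applying the captured changes in their original order brings the replica up to date without the legacy database having been locked during the load.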

Data can also be moved bidirectionally. Some users can be kept in the cloud and some in the legacy database. Data can then be gradually migrated to reduce risk, in case you’re dealing with mission-critical systems and can’t afford any business interruptions.

Transferring data to the cloud in real-time enables companies to offer innovative services. Courier businesses, for instance, may use real-time DI to move data from on-premise Oracle databases to Google BigQuery and run real-time analytics and reporting. They’re then able to provide customers with live shipment tracking.

Enabling real-time data warehousing in the cloud

Many companies are also turning to cloud data warehouses. This storage option is growing in popularity as it allows users to reduce the cost of ownership, improve speed, secure data, improve integration, and leverage the cloud.

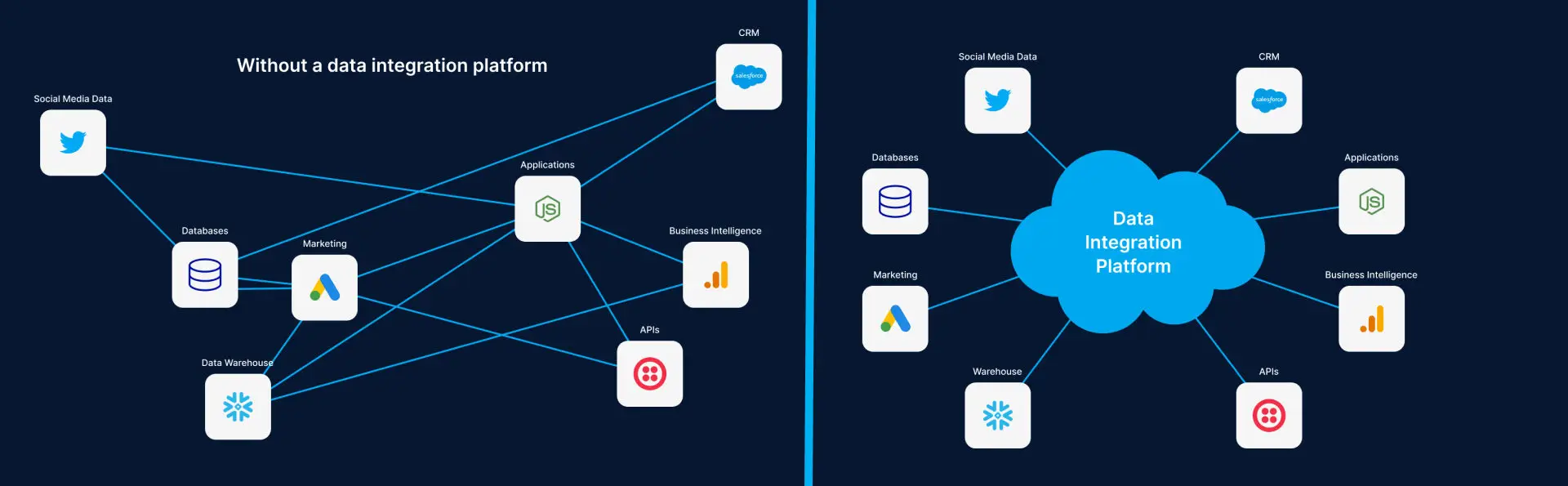

But real-time analysis of data in cloud warehouses requires real-time integration platforms. They collect data from various on-prem and cloud-based sources – such as transactional databases, logs, IoT sensors – and move it to cloud warehouses.

These real-time integration platforms rely on CDC to ingest data from multiple sources without causing any modification or disruption to data production systems.

Data is then delivered to cloud warehouses with sub-second latency and in a consumable form. It’s processed in-flight using techniques such as denormalization, filtering, enrichment, and masking. In-flight data processing has multiple benefits including minimized ETL workload, reduced architecture complexity, and improved compliance with privacy regulations.
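In-flight processing of this kind can be pictured as a chain of small steps that each event passes through before delivery. The sketch below uses Python generators, with hypothetical field names (`customer_id`, `card_number`, `is_test`) and a hypothetical lookup table. It illustrates filtering, enrichment, and masking in general rather than any specific platform's API.

```python
CUSTOMER_REGIONS = {"c1": "EMEA", "c2": "APAC"}  # hypothetical lookup table


def drop_test_events(events):
    """Filtering: discard events flagged as test traffic."""
    for event in events:
        if not event.get("is_test", False):
            yield event


def enrich_with_region(events, regions):
    """Enrichment: add a region looked up by customer ID."""
    for event in events:
        yield {**event, "region": regions.get(event["customer_id"], "UNKNOWN")}


def mask_card(events):
    """Masking: keep only the last four digits of the card number."""
    for event in events:
        card = event["card_number"]
        yield {**event, "card_number": "*" * (len(card) - 4) + card[-4:]}


raw = [
    {"customer_id": "c1", "card_number": "4111111111111111", "amount": 25.0},
    {"customer_id": "c2", "card_number": "5500000000000004", "amount": 10.0,
     "is_test": True},
    {"customer_id": "c3", "card_number": "340000000000009", "amount": 99.0},
]

# Events flow through all three steps one at a time, without intermediate storage.
delivered = list(mask_card(enrich_with_region(drop_test_events(raw), CUSTOMER_REGIONS)))
```

Because the card number is masked before the event leaves the pipeline, the target in this sketch never receives the full value.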

DI platforms also respect the ordering and transactionality of changes applied to cloud warehouses. And streaming integration also makes it possible to synchronize cloud data warehouses with on-premises relational databases. As a result, data can be moved to the cloud in a phased migration without disrupting the legacy environment.

Other businesses may prefer data lakes. This storage option doesn’t necessarily require data to be formatted or transformed because it can be stored in its raw state.

Adopting a multi-cloud strategy with cloud integration

Furthermore, real-time data integration allows you to be agile. You get to connect data, infrastructure, and applications in multiple cloud environments.

You can then avoid vendor lock-in and combine the cloud solutions that fit your needs.

For instance, you can have your applications write data to a data warehouse like Amazon Redshift. Meanwhile, the same records can also be inserted into another cloud vendor’s low-cost storage solution, such as Google Cloud Storage (GCS). If you later want to migrate from Redshift to BigQuery, your data will be ready in GCS for a low-friction migration.
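The dual-write pattern described here amounts to fanning each record out to more than one destination. The following is a minimal sketch in which `ListSink` is a hypothetical in-memory stand-in for a warehouse or object store. It makes no calls to Redshift, GCS, or BigQuery.

```python
class ListSink:
    """Hypothetical in-memory sink standing in for a warehouse or object store."""

    def __init__(self, name):
        self.name = name
        self.records = []

    def write(self, record):
        self.records.append(record)


def fan_out(records, sinks):
    """Deliver every record to every sink."""
    for record in records:
        for sink in sinks:
            sink.write(dict(record))  # copy so sinks never share mutable state


warehouse = ListSink("warehouse")
object_store = ListSink("object_store")

fan_out(
    [{"order_id": 1, "total": 40.0}, {"order_id": 2, "total": 15.5}],
    [warehouse, object_store],
)
```

A production pipeline would also need to handle the case where one sink accepts a record and the other fails, which this sketch leaves out.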

Powering real-time applications and operations

Data integration enables companies to run real-time applications (RTA), whether these apps use on-premise or cloud databases. Real-time integration solutions move data with sub-second latency, and users perceive the functioning of RTAs as immediate and current.

Data integration can also support RTAs by transforming and cleaning data or running analytics. And applications from a wide range of fields — videoconferencing, online games, VoIP, instant messaging, ecommerce — can benefit from real-time integration.

Macy’s, for instance, makes great use of data integration platforms to scale its operations in the cloud. The giant US retailer is running real-time data pipelines in hybrid cloud environments for both operational and analytics needs. Its cloud and business apps need real-time visibility into orders and inventory. Otherwise, the company might have to deal with out-of-stock items or inventory surpluses. It’s vital to avoid this scenario, especially during peak shopping periods, such as Black Friday and Cyber Monday, when Macy’s processes as many as 7,500 transactions per second.

Furthermore, real-time data pipelines are important for cloud-first apps, too. Designed specifically for cloud environments, these real-time apps can outperform on-premise competitors but require continuous data processing.

Also, real-time DI products can enhance operational reporting. Companies would receive up-to-date data from different sources and could detect immediate operational needs. Whether it’s about monitoring financial transactions, production chains, or store inventories, operational reporting adds value only if it’s delivered fast.

Detecting anomalies and making predictions

A real-time data pipeline allows companies to collect data and run various types of analytics, including anomaly detection and prediction. These two types of analytics are critical for making timely decisions. And they can be of help in many different ways.

Real-time data integration platforms, for instance, help companies manipulate IoT data produced by a range of sensor sources. Once cleaned and collected in a unified environment, this IoT data can be analyzed. The system may detect anomalies, such as high temperatures or rising pressure, and instruct a manager to act and prevent damage. Or, the data may reveal failing industrial robots that need replacement. Integration technologies also allow you to combine IoT sensor data with other data sources for better insights. Legacy technologies are rarely up to this challenge.
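As one simple illustration of anomaly detection on a sensor stream, the sketch below flags readings that deviate sharply from a rolling window of recent values. The window size, the threshold, and the temperature readings are all assumptions chosen for the example; production systems typically use more robust methods.

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(readings, window_size=5, threshold=3.0):
    """Return (index, value) pairs that deviate strongly from recent readings."""
    window = deque(maxlen=window_size)
    anomalies = []
    for index, value in enumerate(readings):
        if len(window) == window_size:
            mu = mean(window)
            sigma = pstdev(window)
            # Flag values more than `threshold` standard deviations from the mean.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append((index, value))
        window.append(value)
    return anomalies


# Hypothetical temperature readings with one sudden spike.
temperatures = [70.0, 71.0, 69.0, 70.0, 71.0, 70.0, 95.0, 70.0]
spikes = detect_anomalies(temperatures)
```

Each reading is evaluated as it arrives, against only the values seen before it, so the check can run on a live stream rather than on a stored batch.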

Besides factories and robots, sensors also monitor planes, cars, and trucks. Analyzing vehicle data can reveal if an engine is likely to fail soon if certain parts aren’t replaced. But this benefit can only be realized if various types of data are collected and analyzed in real-time. Otherwise, companies wouldn’t be able to fix engines on time. Data integration is thus vital for predictive maintenance.

Anomaly detection capabilities are especially useful in the cybersecurity field. Real-time collection and analysis of logs, IP addresses, sessions, and other pieces of information enable teams to detect and prevent suspicious transactions or credit card fraud.

Real-time analytics can also make the difference between scoring a sale or losing a customer. Up-to-the-minute suggestions based on customer emotions can push online visitors to buy products instead of going away. Companies can bring together data from multiple sources to help the system make the most relevant prediction.

Supporting machine learning solutions

Real-time DI platforms can help teams run ML models more effectively.

DI programs can save you the time you’d spend on cleaning, enriching, and labeling data. They deliver prepared data that can be pushed into algorithms.

Also, real-time architecture ensures ML models are fed with up-to-date data from various sources instead of obsolete data, as was historically the case. These real-time data streams can be used to train ML models and prepare for their deployment. Companies can develop an algorithm to spot a specific type of malicious behavior by correlating data from multiple sources.

Or, you could pass the streams through already trained algorithms and get real-time results. ML programs would be processing cleansed data from real-time pipelines and raising an alarm or executing an action once a predefined event is detected. These insights can then guide further decision-making.

Syncing records to multiple systems

Near-instantaneous data integration enables companies to sync records across multiple systems and ensure all departments always have access to up-to-date information. There are many situations in which this ability can make a difference.

Take, for example, two beverage producers that recently merged. They’ll likely have many retail customers and chemical suppliers in common but keep information about them in different databases. Some details, such as phone numbers or product prices, may not even agree. But now that those two producers are a single company, they need to find a way to merge or sync data. Integration platforms can take data from multiple repositories and update records in both companies.
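One simple reconciliation policy for such conflicting records is "last write wins": for each shared key, keep whichever record was updated most recently. The sketch below assumes each record carries a hypothetical `updated` field holding an ISO date string. Real merges often need richer rules, such as field-level precedence or manual review.

```python
def sync_records(system_a, system_b):
    """Merge two record sets, keeping the most recently updated version of each."""
    merged = {}
    for key in system_a.keys() | system_b.keys():
        candidates = [
            record
            for record in (system_a.get(key), system_b.get(key))
            if record is not None
        ]
        # ISO dates (YYYY-MM-DD) compare correctly as plain strings.
        merged[key] = max(candidates, key=lambda record: record["updated"])
    return merged


producer_a = {
    "acme-retail": {"phone": "555-0100", "updated": "2020-03-01"},
    "chem-supply": {"phone": "555-0200", "updated": "2020-09-15"},
}
producer_b = {
    "acme-retail": {"phone": "555-0199", "updated": "2020-11-20"},
    "fresh-mart": {"phone": "555-0300", "updated": "2020-06-30"},
}

unified = sync_records(producer_a, producer_b)
```

Writing `unified` back to both systems would leave them holding the same customer and supplier details.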

Or, different departments in the same company might use siloed systems. The finance team’s system may not be linked with the receiving team’s system, which means that data updates won’t be visible to everyone. Real-time DI can link these systems and ensure data is synced.

Creating a sales and marketing performance dashboard

Companies can also use integration technologies to improve sales and marketing performance. This is done by using real-time DI products to integrate data points from internal and external sources into a unified environment. As Kelsey Fecho, growth lead at Avo, says, “If you have data in multiple platforms – point and click behavioral analytics tools, marketing tools, raw databases – the data integration tools will help you unify your data structures and control what data goes where from a user-friendly UI.”

Companies can then track sales, open rates, conversion metrics, and various other KPIs in a single dashboard. Data is visualized using charts and graphs, making it easier to spot trends in real-time and have a better sense of ROI.

And the rise of online sales and advertising makes this capability ever more relevant. Businesses now have vast amounts of data on sales and marketing activities and look for ways to extract more value.

Creating a 360-degree view of a customer

Real-time data integration platforms enable businesses to build other types of dashboards, such as a 360-degree view of a customer.

In this case, customer data is pulled from multiple systems, such as CRM, ERP, or support, into a single environment. Details on past calls, emails, purchases, chat sessions, and various other activities are added as well. And integration tech can further enrich the dashboard with external data taken from social media or data brokers.

Companies can apply predictive analytics to this wealth of data. The system could then make a personalized product recommendation or provide tips to agents dealing with demanding customers. And agents will also get to save some time. They no longer have to put customers on hold to collect information from other departments when solving an inquiry. All details are readily available. Customers will be more satisfied, too, as their problems are solved promptly.

Data integration platforms help you scale faster

The world is becoming increasingly data-driven. Realizing value from this trend starts with bringing data from disparate sources together and making it work for you. In that regard, real-time DI platforms are a game-changer. From moving data to running analytics to optimizing sales, they enable you to step up your data game and take on the competition. And to achieve these benefits, it’s vital to choose cutting-edge integration solutions that can rise to this challenge.

Cloud Migration Checklist For Google Cloud Platform

Download the cloud database migration checklist for Google Cloud Platform now to ease your cloud adoption.

Cloud Migration Checklist For Microsoft Azure

Download the cloud database migration checklist for Microsoft Azure now to ease your cloud adoption.