Data leaders today are inundated with decisions to make. Decisions around how to build a thriving data team, how to approach data strategy, and of course, which technologies and solutions to choose. With so many options available, the choice can be daunting.

That’s why this guide exists. In this article, we explore the leading platforms that help organizations capture, process, and analyze data in real time. You’ll learn how these solutions address critical needs like real-time analytics, cloud migration, event-driven architectures, and operational intelligence.

We’ll explore the following platforms:

- Striim

- Apache Kafka

- Oracle GoldenGate

- Cloudera

- Confluent

- Estuary Flow

- Azure Stream Analytics

- Redpanda

Before we dive into each tool, let’s cover a few basic concepts.

What Are Data Streaming Platforms?

Data streaming platforms are software systems that ingest, process, and analyze continuous data flows in real time or near real time, typically within milliseconds. These platforms are foundational to event-driven architectures, driving high-throughput data pipelines across diverse data sources, from IoT devices to microservices and apps.

Unlike batch processing systems, streaming platforms provide fault-tolerant, scalable infrastructure for stream processing, enabling real-time analytics, machine learning workflows, and instant data integration across cloud-native environments such as AWS and Google Cloud, while supporting various data formats via connectors and APIs.

These are powerful tools that can deliver impact for modern enterprises in more ways than one.

Benefits of Data Streaming Platforms

At their core, data streaming platforms transform data latency from a constraint into a competitive advantage.

- Accelerated Decision-Making: Streaming platforms enable real-time data processing and analytics that detect opportunities and trends as they emerge, reducing response time from hours to milliseconds while optimizing customer experiences through instant personalization.

- Operational Excellence through Automation: Streaming tools streamline data infrastructure by eliminating complex batch processing workflows, reducing downtime through high availability architectures, and enabling automated data quality monitoring across large volumes from various sources.

- Innovation Catalyst: They help to form the ecosystem for building streaming applications from real-time dashboards and event-streaming use cases in healthcare to serverless, low-latency solutions that unlock new revenue streams.

- Cost-Effective Scalability: Streaming platforms deliver high-performance data processing through managed services and open-source options that scale with data volumes, eliminating expensive data warehouses while maintaining fault tolerance and optimization capabilities.

How to Choose a Data Streaming Platform

When evaluating data streaming platforms, it’s worth looking beyond basic connectivity to consider tools that ensure continuous operations, enable immediate business value, and scale with enterprise demands.

The following criteria can help pick out solutions that deliver true real-time intelligence:

- Real-Time Processing vs. Batch Processing Delays: Assess whether the platforms provide genuine real-time data streaming with in-memory processing, or rely on batch processing intervals, introducing latency. True real-time analytics enable immediate fraud detection, customer experiences, and operational decisions within milliseconds.

- High Availability and Fault-Tolerant Architecture: Evaluate solutions offering multi-node, active-active clustering with automatic failover capabilities. This ensures zero downtime during node failures or cloud outages, preventing data corruption and maintaining business continuity at scale.

- Depth of In-Stream Transformation Capabilities: Look for platforms supporting comprehensive data processing, including filtering, aggregations, enrichment, and streaming SQL without requiring third-party tools. Advanced transformation within data pipelines eliminates post-processing complexity and reduces infrastructure costs.

- Enterprise Connectivity and Modern Data Sources: Consider support for diverse data formats beyond traditional databases—including IoT sensors, APIs, event streaming sources like Apache Kafka, and cloud-native services. Seamless integration across on-premises and multi-cloud environments ensures a unified data infrastructure.

- Scalability Without Complexity: Examine whether platforms offer low-code/no-code options alongside horizontal scaling. This combination enables data engineers to build automated workflows rapidly while maintaining high throughput and performance as data volumes grow exponentially.

Top Data Streaming Platforms to Consider

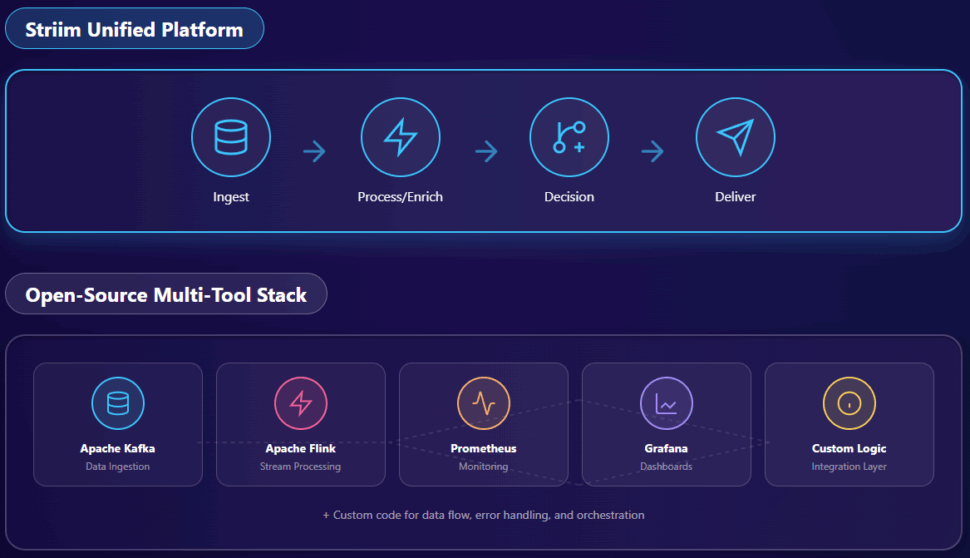

Striim

Striim is a real-time data streaming platform that continuously moves, processes, and analyzes data from various sources to multiple destinations. The platform specializes in change data capture (CDC), streaming ETL/ELT, and real-time data pipelines for enterprise environments.

Capabilities and Features

- Real-Time Data Integration: Captures and moves data from databases, log files, messaging systems, and cloud apps with sub-second latency. Supports 150+ pre-built connectors for sources and destinations.

- Change Data Capture (CDC): Captures database changes in real-time from Oracle, SQL Server, PostgreSQL, and MySQL. Enables zero-downtime migrations and continuous replication without impacting source systems.

- Streaming SQL and Analytics: Processes and transforms data in-flight using SQL-based queries and streaming analytics. Enables complex event processing, pattern matching, and real-time aggregations.

- In-Memory Processing: Delivers high-performance data processing with built-in caching and stateful stream processing. Handles millions of events per second with guaranteed delivery and exactly-once processing.

Key Use Cases

- Real-Time Data Warehousing: Continuously feeds data warehouses and data lakes with up-to-date information from operational systems. Enables near-real-time analytics without batch-processing delays.

- Operational Intelligence: Monitors business operations in real-time to detect anomalies, track KPIs, and trigger alerts. Supports fraud detection, customer experience monitoring, and supply chain optimization.

- Cloud Migration and Modernization: Migrates databases and applications from on-premises to the cloud with minimal downtime. Validates data integrity throughout migration and enables phased approaches.

- Real-Time Data Replication: Maintains synchronized copies of data across multiple systems to ensure high availability and disaster recovery. Supports active-active replication and multi-region deployments.

- IoT and Log Processing: Ingests and processes high-velocity data streams from IoT devices, sensors, and application logs. Performs real-time filtering, enrichment, and routing to appropriate destinations.

Pricing

Striim offers a free trial, followed by subscription and usage-based pricing that scales with data volume, connector mix, and deployment model (SaaS, private VPC/BYOC, or hybrid). Typical plans include platform access, core CDC/streaming features, and support SLAs, with enterprise options for advanced security, high availability, and premium support.

Who They’re Ideal For

Striim suits large enterprises and mid-market companies that require real-time data integration and streaming analytics, particularly those undergoing digital transformation or cloud migration. The platform serves companies with complex, heterogeneous environments that require continuous data movement across on-premises, cloud, and hybrid infrastructures, while maintaining sub-second latency.

Pros

- Easy Setup: The drag-and-drop interface simplifies pipeline creation and reduces learning curves. Users build data flows without extensive coding.

- Comprehensive Monitoring: Provides real-time dashboards and metrics for tracking pipeline performance. Visual tools help quickly identify and resolve issues.

- Strong Technical Support: A responsive and knowledgeable team provides hands-on assistance during implementation. Users appreciate direct access to experts who understand complex integration scenarios.

Cons

- High Cost: Enterprise pricing can be expensive for smaller organizations. Licensing scales with data volumes and connectors, quickly adding up.

- Performance at Scale: Some users experience degradation when processing very high data volumes or complex transformations. Large-scale deployments may require significant optimization.

- Connector Limitations: While offering many connectors, some lack maturity and specific features. Developing custom connectors for unsupported sources can be a complex process.

Apache Kafka

Apache Kafka is an open-source distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications. It processes and moves large volumes of data in real-time with high throughput and low latency.

Capabilities and Features

- Core Kafka Platform: Distributed streaming system scaling to thousands of brokers, handling trillions of messages daily, storing petabytes of data. Provides permanent storage with fault-tolerant clusters and high availability across regions.

- Kafka Connect: Out-of-the-box interface integrating with hundreds of event sources and sinks, including Postgres, JMS, Elasticsearch, and AWS S3. Enables seamless data integration without custom code.

- Kafka Streams: A lightweight stream processing library for building data processing pipelines. Enables joins, aggregations, filters, and transformations with event-time and exactly-once processing.

- Schema Registry (via Confluent): Central repository with a RESTful interface for defining schemas and registering applications. Supports Avro, JSON, and Protobuf formats, ensuring data compatibility.

- Client Libraries: Support for reading, writing, and processing streams in Java, Python, Go, C/C++, and .NET. Enables developers to work with Kafka using preferred languages.

Key Use Cases

- Messaging: High-throughput message broker decoupling data producers from processors. Provides better throughput, partitioning, replication, and fault-tolerance than traditional messaging systems.

- Website Activity Tracking: Rebuilds user activity tracking as real-time publish-subscribe feeds. Enables real-time processing of page views, searches, and user actions at high volumes.

- Log Aggregation: Replaces traditional solutions by abstracting files into message streams. Provides lower-latency processing and easier multi-source support with stronger durability.

- Stream Processing: Enables multi-stage pipelines where data is consumed, transformed, enriched, and published. Common in content recommendation systems and real-time dataflow graphs.

- Event Sourcing: Supports designs where state changes are logged as time-ordered records. Kafka’s storage capacity makes it excellent for maintaining complete audit trails.

- Operational Metrics: Aggregates statistics from distributed apps, producing centralized operational data feeds. Enables real-time monitoring and alerting across large-scale systems.

Pricing

Apache Kafka (Open Source): Free under Apache License v2. Confluent Cloud/Platform versions have separate pricing tiers (Basic, Standard, Enterprise) based on throughput and storage.Who They’re Ideal For

Apache Kafka suits Fortune 100 companies and large enterprises requiring high-performance data streaming at scale, including financial services, manufacturing, insurance, telecommunications, and technology. It’s ideal for organizations processing millions to trillions of messages daily with mission-critical reliability and exactly-once processing.

Pros

- High Performance and Scalability: Delivers messages at network-limited throughput with 2ms latencies, scaling elastically for massive data volumes. Expands and contracts storage and processing as needed.

- Reliability and Durability: Provides guaranteed ordering, zero message loss, and exactly-once processing for mission-critical use cases. Fault-tolerant design ensures data safety through replication.

- Rich Ecosystem: Offers 120+ pre-built connectors and multi-language support. Large open-source community provides extensive tooling and resources.

- Proven Enterprise Adoption: Trusted by 80% of Fortune 100 companies with thousands using it in production. With over 5 million lifetime downloads, this demonstrates widespread adoption.

Cons

- Operational Complexity: Requires significant expertise to deploy, configure, and maintain production clusters. Managing partitions, replication, and broker scaling challenges teams without automation.

- Learning Curve: The distributed nature and numerous configurations create a steep learning curve for teams new to stream processing. Understanding partitions, consumer groups, and offset management takes time.

- Resource Intensive: Requires substantial infrastructure for high-throughput scenarios. Storage and compute costs escalate with retention requirements and processing needs.

Oracle GoldenGate

Oracle GoldenGate is a long-standing, comprehensive software solution designed for real-time data replication and integration across heterogeneous environments. It is widely recognized for its ability to ensure high availability, transactional change data capture (CDC), and seamless replication between operational and analytical systems.

Capabilities and Features

- Oracle GoldenGate Core: Facilitates unidirectional, bidirectional, and multi-directional replication to support real-time data warehousing and load balancing across both relational and non-relational databases.

- Oracle Cloud Infrastructure (OCI) GoldenGate: A fully managed cloud service that automates data movement in real-time at scale, removing the need for manual compute environment management.

- GoldenGate Microservices Architecture: Provides modern management tools, including a web interface, REST APIs, and a command-line interface (Admin Client) for flexible deployment across distributed architectures.

- Data Filtering and Transformation: Enhances performance by replicating only relevant data subsets. It supports schema adaptation and data enrichment (calculated fields) in flight.

- GoldenGate Veridata: A companion tool that compares source and target datasets to identify discrepancies without interrupting ongoing transactions.

Key Use Cases

- Zero Downtime Migration: Critical for moving databases and platforms without service interruption, including specialized paths for migrating MongoDB to Oracle.

- High Availability (HA) and Disaster Recovery (DR): Keeps synchronized data copies across varying systems to ensure business continuity and operational resilience.

- Real-Time Data Integration: Captures transactional changes instantly, enabling live reporting and analytics on fresh operational data.

- Multi-System Data Distribution: Bridges legacy systems and modern platforms, handling different schemas and data types through advanced mapping.

- Compliance and Data Security: Filters sensitive data during replication to meet regulatory standards (e.g., GDPR, HIPAA) before it reaches target environments.

Pricing

GoldenGate uses a licensing model for self-managed environments and a metered model for its managed service on Oracle Cloud Infrastructure (OCI). Costs depend heavily on deployment type (on-prem vs. cloud), core counts, and optional features like Veridata. Enterprises typically require a custom quote from Oracle or a partner to determine exact licensing needs.

Who They’re Ideal For

Oracle GoldenGate is the go-to choice for large enterprises with complex, heterogeneous IT environments—particularly those heavily invested in the Oracle ecosystem. It is ideal for organizations where high availability, disaster recovery, and zero-downtime migration are non-negotiable requirements.

Pros

- Broad Platform Support: Compatible with a wide range of databases, including Oracle, SQL Server, MySQL, and PostgreSQL.

- Low Impact: Its log-based capture method ensures minimal performance overhead on source production systems.

- Flexible Topology: Supports complex configurations, including one-to-many, many-to-one, and cascading replication.

Cons

- High Cost: Licensing can be significantly more expensive than other market alternatives, especially for enterprise-wide deployment.

- Complexity: Requires specialized knowledge to implement and manage, often leading to a steep learning curve for new administrators.

- Resource Intensive: High-volume replication can demand substantial system resources, potentially requiring infrastructure upgrades.

Cloudera

Cloudera is a hybrid data platform designed to manage, process, and analyze data across on-premises, edge, and public cloud environments. Moving beyond its Hadoop roots, modern Cloudera offers unified data management with enterprise-grade security and governance for large-scale operations.

Capabilities and Features

- Cloudera Streaming: A real-time analytics platform powered by Apache Kafka for ingestion and buffering, complete with monitoring via Streams Messaging Manager.

- Cloudera Data Flow: A comprehensive management layer for collecting and moving data from any source to any destination, featuring no-code ingestion for edge-to-cloud workflows.

- Streams Replication Manager: Facilitates cross-cluster Kafka data replication, essential for disaster recovery and data availability in hybrid setups.

- Schema Registry: Provides centralized governance and metadata management to ensure consistency and compatibility across streaming applications.

Key Use Cases

- Hybrid Cloud Streaming: Extends on-premises data capabilities to the cloud, allowing for seamless collection and processing across disparate environments.

- Real-Time Data Marts: Supports high-volume, fast-arriving data streams that need to be immediately available for time-series applications and analytics.

- Edge-to-Cloud Data Movement: Captures IoT and sensor data at the edge and moves it securely to cloud storage or processing engines.

Pricing

Cloudera operates on a “Cloudera Compute Unit” (CCU) model for its cloud services. Different services (Data Engineering, Data Warehouse, Operational DB) have different per-CCU costs ranging roughly from $0.04 to $0.30 per CCU. On-premises deployments generally require custom sales quotes.

Who They’re Ideal For

Cloudera is best suited for large, regulated enterprises managing petabyte-scale data across hybrid environments. It fits organizations that need strict data governance and security controls while processing both batch and real-time streaming workloads.

Pros

- Unified Platform: Offers an all-in-one suite for ingestion, processing, warehousing, and machine learning.

- Hybrid Capability: Strong support for organizations that cannot move entirely to the public cloud and need robust on-prem tools.

- Security & Governance: Built with enterprise compliance in mind, offering unified access controls and encryption.

Cons

- Steep Learning Curve: The ecosystem is vast and complex, often requiring significant training and expertise to manage effectively.

- High TCO: Between licensing, infrastructure, and the personnel required to manage it, the total cost of ownership can be high.

- Heavy Infrastructure: Requires significant hardware resources to run efficiently, especially for on-prem deployments.

Confluent

Confluent is the enterprise distribution of Apache Kafka, founded by the original creators of Kafka. It transforms Kafka from a raw open-source project into a complete, enterprise-grade streaming platform available as a fully managed cloud service or self-managed software.

Capabilities and Features

- Confluent Cloud: A fully managed, cloud-native service available on AWS, Azure, and Google Cloud. It features serverless clusters that autoscale based on demand.

- Confluent Platform: A self-managed distribution for on-premises or private cloud use, adding features like automated partition rebalancing and tiered storage.

- Pre-built Connectors: Access to 120+ enterprise-grade connectors (including CDC for databases and legacy mainframes) to speed up integration.

- Stream Processing (Flink): Integrated support for Apache Flink allows for real-time data transformation and enrichment with low latency.

- Schema Registry: A centralized hub for managing data schemas (Avro, JSON, Protobuf) to prevent pipeline breakage due to format changes.

Key Use Cases

- Event-Driven Microservices: Acts as the central nervous system for microservices, decoupling applications while ensuring reliable communication.

- Real-Time CDC: Captures and streams changes from databases like PostgreSQL and Oracle for immediate use in analytics and apps.

- Legacy Modernization: Bridges the gap between legacy mainframes/databases and modern cloud applications.

- Context-Rich AI: Feeds real-time data streams into AI/ML models to ensure inference is based on the absolute latest data.

Pricing

Confluent Cloud offers three tiers:

- Basic: Pay-as-you-go with no base cost (just throughput/storage).

- Standard: An hourly base rate plus throughput/storage costs.

- Enterprise: Custom pricing for mission-critical workloads with enhanced security and SLAs.

Note: Costs can scale quickly with high data ingress/egress and long retention periods.

Who They’re Ideal For

Confluent is the default choice for digital-native companies and enterprises that want the power of Kafka without the headache of managing it. It is ideal for financial services, retail, and tech companies building mission-critical, event-driven applications.

Pros

- Kafka Expertise: As the commercial entity behind Kafka, they offer unmatched expertise and ecosystem support.

- Fully Managed: Confluent Cloud removes the significant operational burden of managing Zookeeper and brokers.

- Rich Ecosystem: The vast library of connectors and the Schema Registry significantly reduce development time.

Cons

- Cost at Scale: Usage-based billing can become expensive for high-throughput or long-retention use cases.

- Vendor Lock-in: Relying on Confluent-specific features (like their specific governance tools or managed connectors) can make it harder to migrate back to open-source Kafka later.

- Egress Fees: Moving data across different clouds or regions can incur significant networking costs.

Estuary Flow

Estuary Flow is a newer entrant focusing on unifying CDC and stream processing into a single, developer-friendly managed service. It aims to replace fragmented stacks (like Kafka + Debezium + Flink) with one cohesive tool offering predictable pricing.

Capabilities and Features

- Real-Time CDC: Specialized in capturing database changes with millisecond latency and minimal source impact.

- Unified Processing: Combines streaming and batch paradigms, allowing you to handle historical backfills and real-time streams in the same pipeline.

- Dekaf (Kafka API): A compatibility layer that allows Flow to look and act like Kafka to existing tools, without the user managing clusters.

- Built-in Transformations: Supports SQL and TypeScript for in-flight data reshaping.

Key Use Cases

- Real-Time ETL/ELT: Automates the movement of data from operational DBs to warehouses like Snowflake or BigQuery with automatic schema evolution.

- Search & AI Indexing: Keeps search indexes (like Elasticsearch) and AI vector stores in sync with the latest data.

- Transaction Monitoring: Useful for E-commerce and Fintech to track payments and inventory in real-time.

Pricing

- Free Tier: Generous free allowance (e.g., up to 10GB/month) for testing.

- Cloud Plan: $0.50/GB + fee per connector.

- Enterprise: Custom pricing for private deployments and advanced SLAs.

Who They’re Ideal For

Estuary Flow is excellent for engineering teams that need “Kafka-like” capabilities and reliable CDC but don’t want to manage the infrastructure. It fits startups and mid-market companies looking for speed-to-implementation and predictable costs.

Pros

- Simplicity: Consolidates ingestion, storage, and processing, reducing the “integration sprawl.”

- Backfill + Stream: Uniquely handles historical data and real-time data in one continuous flow.

- Developer Experience: Intuitive UI and CLI with good documentation for rapid setup.

Cons

- Younger Ecosystem: Fewer pre-built connectors compared to mature giants like Striim or Confluent.

- Documentation Gaps: As a newer platform, some advanced configurations may lack deep documentation.

- Limited Customization: The “opinionated” nature of the platform may be too restrictive for highly bespoke enterprise architectures.

Azure Stream Analytics

Azure Stream Analytics is Microsoft’s serverless real-time analytics service. It is deeply integrated into the Azure ecosystem, allowing users to run streaming jobs using SQL syntax without provisioning clusters.

Capabilities and Features

- Serverless: Fully managed PaaS; you pay only for the streaming units (SUs) you use.

- SQL-Based: Uses a familiar SQL language (extensible with C# and JavaScript) to define stream processing logic.

- Hybrid Deployment: Can run analytics in the cloud or at the “Edge” (e.g., on IoT devices) for ultra-low latency.

- Native Integration: One-click connectivity to Azure Event Hubs, IoT Hub, Blob Storage, and Power BI.

Key Use Cases

- IoT Dashboards: Powering real-time Power BI dashboards from sensor data.

- Anomaly Detection: Using built-in ML functions to detect spikes or errors in live data streams.

- Clickstream Analytics: Analyzing user behavior on web/mobile apps in real-time.

Pricing

Priced by “Streaming Units” (a blend of compute/memory) per hour. Standard rates apply, but costs can be unpredictable if job complexity requires scaling up SUs unexpectedly.

Who They’re Ideal For

This is the obvious choice for organizations already committed to the Microsoft Azure stack. It is perfect for teams that want to stand up streaming analytics quickly using existing SQL skills without managing infrastructure.

Pros

- Ease of Use: If you know SQL, you can write a stream processing job.

- Quick Deployment: Serverless nature means you can go from zero to production in minutes.

- Azure Synergy: Unmatched integration with other Azure services.

Cons

- Vendor Lock-in: It is strictly an Azure tool; not suitable for multi-cloud strategies.

- Cost Complexity: Estimating the required “Streaming Units” for a workload can be difficult.

- Advanced Limitations: Complex event processing patterns can be harder to implement compared to full-code frameworks like Flink.

Redpanda

Redpanda is a modern, high-performance streaming platform designed to be a “drop-in” replacement for Apache Kafka. It is written in C++ (removing the Java/JVM dependency) and uses a thread-per-core architecture to deliver ultra-low latency.

Capabilities and Features

- Kafka Compatibility: Works with existing Kafka tools, clients, and ecosystem—no code changes required.

- No Zookeeper: Removes the complexity of managing Zookeeper; it’s a single binary that is easy to deploy.

- Redpanda Connect: Includes extensive connector support (formerly Benthos) for building pipelines via configuration.

- Tiered Storage: Offloads older data to object storage (like S3) to reduce costs while keeping data accessible.

Key Use Cases

- Ultra-Low Latency: High-frequency trading, ad-tech, and gaming where every millisecond counts.

- Edge Deployment: Its lightweight binary makes it easy to deploy on edge devices or smaller hardware footprints.

Simplified Ops: Teams that want Kafka APIs but hate managing JVMs and Zookeeper.

Pricing

- Serverless: Usage-based pricing for easy starting.

- BYOC (Bring Your Own Cloud): Runs in your VPC but managed by Redpanda; priced based on throughput/cluster size.

Who They’re Ideal For

Redpanda is ideal for performance-obsessed engineering teams, developers who want a simplified “Kafka” experience, and use cases requiring the absolute lowest tail latencies (e.g., financial services, ad-tech).

Pros

- Performance: C++ architecture delivers significantly lower latency and higher throughput per core than Java-based Kafka.

- Operational Simplicity: Single binary, no Zookeeper, and built-in autotuning make it easier to run.

- Developer Friendly: Great CLI and tooling designed for modern DevOps workflows.

Cons

- Smaller Community: While growing fast, it lacks the decade-long community knowledge base of Apache Kafka.

- Feature Parity: Some niche Kafka enterprise features may not be 1:1 (though the gap is closing).

- Management UI: The built-in console is good but may not cover every advanced admin workflow compared to mature competitors.

Frequently Asked Questions About Data Streaming Platforms

- What’s the difference between a data streaming platform and a message queue? Data streaming platforms offer persistent, ordered event logs that multiple consumers can read independently, often featuring advanced capabilities such as complex event processing, stateful transformations, and built-in analytics. Traditional message queues typically delete messages after consumption and focus primarily on point-to-point messaging, lacking the same level of data retention and replayability.

- How do data streaming platforms handle schema evolution? Most modern platforms support schema registries that manage versioning and compatibility rules (e.g., Avro, Protobuf). These registries enforce checks when producers evolve their data structures, preventing breaking changes and ensuring downstream consumers don’t fail when a field is added or changed.

- What are the typical latency ranges for different platforms? Latency varies by architecture. High-performance platforms like Redpanda or Striim can achieve sub-millisecond to single-digit millisecond latencies. Traditional Kafka deployments typically operate in the 5-20ms range, while cloud-managed services may see 50-500ms depending on network conditions and configuration.

- How do you monitor streaming pipelines in production? Effective monitoring requires tracking key metrics like consumer lag (how far behind a consumer is), throughput (messages/sec), and error rates. Most platforms provide built-in dashboards, but enterprise teams often integrate these metrics into tools like Datadog, Prometheus, or Grafana.

- What are the security considerations? Security in streaming involves multiple layers: Encryption in transit (TLS/SSL), encryption at rest for persistent data, authentication (SASL/OAuth) for client connections, and authorization (ACLs/RBAC) to control who can read/write to specific topics. Compliance with standards like SOC 2 and GDPR is also a critical factor for enterprise selection.

![MCP [Un]plugged: Trust, Autonomy & MCP](https://striim.vibrilliant.com/wp-content/uploads/2024/06/striim_blog_backgrounds_AI_11.webp)

While this native system offers a basic form of CDC, it was not designed for the high-volume, low-latency demands of modern cloud architectures. The SQL Server Agent jobs and the constant writing to change tables introduce performance overhead (added I/O and CPU) that can directly impact your production database, especially at scale.

While this native system offers a basic form of CDC, it was not designed for the high-volume, low-latency demands of modern cloud architectures. The SQL Server Agent jobs and the constant writing to change tables introduce performance overhead (added I/O and CPU) that can directly impact your production database, especially at scale.