Customer expectations have evolved beyond simply receiving timely responses. Consumers now expect personalized experiences in which every interaction with a brand feels relevant to them.

To meet these rising expectations, businesses are investing in real-time customer analytics—a strategic approach that enables them to understand, predict, and respond to customer behavior as it happens. In fact, according to a Gartner survey, nearly 80% of companies are increasing their investments in customer experience initiatives to stay competitive in the digital age. The result? An enhanced customer experience that drives loyalty, revenue growth, and sustainable success.

The Importance of Delivering Instant, Personalized Experiences

Generic messaging no longer appeals to today’s customers; they expect more. They want to feel understood, valued, and personally connected to brands. Imagine visiting a website that recognizes your unique preferences and offers suggestions that truly resonate with your lifestyle. Instead of encountering one-size-fits-all content, the experience adapts to you, highlighting products that complement your previous choices or tailoring messages to your local context and current environment.

This personalized touch transforms the way you interact with a brand. It creates a sense of ease and relevance, making you feel like the brand truly “gets” you. When every interaction feels thoughtfully designed around your needs, it not only enhances your shopping journey but also builds trust and fosters loyalty. In a world where time is precious and options are abundant, these tailored experiences become the key to turning a casual browser into a dedicated customer.

Now, let’s dive into how real-time data and analytics tie in.

How Real-Time Data Directly Contributes to Customer Experience

Real-time analytics is only as effective as the data it relies on. To truly transform customer interactions, brands must harness up-to-the-minute information that reflects every nuance of customer behavior. Without this dynamic input, any attempt at personalization risks being outdated by the time it reaches the customer. Real-time data empowers companies to analyze interactions across various channels—whether online or in-store—and immediately adjust the experience to meet individual needs. This agility can be the difference between a one-size-fits-all approach and a truly engaging, bespoke customer journey.

This instant personalization is built on a well-structured data strategy that combines three key types of data:

- First-Party Data: This is data directly collected from your owned channels, such as your website and mobile apps.

- Second-Party Data: Sourced from trusted partners who share insights from their own interactions with customers, this data broadens your understanding and complements the first-party data you collect directly.

- Third-Party Data: Acquired from data aggregators, this information can enrich your insights, offering a broader market perspective. However, it must be used judiciously, especially in light of evolving privacy regulations.
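One way to picture how these three tiers come together is a profile merge in which more-trusted sources override less-trusted ones and third-party data is used only when the customer has consented. The sketch below is illustrative only: the tier names, the `(tier, attributes)` record shape, the `SOURCE_PRIORITY` ordering, and the single consent flag are all assumptions, not how any particular platform resolves conflicts.

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical trust ordering: lower number = more trusted source tier.
SOURCE_PRIORITY = {"first_party": 0, "second_party": 1, "third_party": 2}


@dataclass
class CustomerProfile:
    """A unified view of one customer assembled from several data sources."""
    customer_id: str
    attributes: dict[str, Any] = field(default_factory=dict)
    # Records which source tier supplied each attribute's current value.
    sources: dict[str, str] = field(default_factory=dict)


def build_profile(customer_id: str,
                  records: list[tuple[str, dict[str, Any]]],
                  third_party_consent: bool) -> CustomerProfile:
    """Merge (source_tier, attributes) records into a single profile.

    Third-party records are skipped unless the customer has consented.
    When tiers disagree on an attribute, the more-trusted tier wins.
    """
    profile = CustomerProfile(customer_id)
    # Apply the least-trusted tiers first so more-trusted tiers overwrite them.
    ordered = sorted(records, key=lambda r: SOURCE_PRIORITY[r[0]], reverse=True)
    for tier, attrs in ordered:
        if tier == "third_party" and not third_party_consent:
            continue
        for key, value in attrs.items():
            profile.attributes[key] = value
            profile.sources[key] = tier
    return profile
```

With `third_party_consent=False`, a third-party record is ignored entirely; with consent, its attributes are added, but if it disagrees with first-party data (say, on a customer's city), the first-party value is kept.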

By integrating these diverse data sources, companies can transform raw information into actionable insights. Every customer touchpoint—be it browsing a website, receiving an email, or visiting a store—can be optimized in real time, ensuring that each interaction is as engaging and relevant as possible.

Yet, while the benefits of real-time data are clear, many companies still struggle with the necessary infrastructure. Legacy systems, siloed databases, and outdated analytics tools often impede the swift collection, processing, and application of data.

Without a modern, agile data infrastructure, even the best personalization strategies can falter, resulting in delayed interactions and missed opportunities to connect with customers when it matters most. To fully leverage real-time data for a superior customer experience, businesses must invest in robust, scalable systems that can keep pace with the rapid flow of information in today’s digital landscape.

Enhancing the Entire Customer Journey

A holistic view of the customer journey is crucial in today’s competitive landscape. Real-time analytics offers a comprehensive look at every step a customer takes—from initial awareness to post-purchase engagement. This continuous flow of data allows companies to identify bottlenecks, understand how customers interact with various touchpoints, and make immediate improvements where needed.

For example, if analytics reveal that a particular webpage is causing customers to drop off during the checkout process, a real-time alert can prompt the team to investigate and optimize the page—whether by simplifying the form, improving the user interface, or even offering a live support chat. Similarly, journey reports and attribution analyses help trace the paths that lead to successful conversions, enabling brands to replicate positive experiences across other channels.
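The checkout alert in this example can be sketched as a sliding-window monitor: each checkout session reports whether it completed or was abandoned, and the monitor flags when the abandonment rate over the last few minutes crosses a threshold. The class name, parameters, and default values below are hypothetical, and in practice this logic would more likely run inside a streaming platform than in a single Python process.

```python
from collections import deque


class CheckoutDropOffMonitor:
    """Tracks checkout outcomes over a sliding time window and flags spikes
    in the abandonment rate. Window size, threshold, and minimum sample size
    are illustrative assumptions, not recommended values.
    """

    def __init__(self, window_seconds=300, threshold=0.5, min_sessions=20):
        self.window_seconds = window_seconds
        self.threshold = threshold        # alert when the abandon rate exceeds this
        self.min_sessions = min_sessions  # don't alert on tiny samples
        self.events = deque()             # (timestamp, abandoned) in arrival order
        self.abandoned = 0                # running count of abandoned checkouts in window

    def record(self, timestamp, abandoned):
        """Add one checkout outcome; return True if an alert should fire.

        Assumes events arrive in non-decreasing timestamp order.
        """
        self.events.append((timestamp, abandoned))
        if abandoned:
            self.abandoned += 1
        # Evict outcomes that have slid out of the window.
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            _, was_abandoned = self.events.popleft()
            if was_abandoned:
                self.abandoned -= 1
        return self.should_alert()

    def drop_off_rate(self):
        """Fraction of checkouts in the current window that were abandoned."""
        return self.abandoned / len(self.events) if self.events else 0.0

    def should_alert(self):
        return (len(self.events) >= self.min_sessions
                and self.drop_off_rate() > self.threshold)
```

Keeping a running count of abandoned sessions means each new event costs only the evictions it triggers, rather than a rescan of the whole window.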

By continuously monitoring the customer journey and making data-driven adjustments, companies can ensure a smoother, more engaging experience that evolves alongside customer needs.

How to Implement Real-Time Analytics to Improve Customer Experience

Transitioning to real-time analytics might seem like a daunting, resource-intensive task, but a strategic, phased approach can make the process manageable and highly effective.

Here’s how to begin.

Start with High-Impact Use Cases

Focus initially on the areas where real-time data can make the most significant impact—such as personalization and loyalty. This allows your team to see immediate benefits and build internal support for broader initiatives.

Integrate Across Channels

Ensure your data infrastructure can handle inputs from various sources—online interactions, in-store purchases, mobile app engagements, and more. A unified view of customer behavior is key to delivering truly personalized experiences.
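A unified view can be illustrated by merging per-channel event streams into a single time-ordered timeline for each customer. This sketch assumes a hypothetical `Event` shape and that every channel already tags events with a shared customer ID; in practice, matching identities across channels is often the harder part.

```python
import heapq
from collections import defaultdict
from typing import NamedTuple


class Event(NamedTuple):
    timestamp: float  # seconds since epoch
    customer_id: str  # assumed to be shared across channels
    channel: str      # e.g. "web", "store", "app"
    action: str       # e.g. "view", "purchase"


def unified_timelines(*channel_streams):
    """Merge per-channel event streams into one time-ordered timeline per customer.

    Assumes each input stream is already sorted by timestamp, as an
    append-only event log would be; heapq.merge then interleaves them lazily.
    """
    timelines = defaultdict(list)
    for event in heapq.merge(*channel_streams, key=lambda e: e.timestamp):
        timelines[event.customer_id].append(event)
    return timelines
```

For instance, a web view, an app view, and an in-store purchase by the same customer come out as one ordered sequence, which is the raw material for channel-aware personalization.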

Leverage Scalable Platforms

Platforms like Striim offer robust solutions that combine data ingestion, processing, and analytics in one place. These tools are designed to grow with your needs, helping you integrate third-party data where appropriate and maintain compliance with evolving privacy standards.

Optimize Continuously

Use the insights gained from real-time data not just to react, but to proactively enhance the customer journey. Experiment with different loyalty strategies, test new personalization tactics, and refine your approach based on what the data tells you.

Looking Ahead: The Future of Customer Analytics

As technology advances, real-time analytics is poised to become even more integral to customer experience strategies. The evolution of AI and machine learning is enabling businesses to not only react to customer behavior but also predict it. This predictive capability means that brands are starting to anticipate customer needs before they arise, offering proactive recommendations and solutions that further enhance satisfaction and loyalty.

Emerging technologies, such as the Internet of Things (IoT), are also broadening the spectrum of available data. By integrating IoT devices, companies can gain insights into customer behavior in physical spaces—such as tracking in-store movements or monitoring product interactions—thereby adding another layer of depth to the customer experience.

In this new era, success is defined by the ability to blend data-driven insights with human creativity, crafting experiences that feel both personalized and authentic.

The Role of AI in Real-Time Analytics

By combining AI with real-time analytics, using real-time data integration platforms like Striim alongside AI-ready cloud data warehouses like Snowflake, businesses can create hyper-personalized, adaptive experiences that drive deeper customer connections and long-term loyalty.

Real-World Example: Morrisons

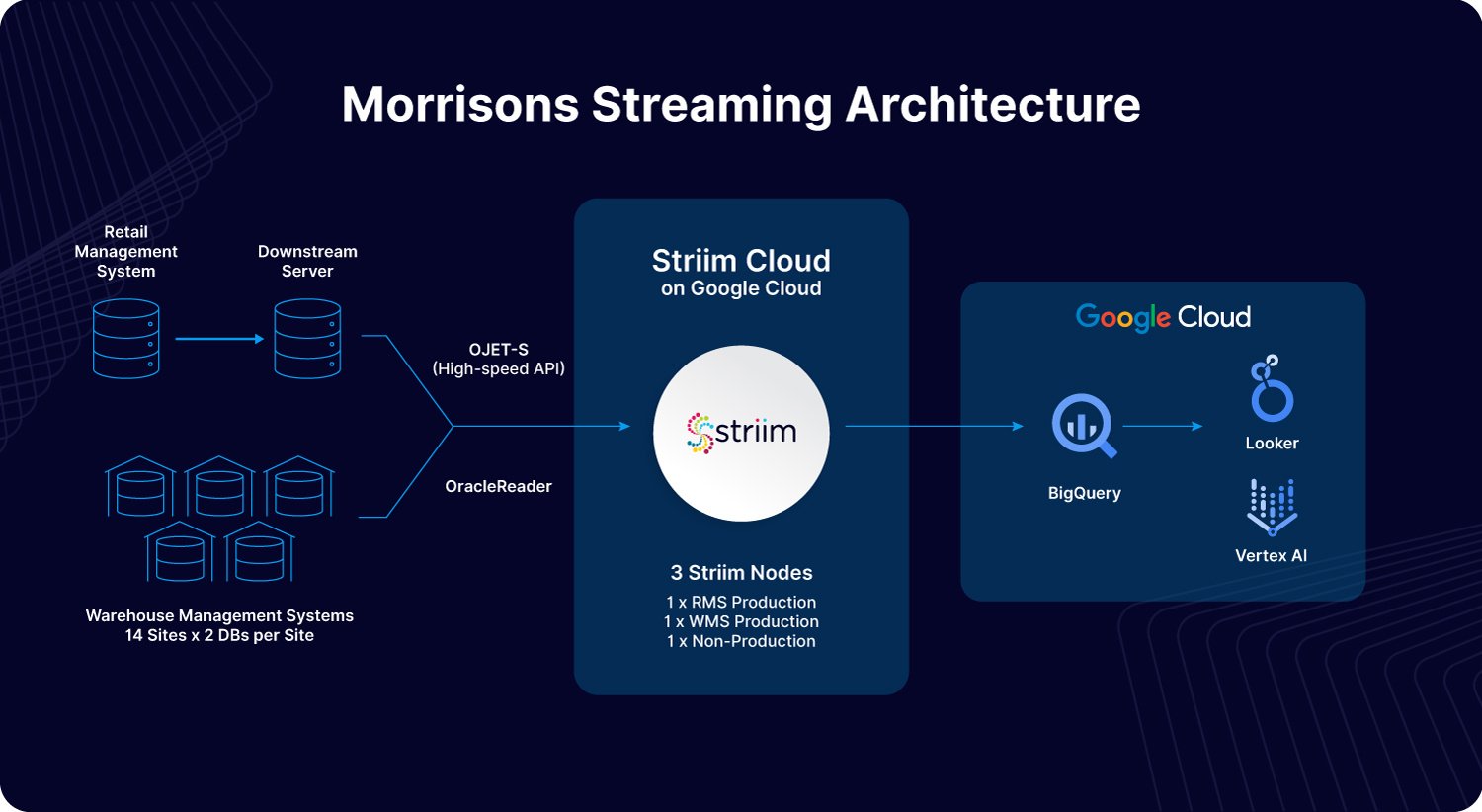

Morrisons, one of the UK’s largest supermarket chains, has embraced real-time analytics to elevate its customer experience. By integrating critical data from its Retail Management System (RMS) and Warehouse Management System (WMS) into Google BigQuery via Striim, Morrisons now gains immediate visibility into stock levels and product availability.

This shift from batch processing to real-time data access enables the company to promptly identify and resolve inventory issues, optimize replenishment, and ensure that shelves are consistently stocked. As a result, customers enjoy a more reliable and satisfying shopping experience—whether they’re shopping in-store or online—with up-to-date product information and timely promotions that cater to their needs.
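The difference between batch and real-time inventory data can be illustrated with a tracker that applies each stock change as it arrives and flags an item the moment it falls below its reorder point. This is a hypothetical sketch for illustration only: it does not describe Morrisons' actual RMS/WMS pipeline, and the `StockTracker` class, the reorder points, and the event shape are all assumptions.

```python
from collections import defaultdict


class StockTracker:
    """Hypothetical sketch: keeps per-store stock levels current by applying
    each inventory event as it arrives, instead of waiting for a batch load.
    """

    def __init__(self, reorder_points):
        # reorder_points maps (store_id, sku) -> minimum acceptable quantity
        self.reorder_points = reorder_points
        self.levels = defaultdict(int)

    def apply(self, store_id, sku, delta):
        """Apply a stock change (positive for deliveries, negative for sales).

        Returns True only when this change moves the item from at-or-above
        its reorder point to below it, so each shortfall is flagged once.
        """
        key = (store_id, sku)
        before = self.levels[key]
        self.levels[key] = before + delta
        threshold = self.reorder_points.get(key, 0)
        return before >= threshold > self.levels[key]
```

Flagging only the crossing, rather than every event while stock is low, keeps replenishment alerts from repeating on each subsequent sale.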

The Future of Customer Experience is Here

Real-time analytics is no longer a futuristic concept—it is the foundation of modern customer engagement. By enabling instantaneous personalization and a continuously optimized customer journey, real-time analytics helps brands build lasting, meaningful relationships with their customers.

For companies looking to embark on this journey, starting small and building on high-impact use cases can pave the way for a comprehensive transformation. With strategic tools and platforms available today, the path to delivering truly exceptional customer experiences is clearer than ever. Ready to discover how Striim can help your business leverage real-time data and analytics to enhance customer experience? Get a demo today.