With the help of real-time machine learning (ML) analytics, it’s possible to overhaul your decision-making processes to be more efficient, accurate, and fast.

Thanks to advanced real-time ML analytics, you can gain access to personalized recommendations, leverage continuous performance monitoring, harness the power of predictive analytics, and more — all in real time. As a result, your business becomes more agile and gains an advantage over slow-moving, outdated competitors.

In this blog post, we’ll walk you through what you need to know about using advanced real-time ML to make better business decisions, so you’ll be equipped with the knowledge and tools necessary to take your company to the next level.

What is Real-Time ML Analytics?

Real-time ML analytics refers to the process of applying ML algorithms to data as it is created, enabling businesses to derive insights and make decisions in near real time. Unlike traditional batch processing, where data is collected, stored, and analyzed at a later time, real-time processing analyzes each record shortly after it arrives, keeping delays to a minimum even for high-velocity data sets.
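To make the contrast with batch processing concrete, here is a minimal Python sketch. It is illustrative only and not tied to any Striim API; the function names and readings are hypothetical. The batch version can only answer once all data has been collected, while the streaming version updates its answer in constant time as each value arrives.

```python
from typing import Iterable, Iterator, List


def batch_mean(values: List[float]) -> float:
    """Batch style: wait until every value has been collected, then compute once."""
    return sum(values) / len(values)


def streaming_means(values: Iterable[float]) -> Iterator[float]:
    """Streaming style: update the result incrementally as each value arrives."""
    count = 0
    mean = 0.0
    for x in values:
        count += 1
        mean += (x - mean) / count  # incremental mean update, O(1) work per event
        yield mean                  # an up-to-date answer after every event


readings = [10.0, 12.0, 11.0, 15.0]
print(batch_mean(readings))             # 12.0 (one answer, only at the end)
print(list(streaming_means(readings)))  # [10.0, 11.0, 11.0, 12.0]
```

Both approaches reach the same final value; the difference is that the streaming version has a usable answer after every event instead of only after the batch closes.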

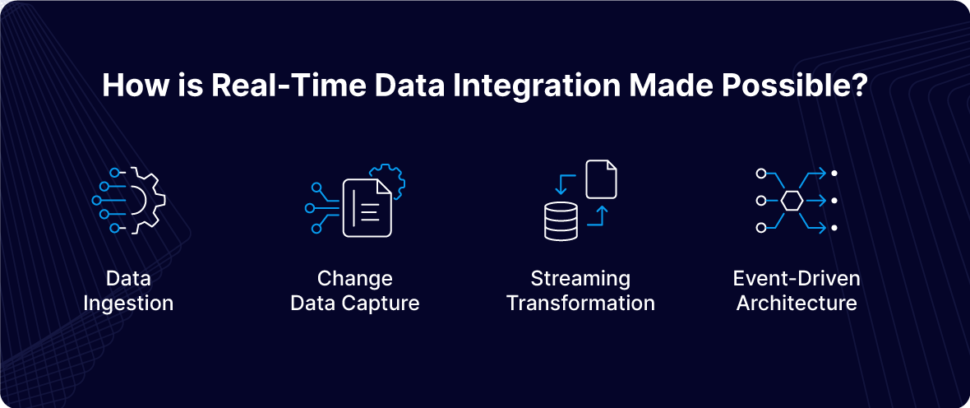

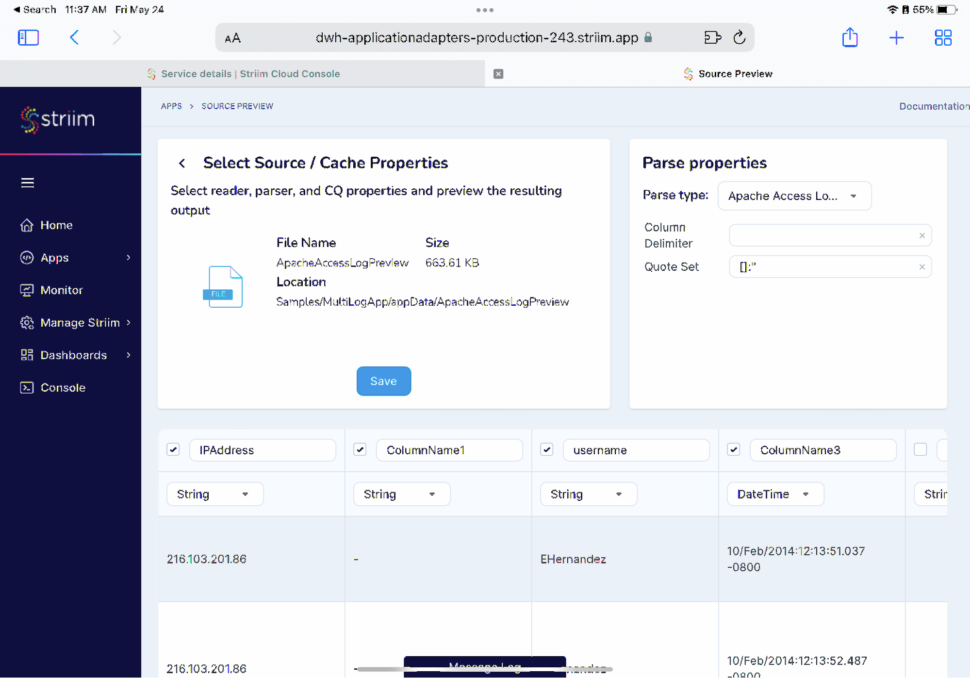

The first step in real-time ML analytics is data ingestion, where data from various sources, such as Internet of Things (IoT) devices, social media, transaction systems, and logs, is continuously collected. This data must be ingested with minimal latency to ensure it is available for immediate processing.
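As a rough illustration of continuous ingestion from several sources, the sketch below merges hypothetical IoT, transaction, and log streams into a single time-ordered stream using Python’s `heapq.merge`. It assumes each source already delivers its events in timestamp order, and the `Event` schema is invented for this example; in production, streaming connectors or a message broker do this work rather than in-memory lists.

```python
import heapq
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Event:
    source: str    # which system produced the event (hypothetical schema)
    ts: float      # event time in seconds
    payload: dict


def ingest(*streams: Iterable[Event]) -> Iterator[Event]:
    """Merge several time-ordered source streams into one time-ordered stream.

    heapq.merge is lazy: it pulls the next event from a source only when needed,
    so downstream processing can start before any source is exhausted.
    """
    yield from heapq.merge(*streams, key=lambda e: e.ts)


iot = [Event("iot", 1.0, {"temp": 21.5}), Event("iot", 3.0, {"temp": 22.1})]
txns = [Event("txn", 2.0, {"amount": 40.0})]
logs = [Event("log", 0.5, {"msg": "service started"})]

for event in ingest(iot, txns, logs):
    print(event.ts, event.source)  # 0.5 log, 1.0 iot, 2.0 txn, 3.0 iot
```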

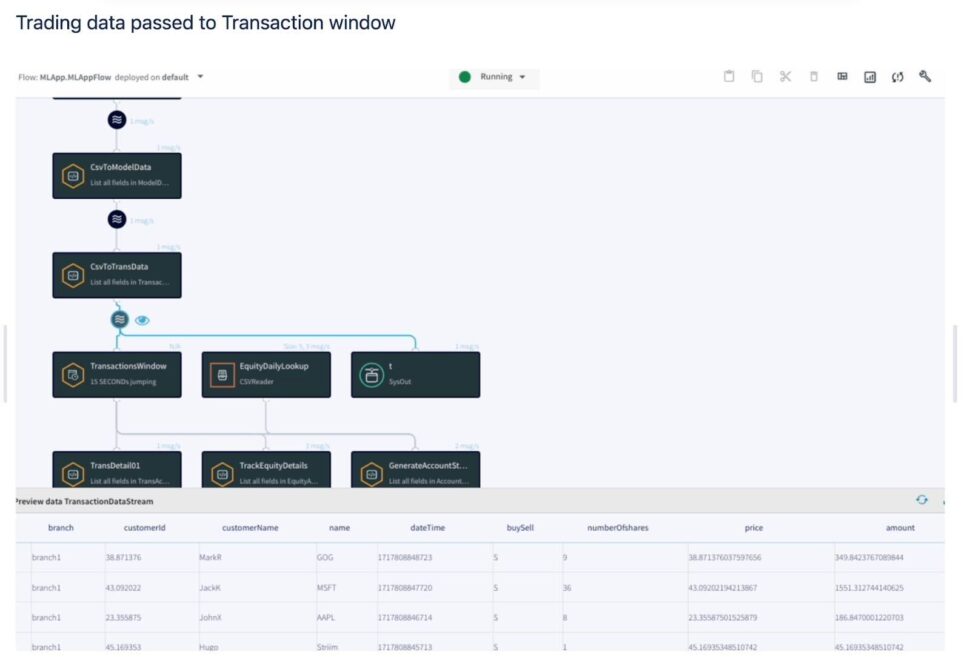

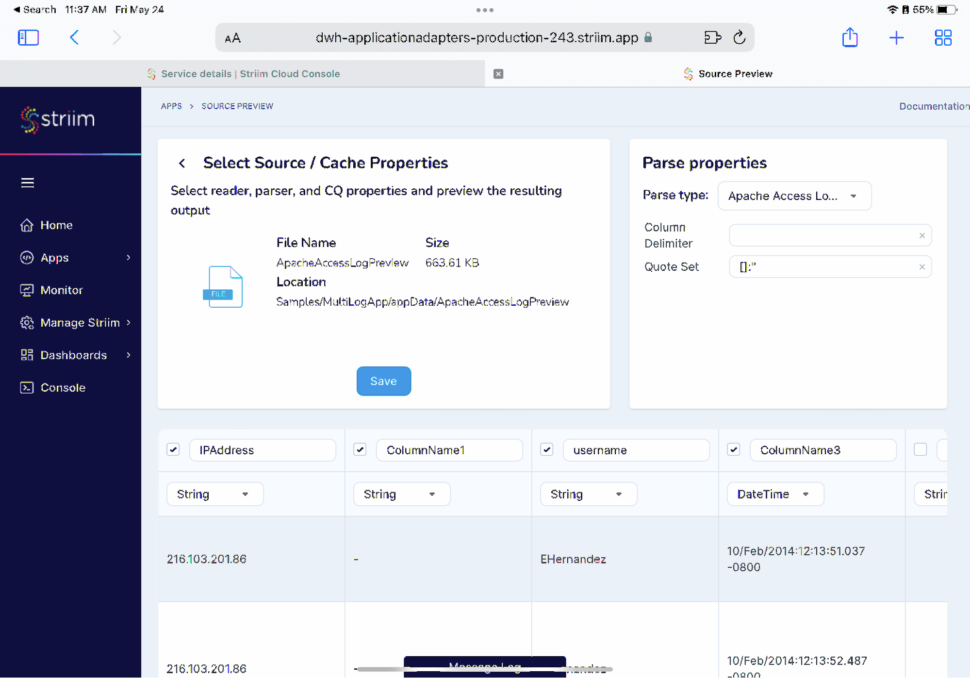

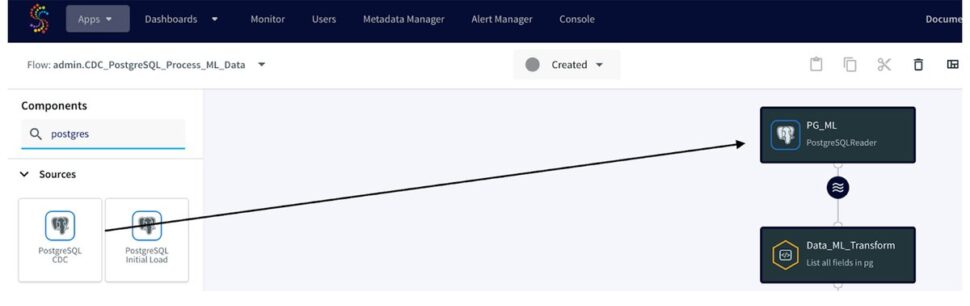

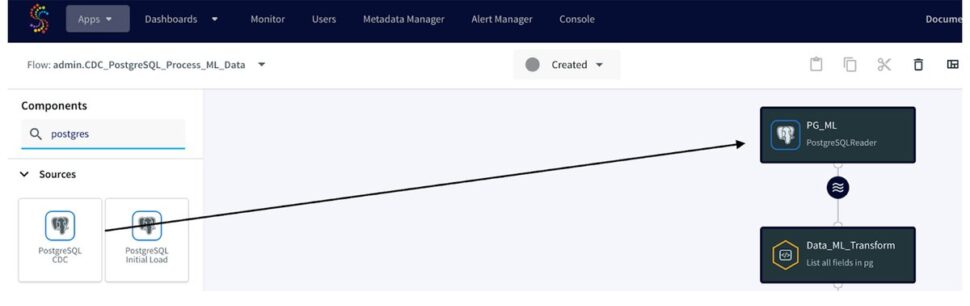

For instance, you can use Striim’s suite of 150 real-time streaming connectors to ingest and parse data from databases (via log-based change data capture, or CDC), IoT network protocols, and unstructured log data.

Once ingested, the data needs to be processed to extract relevant features for the ML models. This involves examining, cleaning, transforming, and interpreting the data so that it is in a usable format. Rigorous cleaning and normalization improve data quality and consistency, which in turn gives your team more confidence in the data-driven decisions it makes.
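Cleaning and normalization can also happen incrementally. The following sketch assumes a hypothetical record schema with a single `amount` field: it drops records whose amount is missing or unparseable, then converts valid amounts into z-scores using Welford’s online algorithm, so the mean and standard deviation never have to be recomputed over the full history.

```python
import math
from typing import Optional


class RunningStats:
    """Welford's online algorithm: running mean and variance in O(1) per value."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        # Sample standard deviation; treated as 0 with fewer than 2 values.
        return math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else 0.0


def clean(record: dict) -> Optional[dict]:
    """Return a copy with `amount` coerced to float, or None if it is unusable."""
    raw = record.get("amount")
    if raw is None or raw == "":
        return None
    try:
        return {**record, "amount": float(raw)}
    except (TypeError, ValueError):
        return None


def z_score(x: float, stats: RunningStats) -> float:
    return 0.0 if stats.std == 0 else (x - stats.mean) / stats.std


stats = RunningStats()
features = []
incoming = [{"amount": "10"}, {"amount": None}, {"amount": "20"}, {"amount": "abc"}, {"amount": "30"}]
for record in incoming:
    cleaned = clean(record)
    if cleaned is None:
        continue  # skip bad records rather than feed them to the model
    stats.update(cleaned["amount"])
    features.append(z_score(cleaned["amount"], stats))

print(stats.n, stats.mean, stats.std)  # 3 20.0 10.0
```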

After processing, the data is fed into ML models for inference. In real-time analytics, pre-trained models are typically used to ensure rapid predictions. These models can be anything from simple regression models to complex neural networks, depending on the application. The insights derived from the ML models are then used to make decisions.
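To show why inference with a pre-trained model is fast, here is a sketch of scoring a single event with a logistic regression model. The weights, bias, and feature names are hypothetical placeholders for values that would come from offline training on historical data. Scoring is just a weighted sum and a sigmoid, which is cheap enough to run on every event.

```python
import math

# Hypothetical parameters learned offline; in practice they are loaded from a model artifact.
WEIGHTS = {"amount_z": 1.8, "txns_last_minute": 0.9}
BIAS = -4.0


def sigmoid(z: float) -> float:
    # Numerically stable logistic function (avoids overflow for large |z|).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(features: dict) -> float:
    """Probability that the event is positive (e.g. fraudulent) under the fixed model."""
    z = BIAS + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return sigmoid(z)


print(round(predict_proba({"amount_z": 0.1, "txns_last_minute": 1}), 3))  # 0.051
print(round(predict_proba({"amount_z": 3.0, "txns_last_minute": 4}), 3))  # 0.993
```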

These decisions can be automated, such as triggering an alert for fraudulent activity or adjusting the price of a product in real time, or they can be presented to human operators for further analysis and action. In either case, the models detect patterns or anomalies, and those insights are translated into actionable recommendations for your team. A feedback loop, in which the outcomes of decisions are fed back into model training, enables continuous model improvement.
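The decision step and the feedback loop can be sketched as a small router. The two thresholds below are illustrative assumptions, not recommended values: high-confidence scores trigger an automated action, mid-range scores go to a human operator, and confirmed outcomes are stored as labeled examples for the next round of model retraining.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class DecisionRouter:
    auto_threshold: float = 0.9    # at or above: act automatically
    review_threshold: float = 0.5  # at or above (but below auto): ask a human
    feedback: List[Tuple[str, float, bool]] = field(default_factory=list)

    def route(self, score: float) -> str:
        if score >= self.auto_threshold:
            return "auto_action"   # e.g. raise a fraud alert or apply a price change
        if score >= self.review_threshold:
            return "human_review"  # present to an operator for analysis
        return "no_action"

    def record_outcome(self, event_id: str, score: float, confirmed: bool) -> None:
        """Feedback loop: keep the confirmed outcome as a labeled example for retraining."""
        self.feedback.append((event_id, score, confirmed))


router = DecisionRouter()
for event_id, score in [("evt-1", 0.95), ("evt-2", 0.62), ("evt-3", 0.10)]:
    print(event_id, router.route(score))  # auto_action, human_review, no_action
router.record_outcome("evt-2", 0.62, confirmed=True)  # operator confirmed the alert
```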

To learn more about using Striim for real-time data processing for machine learning, check out this guide. You’ll discover how to connect to the source database (PostgreSQL in the example), create Striim Continuous Query (CQ) adapters, attach a CQ to the BigQuery Writer adapter, and execute the CDC data pipeline to replicate the data to BigQuery. From there, you can build a BigQuery ML model.

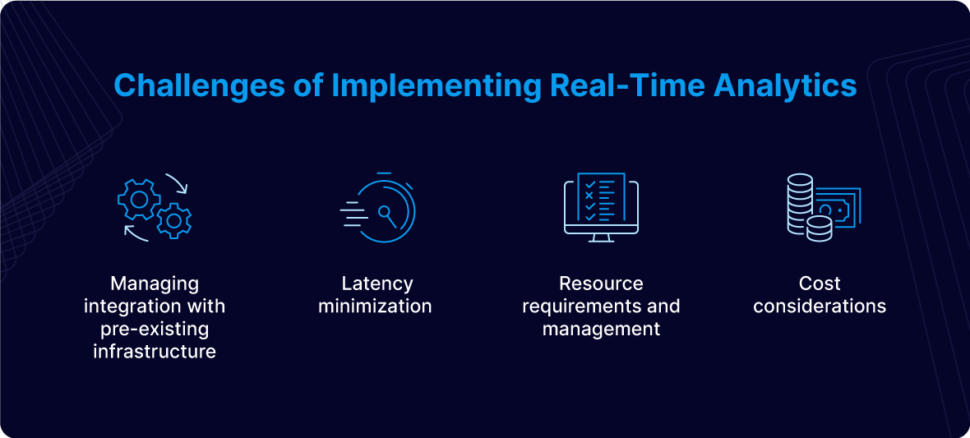

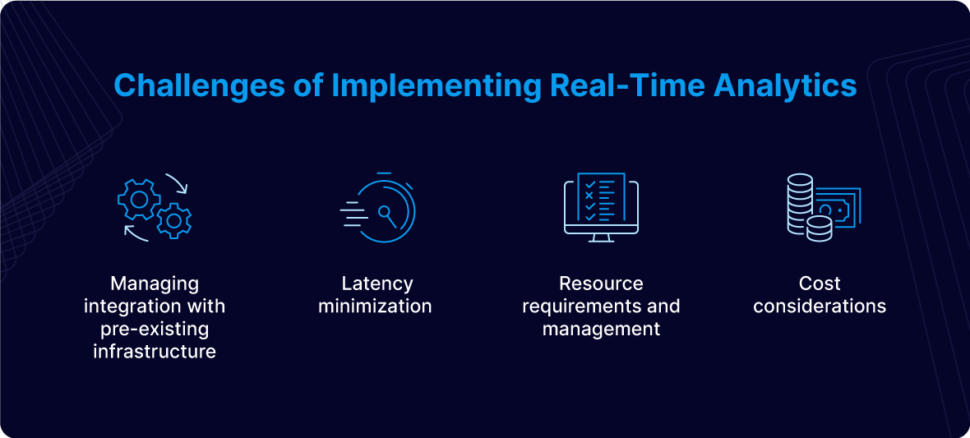

Challenges of Implementing Real-Time Analytics

While real-time ML analytics offer your business significant benefits, implementation can be challenging at first. By gaining awareness of what issues may arise, you’re better equipped to proactively handle any situations that come your way.

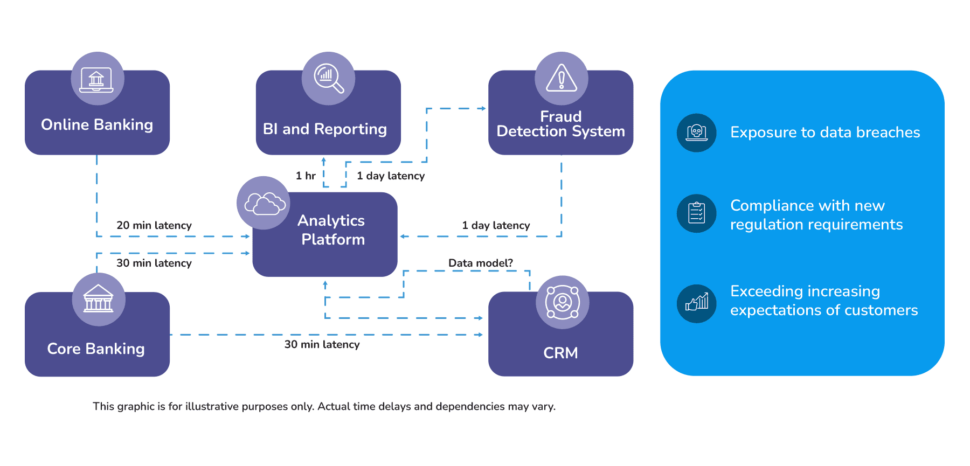

- Managing integration with pre-existing infrastructure: Integrating real-time analytics with existing IT architecture often requires modifications. Legacy systems may not be designed for high-throughput data streams, necessitating updates or replacements. Ensuring seamless integration involves reconfiguring data pipelines, updating APIs, and sometimes overhauling entire segments of the IT infrastructure to support continuous data flow and processing.

- Latency minimization: Achieving low latency is critical for real-time analytics, requiring optimization across several components. High-speed network infrastructure minimizes data transmission delays, while efficient algorithms and parallel processing techniques reduce computational delays. Additionally, using in-memory databases or fast SSD storage expedites data retrieval and writing operations, further reducing overall latency.

- Resource requirements and management: Real-time analytics demand substantial computational resources. High processing power, memory, and storage are required to handle continuous data streams. Effective resource management includes auto-scaling capabilities to dynamically allocate resources based on demand, ensuring performance while controlling costs.

- Cost considerations: The financial aspect of implementing real-time analytics can be significant. Initial setup costs include purchasing hardware, software licenses, and possibly cloud services. Ongoing maintenance costs cover updates, scaling, and monitoring infrastructure. Cost optimization strategies such as utilizing cloud services with pay-as-you-go models or leveraging open-source tools can help manage expenses.
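The auto-scaling idea from the resource-management point above can be sketched with the proportional rule that Kubernetes documents for its Horizontal Pod Autoscaler: scale the replica count by the ratio of the observed metric to its target, rounding up. The minimum and maximum bounds are assumptions added here to cap cost; the real autoscaler also applies a tolerance band and stabilization windows, which this sketch omits.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Proportional scaling: desired = ceil(current * current_metric / target_metric),
    clamped to [min_replicas, max_replicas]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    desired = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, desired))


# Example metric: average CPU utilization (%) per replica, with a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))    # 6 (scale out)
print(desired_replicas(4, current_metric=20, target_metric=60))    # 2 (scale in, save cost)
print(desired_replicas(10, current_metric=300, target_metric=60))  # 20 (capped at max)
```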

Integrating ML Algorithms into Your Business’s Data Strategy

To fully reap the benefits of real-time ML analytics and gain a competitive advantage, you’ll have to integrate ML algorithms into your data strategy from the start. Here’s how to achieve this.

Start by aligning your technical efforts with your main business objectives, whether the focus is on refining customer experiences, optimizing operational efficiency, or fine-tuning product recommendations. This ensures that integrating ML is not merely a technical pursuit but a deliberate effort to deliver meaningful impact and drive ROI.

Improving data quality is fundamental to your data strategy and involves detailed data assessment and preprocessing. These steps ensure that the data used for model training is not only plentiful but also reliable, laying the groundwork for accurate insights. At the core of the integration is algorithm selection and optimization: finding the right balance between efficiency and accuracy so that models extract insights that affect the bottom line.

Scalable model training lets your models keep pace with growing data volumes and business demands. Automating deployment and integrating it with DevOps practices (often called MLOps) streamlines model rollout and ongoing maintenance. Addressing bias is also important, so that model outcomes are ethical, equitable, and consistent with your business values.

Empowering teams with comprehensive training bridges the gap between data science methods and business knowledge. Continuous model improvement, for example through regular retraining on fresh data (or, in some applications, reinforcement learning), helps models adapt to changing business environments. Real-time monitoring and KPIs provide a practical view of the impact on business outcomes and ROI. Finally, iterative scaling and optimization keep the integration not just technically efficient but also cost-effective.

Whatever your objectives for integrating ML algorithms into your organization’s data strategy, keep your technical efforts aligned with your primary business goals. That alignment helps ensure you are using ML effectively to support your team’s decision-making. We recommend looking at your data strategy holistically to make sure ML algorithms fit effectively into your workflow.

How to Use Real-Time ML Analytics to Make Better Business Decisions

Ready to learn how to use real-time ML analytics to make better, faster business decisions? We’ll walk you through everything you need to know.

Gain access to real-time predictive analytics.

One of the best ways to improve your decision-making process is by gaining access to predictive analytics. Striim’s Slack and Teams alert connectors complement predictive analytics with real-time alerts, which streamlines communication and helps critical insights reach the right teams as soon as they emerge.

With predictive analytics, your team can use ML algorithms to analyze data as soon as it is generated. This lets your organization anticipate events before they occur and take proactive steps to improve the likelihood of a desired outcome. Some algorithms can also update their model parameters incrementally as fresh data arrives, which helps keep predictions accurate and relevant even in highly dynamic environments.
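One common way to update model parameters as fresh data arrives is online learning with stochastic gradient descent. The sketch below is a hypothetical, minimal logistic regression that takes one gradient step on the log loss per labeled event; the simulated stream is invented for illustration, and production systems typically rely on a library and add safeguards such as learning-rate schedules and drift checks.

```python
import math
from typing import Sequence


class OnlineLogisticRegression:
    """Logistic regression whose weights are updated one labeled event at a time."""

    def __init__(self, n_features: int, learning_rate: float = 0.1) -> None:
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = learning_rate

    def predict_proba(self, x: Sequence[float]) -> float:
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        if z >= 0:  # numerically stable sigmoid
            return 1.0 / (1.0 + math.exp(-z))
        e = math.exp(z)
        return e / (1.0 + e)

    def learn_one(self, x: Sequence[float], y: int) -> None:
        # For log loss, the gradient w.r.t. each weight is (p - y) * x_i.
        error = self.predict_proba(x) - y
        self.w = [wi - self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * error


model = OnlineLogisticRegression(n_features=1)
# Simulated stream: the label is 1 whenever the single feature is positive.
stream = [([1.0], 1), ([-1.0], 0)] * 200
for x, y in stream:
    model.learn_one(x, y)  # the model can serve predictions between any two updates

print(model.predict_proba([1.0]) > 0.9, model.predict_proba([-1.0]) < 0.1)  # True True
```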

Leverage continuous performance monitoring.

If you’ve ever wished you could detect an anomaly the moment it occurs, real-time ML analytics makes that possible. When your team integrates ML analytics into your existing performance monitoring systems, you gain continuous insight into key metrics and performance indicators.

From there, you can make proactive adjustments. Ultimately, this provides your team with the resources necessary to rapidly make the right decisions for your business.
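A simple baseline for spotting anomalies in a live metric is a rolling z-score: compare each new value with the mean and standard deviation of a recent window. This is a statistical stand-in rather than a learned model, and the window size, threshold, and response-time values below are assumptions for illustration; ML-based detectors generally follow the same observe-then-flag pattern.

```python
import statistics
from collections import deque


class RollingAnomalyDetector:
    """Flag a value that lies more than `threshold` standard deviations from the
    mean of the last `window` values."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_history: int = 5) -> None:
        self.history = deque(maxlen=window)  # oldest values drop off automatically
        self.threshold = threshold
        self.min_history = max(min_history, 2)  # stdev needs at least two values

    def observe(self, value: float) -> bool:
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mean = statistics.fmean(self.history)
            std = statistics.stdev(self.history)
            if std > 0 and abs(value - mean) / std > self.threshold:
                is_anomaly = True
        self.history.append(value)  # simplification: anomalies also enter the window
        return is_anomaly


detector = RollingAnomalyDetector()
response_ms = [100, 102, 98, 101, 99, 100, 103, 97, 400]  # hypothetical latencies
print([detector.observe(v) for v in response_ms])  # only the final 400 ms reading is flagged
```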

Offer personalized recommendations in real time.

Whether you’re in the retail/CPG space, healthcare, or otherwise, you know how crucial personalized recommendations are. Now, you can use advanced algorithms to offer customized product or content suggestions that enhance the customer experience. This can boost engagement, improve the likelihood of conversions, and increase customer loyalty.
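As a lightweight illustration of recommendations that update in real time, the following sketch counts which items appear together in the same session (for example, the same shopping cart) and recommends the most frequent companions. It is a simple co-occurrence heuristic rather than a full recommender model, and the item names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List


class CoOccurrenceRecommender:
    def __init__(self) -> None:
        # item -> Counter of other items seen in the same session
        self.co_counts: Dict[str, Counter] = defaultdict(Counter)

    def update(self, session_items: Iterable[str]) -> None:
        """Fold one new session into the counts; recommendations reflect it immediately."""
        items = set(session_items)
        for a in items:
            for b in items:
                if a != b:
                    self.co_counts[a][b] += 1

    def recommend(self, item: str, k: int = 3, exclude: Iterable[str] = ()) -> List[str]:
        excluded = set(exclude)
        ranked = self.co_counts.get(item, Counter()).most_common()
        return [other for other, _ in ranked if other not in excluded][:k]


rec = CoOccurrenceRecommender()
rec.update(["laptop", "mouse", "laptop bag"])
rec.update(["laptop", "mouse"])
rec.update(["phone", "phone case"])
print(rec.recommend("laptop"))  # ['mouse', 'laptop bag']
```

The `exclude` parameter lets a caller drop items the customer already has in the cart.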

Predict customer churn and act accordingly beforehand.

Let’s circle back to real-time ML analytics for predictive purposes. Did you know that you can also use it to predict customer churn and take action before it occurs? Instead of reacting after customers leave, ML analytics puts your business in the most desirable position possible: being able to act proactively. This allows you to make decisions that help safeguard your existing customers and garner new interest, too.

Optimize your supply chain more seamlessly.

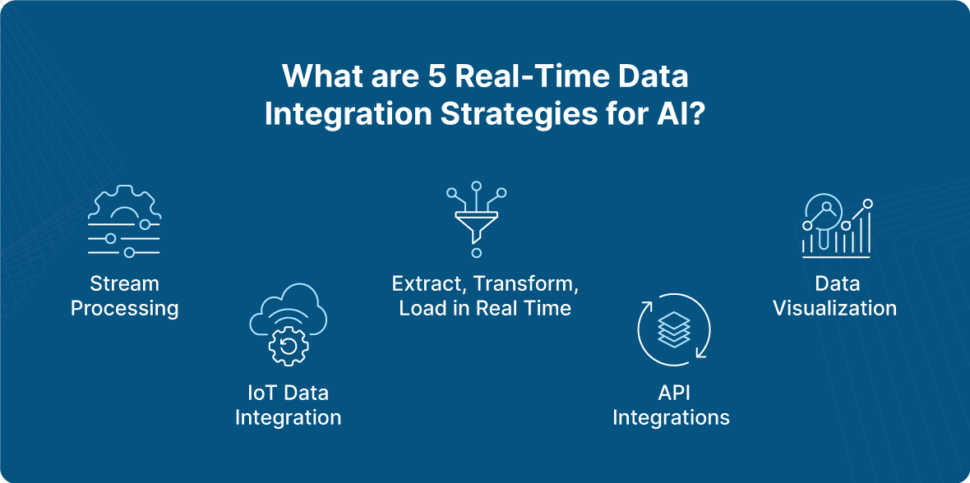

In the past, supply chain optimization was a cumbersome, clunky process. Not anymore. With real-time ML analytics, you can let the data do the heavy lifting for you. Your team can now analyze data from multiple sources, such as inventory levels, demand forecasts, and even transportation routes.

With the help of predictive algorithms, you can anticipate fluctuations in demand and flag potential disruptions. From there, you can work to resolve issues before they arise, again putting your business in a better position to succeed.
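A minimal sketch of the forecast-then-flag idea uses simple exponential smoothing for demand and compares projected demand over the restocking lead time with current inventory. The demand figures, inventory level, and lead time are hypothetical. Simple exponential smoothing lags behind steadily trending demand (here the forecast of 142.5 trails the latest 160 units), which is why methods such as Holt’s linear trend method add an explicit trend term.

```python
from typing import Sequence


def exponential_smoothing_forecast(demand: Sequence[float], alpha: float = 0.5) -> float:
    """Simple exponential smoothing: next-period forecast from past demand.

    Each step blends the newest observation (weight alpha) with the previous forecast.
    """
    if not demand:
        raise ValueError("need at least one observation")
    forecast = demand[0]
    for observed in demand[1:]:
        forecast = alpha * observed + (1 - alpha) * forecast
    return forecast


def stockout_risk(inventory: float, forecast_per_period: float, lead_time_periods: int) -> bool:
    """Flag a potential disruption if forecast demand before restocking exceeds current stock."""
    return forecast_per_period * lead_time_periods > inventory


daily_units = [100, 120, 140, 160]  # hypothetical daily demand for one SKU
forecast = exponential_smoothing_forecast(daily_units)
print(forecast)  # 142.5
print(stockout_risk(inventory=400, forecast_per_period=forecast, lead_time_periods=3))  # True
```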

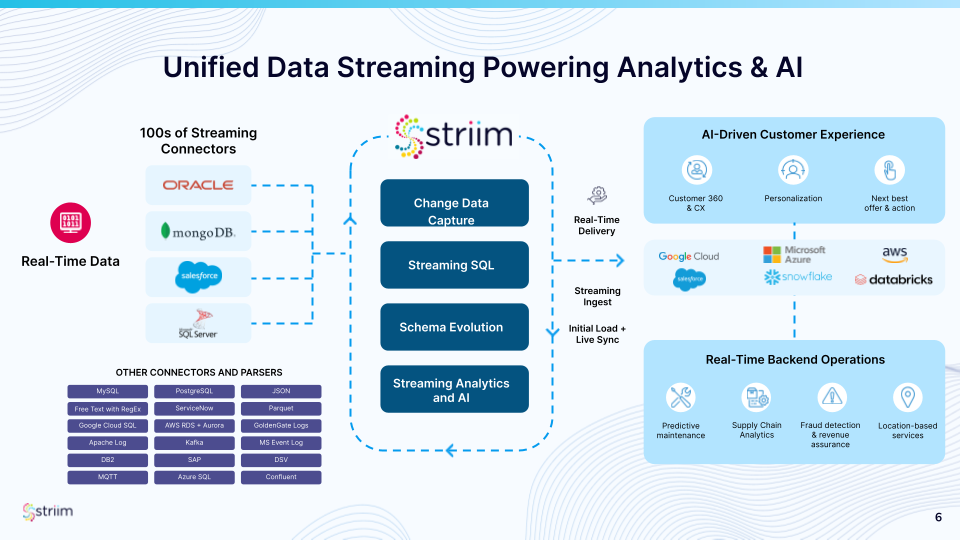

Striim Empowers Real-Time ML Analytics

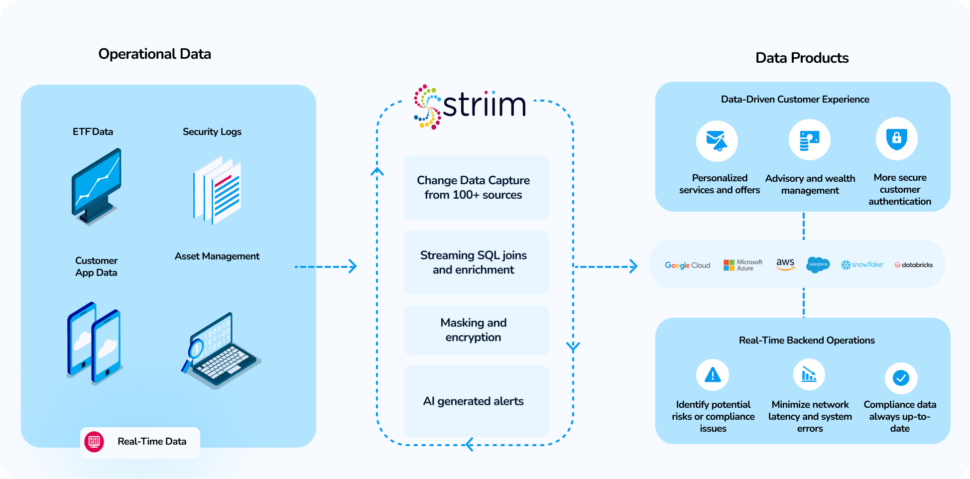

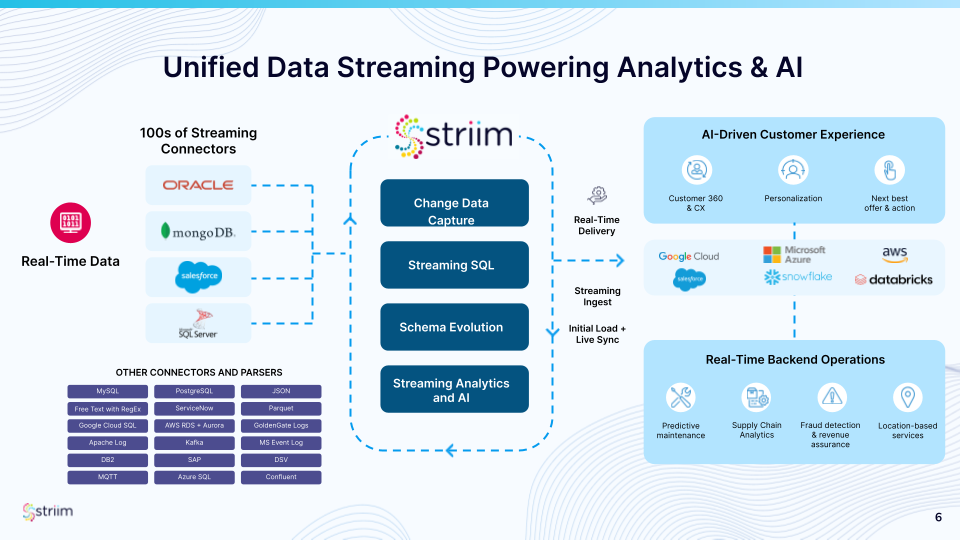

Striim enhances real-time machine learning analytics with advanced capabilities like Change Data Capture (CDC) for low-latency data ingestion from diverse sources, and Streaming SQL for on-the-fly data transformation and feature engineering. It handles schema changes seamlessly, keeping data pipelines running without interruption. Striim integrates with major cloud platforms (Google Cloud, Azure, AWS, Snowflake, Databricks) for scalable deployment, and its wide range of connectors provides a unified data stream for accurate analytics. Striim’s continuous data synchronization and high-throughput ingestion enable real-time insights for applications such as predictive maintenance, supply chain optimization, fraud detection, and personalized customer experiences.

How Do Companies Use AI & ML in the Real World?

Wondering what this looks like in practice? We’ve got you covered. Here are some examples of how companies use AI and ML in their business practices to unlock a new level of success.

Manufacturing

For manufacturing companies, the insights that ML algorithms provide are hard to replace. ML algorithms, typically fed by data from IoT devices or sensors, can continuously monitor equipment health. This enables them to anticipate failures, which minimizes downtime and boosts productivity. As a result, businesses can make better-informed decisions about maintenance.
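One simple way to anticipate a failure from sensor data is to fit a trend line to recent readings and estimate when it will cross a failure threshold. The sketch below uses ordinary least squares on hypothetical vibration readings taken once per period, with an assumed threshold. Real predictive-maintenance models are usually richer (multiple sensors, nonlinear degradation), so treat this as an illustration of the idea only.

```python
from typing import Optional, Sequence


def periods_until_threshold(readings: Sequence[float], threshold: float) -> Optional[float]:
    """Estimate how many periods remain until a linear trend reaches `threshold`.

    Returns None when there is too little data or the readings are not rising.
    """
    n = len(readings)
    if n < 2:
        return None
    mean_x = (n - 1) / 2  # x values are 0, 1, ..., n-1
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(readings))
    slope = sxy / sxx
    if slope <= 0:
        return None  # flat or improving: no projected crossing
    intercept = mean_y - slope * mean_x
    crossing_x = (threshold - intercept) / slope
    return max(0.0, crossing_x - (n - 1))  # periods after the latest reading


vibration = [10.0, 12.0, 14.0, 16.0, 18.0]  # hypothetical readings, one per period
print(periods_until_threshold(vibration, threshold=30.0))  # 6.0
```

A maintenance scheduler could then book a service window before the estimated crossing rather than waiting for the failure itself.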

Healthcare

With the assistance of ML models, especially in medical imaging, healthcare providers can identify subtle patterns that indicate disease. Furthermore, by using predictive analytics, providers can anticipate the likely trajectory of a patient’s health, which helps streamline the creation of personalized treatment plans.

Finance

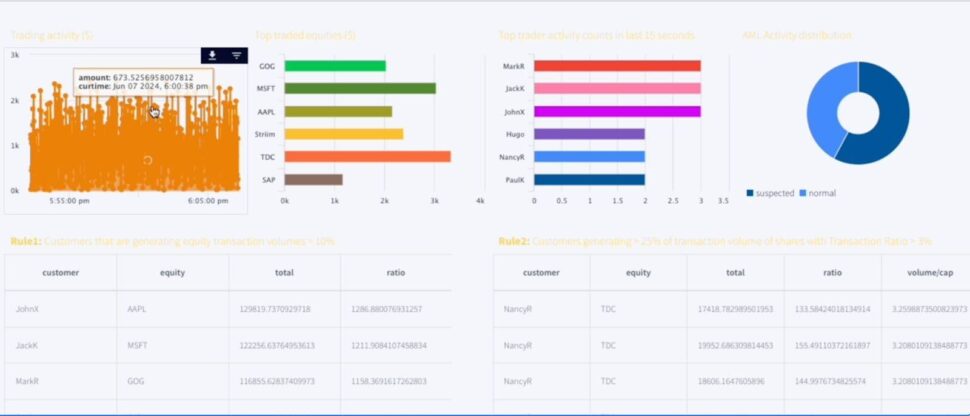

In the finance industry, timely fraud detection is critical. Now, teams can utilize ML algorithms that learn from historical data to identify patterns associated with fraudulent activity. Thanks to real-time monitoring, financial institutions can better detect anomalies and make timely decisions to react to suspected fraud.
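Many real-time fraud features are computed over sliding windows. As one example, the sketch below counts how many transactions each card has made in the last 60 seconds, a "velocity" signal that a fraud model or a simple rule can consume. The window length and card IDs are hypothetical, and the code assumes each card’s events arrive in timestamp order.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class VelocityTracker:
    """Count each card's transactions inside a sliding time window."""

    def __init__(self, window_seconds: float = 60.0) -> None:
        self.window = window_seconds
        self.events: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, card_id: str, ts: float) -> int:
        """Add a transaction and return the card's count within the window ending at ts."""
        timestamps = self.events[card_id]
        timestamps.append(ts)
        # Evict timestamps that are now older than the window.
        while timestamps and timestamps[0] <= ts - self.window:
            timestamps.popleft()
        return len(timestamps)


tracker = VelocityTracker(window_seconds=60)
counts = [tracker.record("card-123", t) for t in (0, 10, 20, 65)]
print(counts)  # [1, 2, 3, 3]
```

A downstream rule or model might treat an unusually high count as suspicious; the cutoff would be tuned on historical data rather than fixed in advance.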

Telecom

Yet another way to leverage data is within the telecom industry, where teams use AI and ML to predict customer churn. ML models consider factors including customer usage patterns, demographics, customer service interactions, and even billing history. These tools can identify customers who are at risk of churning, and companies can intercept them with targeted retention strategies. (Think: personalized offers or better customer support.)
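Once a churn model has produced a risk score per customer, deciding whom to contact is often a ranking problem under a limited retention budget. The sketch below assumes hypothetical scores, customer IDs, risk threshold, and budget, and selects the highest-risk customers with `heapq.nlargest`.

```python
import heapq
from typing import Dict, List


def retention_targets(churn_scores: Dict[str, float], threshold: float = 0.6,
                      budget: int = 2) -> List[str]:
    """Return up to `budget` customer IDs with churn risk >= threshold, highest risk first."""
    at_risk = ((cid, score) for cid, score in churn_scores.items() if score >= threshold)
    return [cid for cid, _ in heapq.nlargest(budget, at_risk, key=lambda item: item[1])]


# Hypothetical churn probabilities produced by a model from usage, support, and billing data.
scores = {"cust-1": 0.82, "cust-2": 0.35, "cust-3": 0.91, "cust-4": 0.64}
print(retention_targets(scores))  # ['cust-3', 'cust-1']
```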

Retail

In today’s highly volatile marketplace, leveraging dynamic pricing is critical for maintaining financial viability. Utilizing ML algorithms, your team can analyze a multitude of factors that influence pricing such as competitor rates, inventory levels, historical sales data, and customer behavior patterns. By enabling real-time price adjustments, these ML-driven insights empower your team to respond swiftly to market fluctuations, ultimately optimizing profitability.
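To illustrate how model outputs might feed real-time price adjustments, here is a deliberately simple, rule-based sketch. The demand ratio stands in for an ML demand forecast, and every coefficient, including the plus or minus 10% guardrail, is an assumption for illustration. In practice, the pricing response itself is often learned from historical sales data and tested carefully before rollout.

```python
def adjust_price(base_price: float, competitor_price: float,
                 demand_ratio: float, inventory_ratio: float,
                 max_change: float = 0.10) -> float:
    """Return an adjusted price from model signals (all coefficients are illustrative).

    demand_ratio:    forecast demand / typical demand (e.g. from an ML model)
    inventory_ratio: current stock / target stock
    """
    price = base_price
    price *= 1 + 0.05 * (demand_ratio - 1)     # stronger demand nudges the price up
    price *= 1 - 0.05 * (inventory_ratio - 1)  # excess stock nudges it down
    price = 0.8 * price + 0.2 * competitor_price  # stay anchored to the market
    # Guardrail: never move more than max_change away from the base price.
    lower, upper = base_price * (1 - max_change), base_price * (1 + max_change)
    return round(min(max(price, lower), upper), 2)


print(adjust_price(100.0, 100.0, demand_ratio=1.0, inventory_ratio=1.0))  # 100.0
print(adjust_price(100.0, 100.0, demand_ratio=1.4, inventory_ratio=0.8))  # 102.42
print(adjust_price(100.0, 70.0, demand_ratio=0.5, inventory_ratio=3.0))   # 90.0
```

The guardrail is the key design choice: even if a model signal is noisy, prices cannot swing outside a band the business has approved.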

Want to learn more about building an AI-driven data strategy? Download the whitepaper.

Striim Enables Your Business to Make Better Data-Driven Decisions

Ready to take your first steps towards utilizing real-time ML analytics to make better decisions for your business? Striim offers continuous data integration and ML-fueled analytics that streamline decision-making. Our platform helps your team leverage unprecedented insights and enables your organization to gain a competitive edge even in the most dynamic, rapidly changing environments. Ready to try it yourself? Get a free trial and discover how Striim can up-level your business decision-making process.