On-premises-to-cloud migration is the necessary first step to cloud adoption, which offers a fast lane to data infrastructure modernization, innovation, and the ability to rapidly transform business operations. But many companies still restrict themselves to using the cloud for non-critical projects, rather than mission-critical operations, out of concern over the difficulties and the risks of migration. Are you one of them? If so, read on to discover a new approach that addresses critical data migration challenges.

Common Risks of On-Premises-to-Cloud Migration

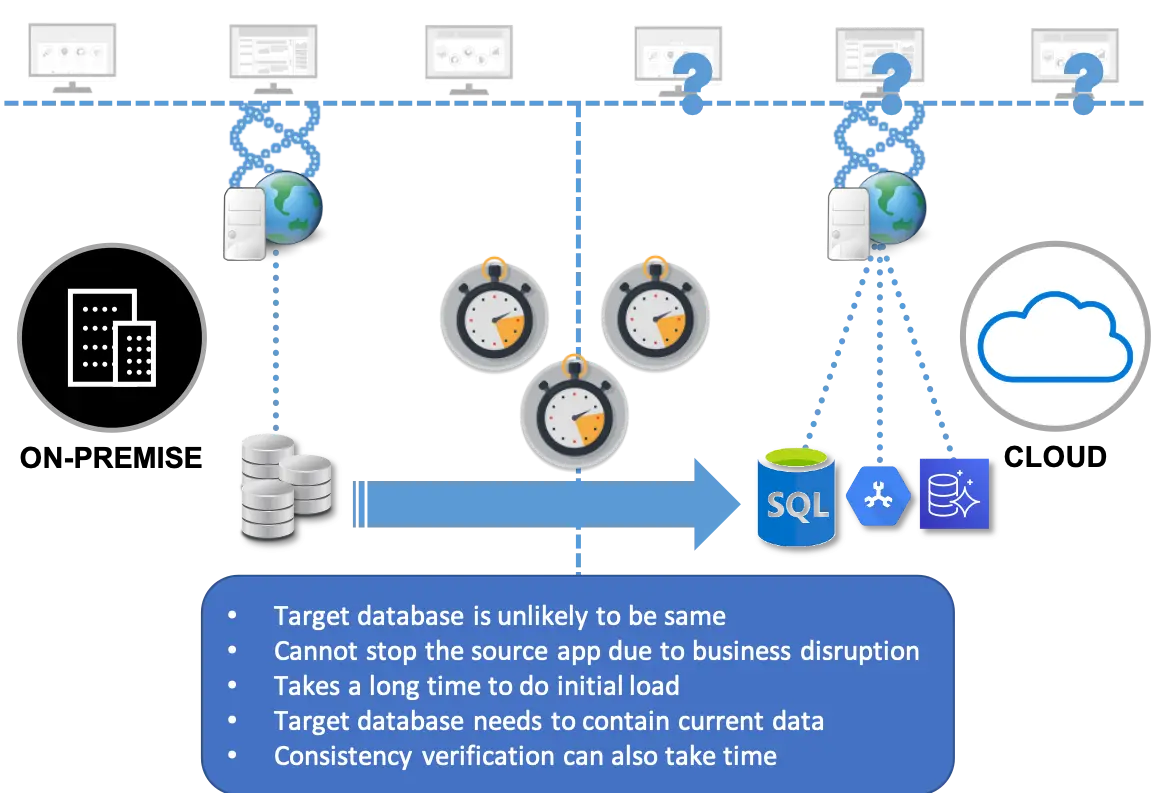

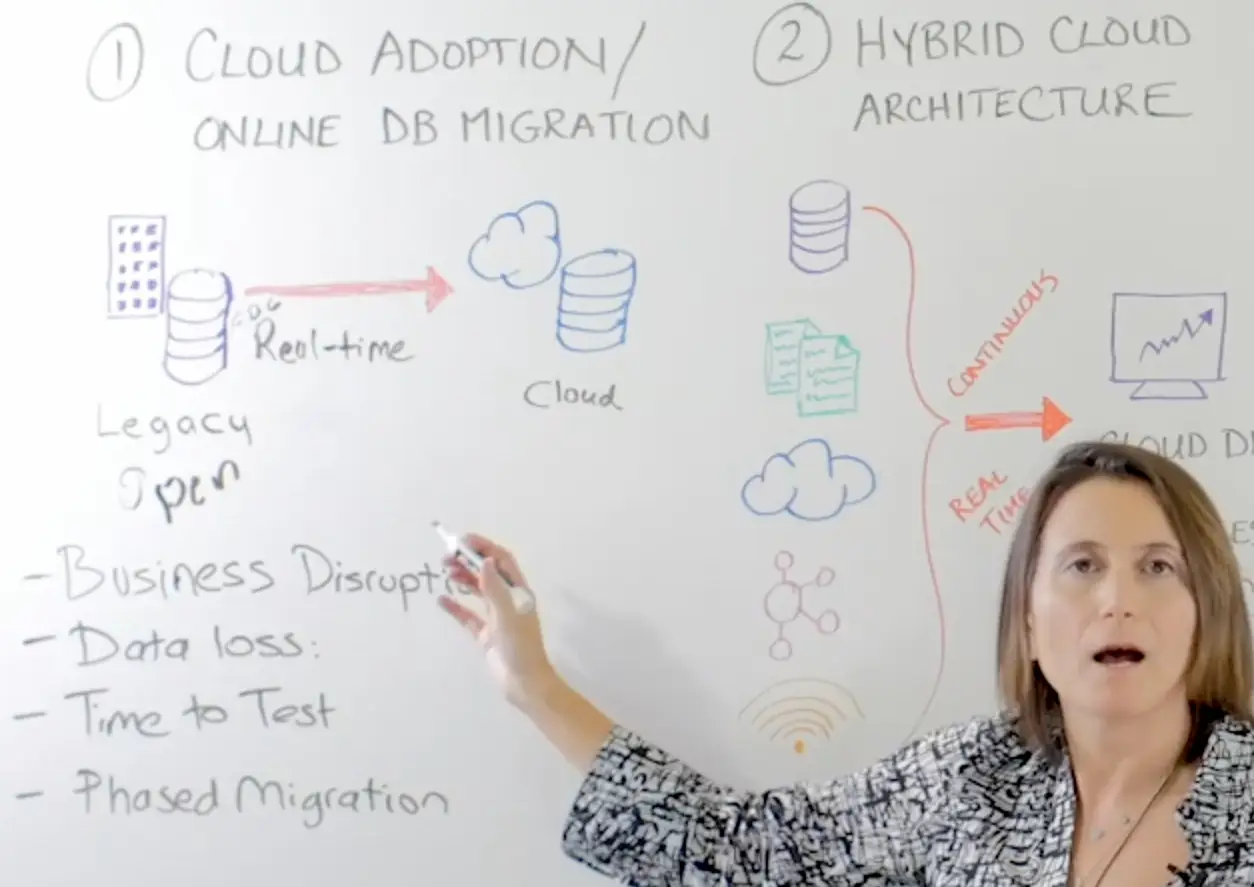

A major component of the cloud migration effort is data migration from existing legacy databases. Many data migration solutions require you to lock the legacy database after a snapshot is taken so that its state stays consistent with that snapshot.

Depending on the size of the database, network bandwidth, and required transformations, the whole process of loading the data to the cloud, restoring the database, and testing the new system can take days, weeks, or even months. I am not aware of any digital business that would accept locking the databases that support its critical operations for that long.
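To get a feel for the transfer portion of that timeline, here is a rough back-of-the-envelope sketch. The function name `transfer_hours` and the 70% link utilization figure are assumptions for illustration only, and the estimate ignores transformations, restore time, and testing.

```python
def transfer_hours(size_tb: float, bandwidth_gbps: float, utilization: float = 0.7) -> float:
    """Estimate hours needed to move size_tb terabytes over a link.

    utilization is an assumed fraction of the nominal bandwidth that the
    transfer actually achieves; 0.7 is an illustrative guess, not a measurement.
    """
    bits_to_move = size_tb * 1e12 * 8                      # decimal terabytes to bits
    effective_bits_per_second = bandwidth_gbps * 1e9 * utilization
    return bits_to_move / effective_bits_per_second / 3600


# A 10 TB database over a 1 Gbps link: roughly 31.7 hours of pure transfer time.
print(round(transfer_hours(10, 1.0), 1))

# A 50 TB database over a 100 Mbps link: roughly 66 days.
print(round(transfer_hours(50, 0.1) / 24, 1))
```

Even under these optimistic assumptions, the raw copy alone can run from more than a day to a couple of months, before any restore or testing work begins.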

In addition, you run the risk of having a database with an inconsistent state after the migration process. Some solutions might lose data in transit because of a process failure or network outage. Or the data might not be applied to the target system in the right transactional order. As a result, your cloud database winds up diverging from the source legacy system.

To ensure that the new environment is stable, you have to test the new system thoroughly before moving all your users over. Time pressures to minimize downtime can lead to rushed testing, which in turn results in an unstable cloud environment after you do a big bang switchover. Certainly, not the goal of your modernization effort!

With all these risks and disruptions to operations, it is no wonder that the systems that should move to the cloud first, because they can have the greatest positive impact on business transformation, end up being de-prioritized in favor of less risky migrations. As a result, your organization may fail to extract the full value from its cloud investment and may slow its own innovation and modernization.

Mitigating the Risks of On-Premises-to-Cloud Migration

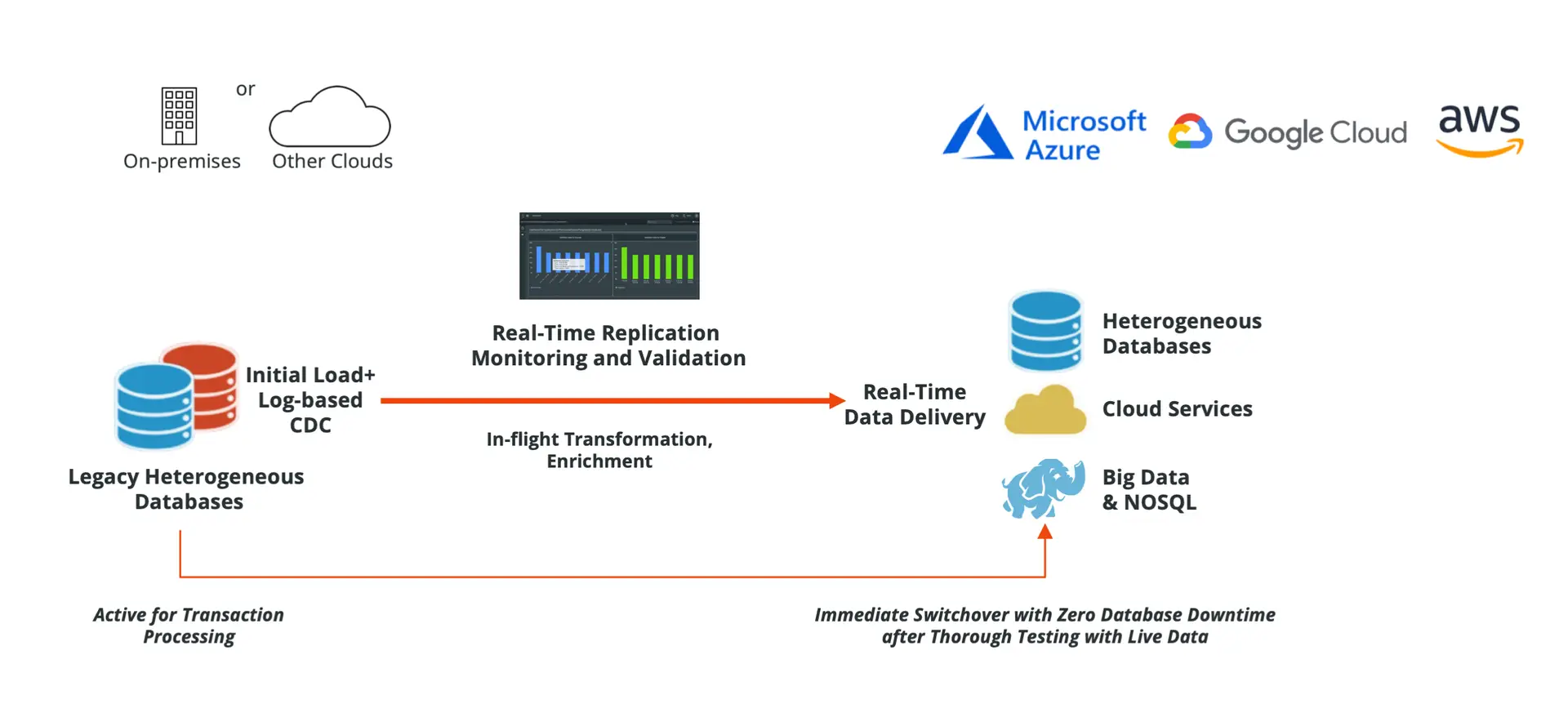

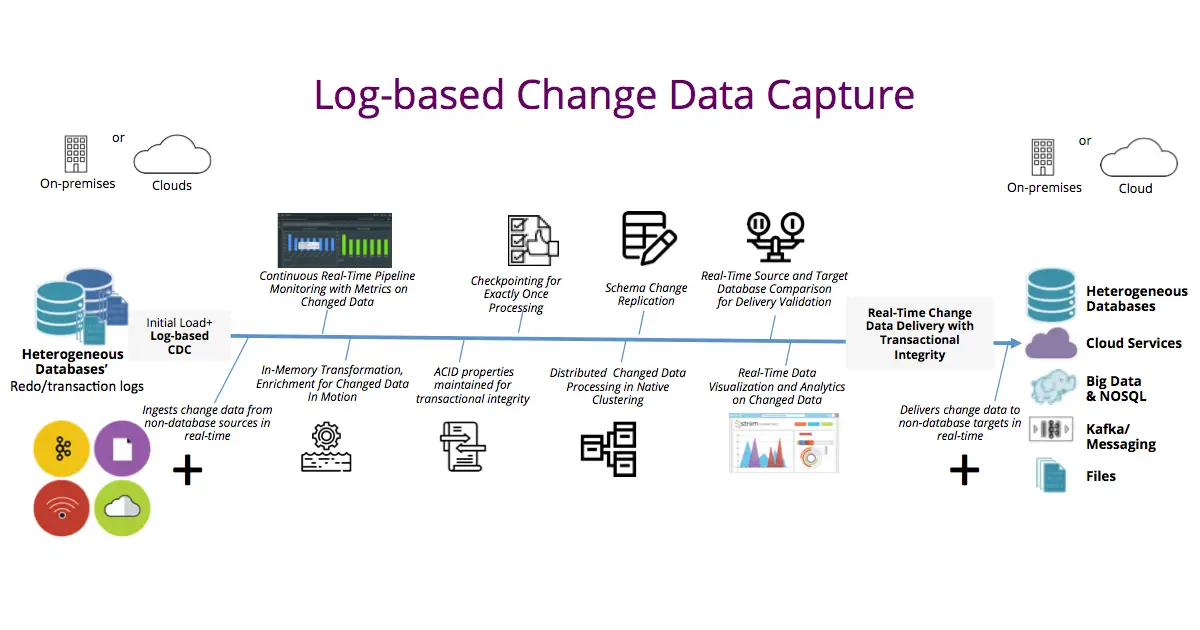

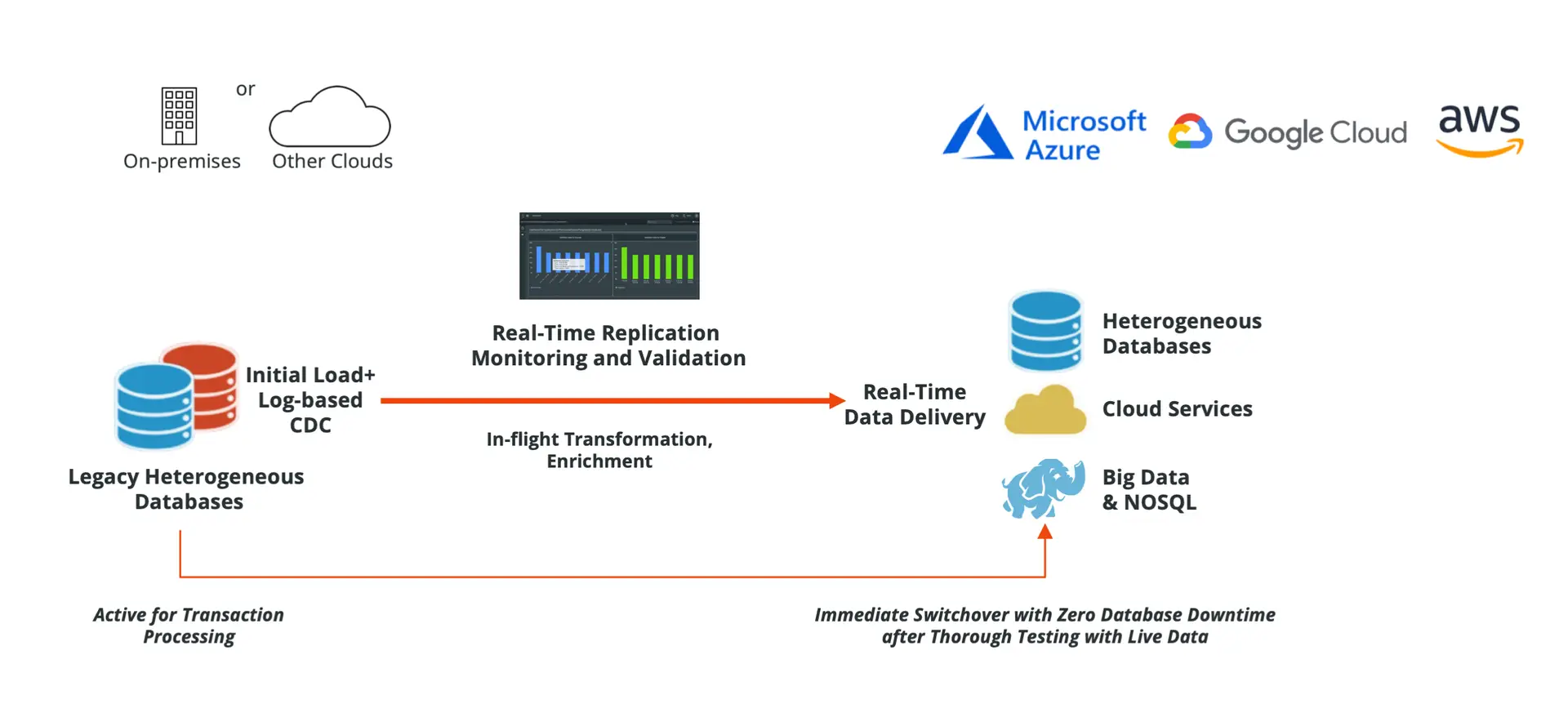

Here comes the good news that I love sharing: today, newer and more sophisticated streaming data integration with change data capture technology minimizes the disruptions and risks mentioned earlier. This solution combines an initial batch load with real-time change data capture (CDC) and delivery capabilities.

As the system performs the bulk load, the CDC component collects the changes in real time as they occur. As soon as the initial load is complete, the system applies the collected changes to the target environment to keep the legacy and cloud databases consistent.
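The interplay between the bulk load and CDC can be sketched with a toy in-memory model. This is a minimal illustration, not Striim's implementation: the `Change` record, the `seq` commit-order field, and the `migrate` function are hypothetical names, and plain dictionaries stand in for the source snapshot and the cloud target.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Change:
    seq: int                     # position in the source's commit order (assumed field)
    op: str                      # "insert", "update", or "delete"
    key: str
    value: Optional[str] = None


def apply_change(target: dict, change: Change) -> None:
    """Apply one captured change to the target."""
    if change.op == "delete":
        target.pop(change.key, None)
    else:                        # insert and update both set the latest value
        target[change.key] = change.value


def migrate(snapshot: dict, captured: list) -> dict:
    """Bulk-load the snapshot, then replay captured changes in commit order."""
    target = dict(snapshot)      # stands in for the initial bulk load
    for change in sorted(captured, key=lambda c: c.seq):
        apply_change(target, change)
    return target


# Source state at the moment the bulk load started.
snapshot = {"order-1": "new", "order-2": "new"}

# Changes committed on the source while the bulk load was still running.
captured = [
    Change(seq=1, op="update", key="order-1", value="shipped"),
    Change(seq=2, op="insert", key="order-3", value="new"),
    Change(seq=3, op="delete", key="order-2"),
]

target = migrate(snapshot, captured)
print(target)  # {'order-1': 'shipped', 'order-3': 'new'}
```

The source never had to stop accepting writes: the changes made during the load were simply queued and replayed afterward, in the order they were committed.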

Let’s review how the streaming data integration approach tackles each of these risks that delay your business in getting the fullest benefits from your cloud investments.

Eliminating Database Downtime

Combining bulk load with CDC removes the need to pause the legacy database. During the bulk load process, your database stays open to new transactions. These transactions are captured as they occur and applied to the target as soon as the bulk load is complete, keeping the two systems in sync.

The only downtime for the migration process occurs during the application switchover process. Therefore, this configuration enables zero database downtime during on-premises-to-cloud migration.

Avoiding Data Loss

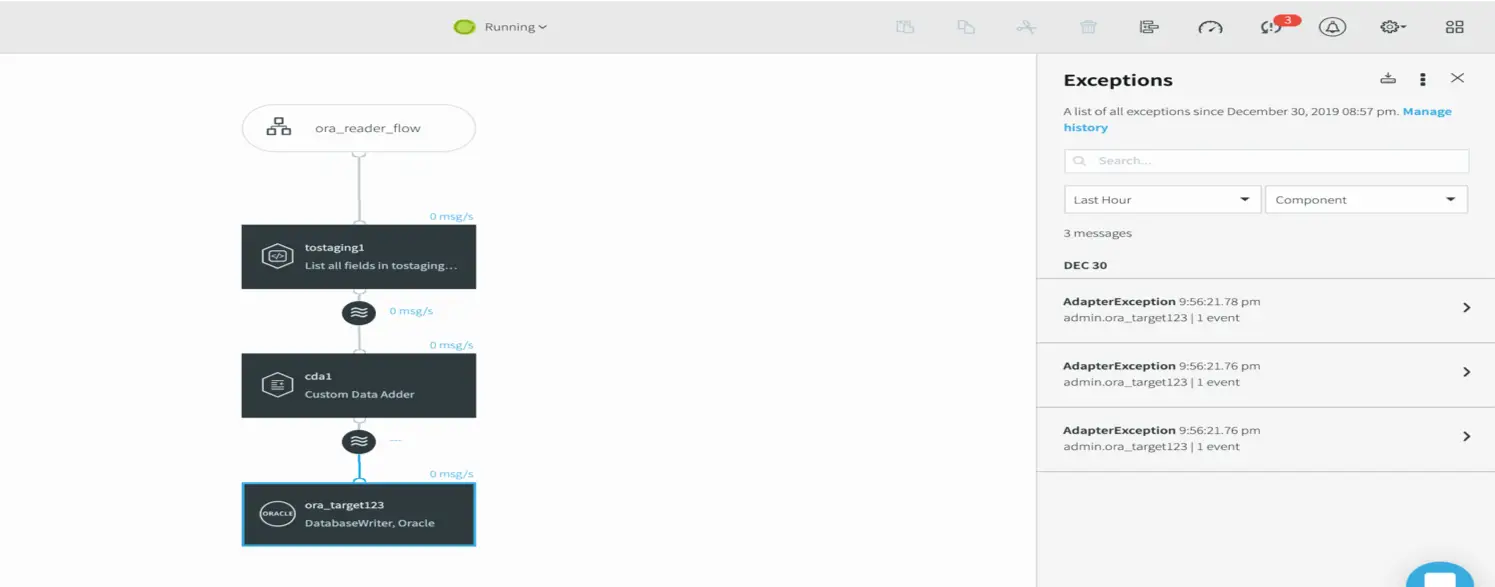

To prevent data loss throughout the data migration process, streaming data integration tracks data movement and processing. Striim’s streaming data integration platform provides delivery validation that all your data has been moved to the target.
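One generic way to think about delivery validation is to compare a row count and an order-independent checksum on both sides. The sketch below is an assumption-laden illustration of that idea, not a description of how Striim validates delivery; `table_fingerprint` and `validate_delivery` are hypothetical helper names, and rows are modeled as simple `(key, value)` string pairs.

```python
import hashlib


def table_fingerprint(rows):
    """Return (row_count, sha256_hex) for (key, value) rows, ignoring row order."""
    digest = hashlib.sha256()
    count = 0
    for key, value in sorted(rows):          # sort so physical row order does not matter
        digest.update(repr((key, value)).encode("utf-8"))
        count += 1
    return count, digest.hexdigest()


def validate_delivery(source_rows, target_rows) -> bool:
    """True when both sides hold the same rows with the same values."""
    return table_fingerprint(source_rows) == table_fingerprint(target_rows)


source_rows = [("cust-1", "alice"), ("cust-2", "bob"), ("cust-3", "carol")]
complete_target = [("cust-3", "carol"), ("cust-1", "alice"), ("cust-2", "bob")]
lossy_target = [("cust-1", "alice"), ("cust-2", "bob")]

print(validate_delivery(source_rows, complete_target))  # True
print(validate_delivery(source_rows, lossy_target))     # False
```

In practice a check like this would run per table or per key range, so that a mismatch points to a small region to re-sync rather than to the whole database.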

Also, with built-in exactly-once processing (E1P), the software platform can avoid duplicate data. Striim’s CDC offering is designed to maintain transactional integrity (i.e., ACID properties) during real-time data movement, so the target database remains consistent with the source.
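A common way to get an exactly-once effect on the target is to make the apply step idempotent with a checkpoint, so that redelivered changes are skipped. The following is a minimal sketch of that general technique and not Striim's E1P mechanism; the `IdempotentApplier` class and the per-change sequence number are assumptions, and a real system would persist the checkpoint in the same transaction as the data.

```python
class IdempotentApplier:
    """Applies changes in order and ignores any change it has already applied."""

    def __init__(self):
        self.rows = {}
        self.last_applied_seq = 0     # checkpoint (assumed to be stored atomically with the data)

    def apply(self, seq: int, key: str, value) -> bool:
        """Apply one change; return False if it was a duplicate delivery."""
        if seq <= self.last_applied_seq:
            return False              # already applied, for example replayed after a retry
        self.rows[key] = value
        self.last_applied_seq = seq
        return True


applier = IdempotentApplier()

# Change 2 is delivered twice and change 1 is replayed after a simulated outage.
deliveries = [
    (1, "balance", 100),
    (2, "balance", 150),
    (2, "balance", 150),
    (1, "balance", 100),
    (3, "balance", 120),
]
applied = [applier.apply(seq, key, value) for seq, key, value in deliveries]

print(applied)        # [True, True, False, False, True]
print(applier.rows)   # {'balance': 120}
```

Without the checkpoint, the replayed first change would have overwritten the balance with a stale value, which is exactly the kind of divergence described earlier.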

Thorough Testing Without Time Limitation

Because CDC keeps up with the transactions happening in the legacy system both during and after the initial load, your team can take the time it needs to thoroughly test the new system before moving users. Having live production data in the cloud database, combined with unlimited testing time, provides the comprehensive assessments and assurances that many mission-critical systems need for such a significant transition.

Fallback Option

After the switchover, reverse real-time data movement from the cloud database back to the legacy database keeps the legacy system up to date with the new transactions taking place in the cloud. In short, if necessary, you have a fallback option to put everyone back on the old system while you troubleshoot any issues in the new one.

During this troubleshooting and retesting time, the CDC process can be set up to collect the new transactions happening in the legacy database to bring the cloud database to a consistent state with the legacy system. You can point the application to the cloud database, once again, after testing thoroughly.

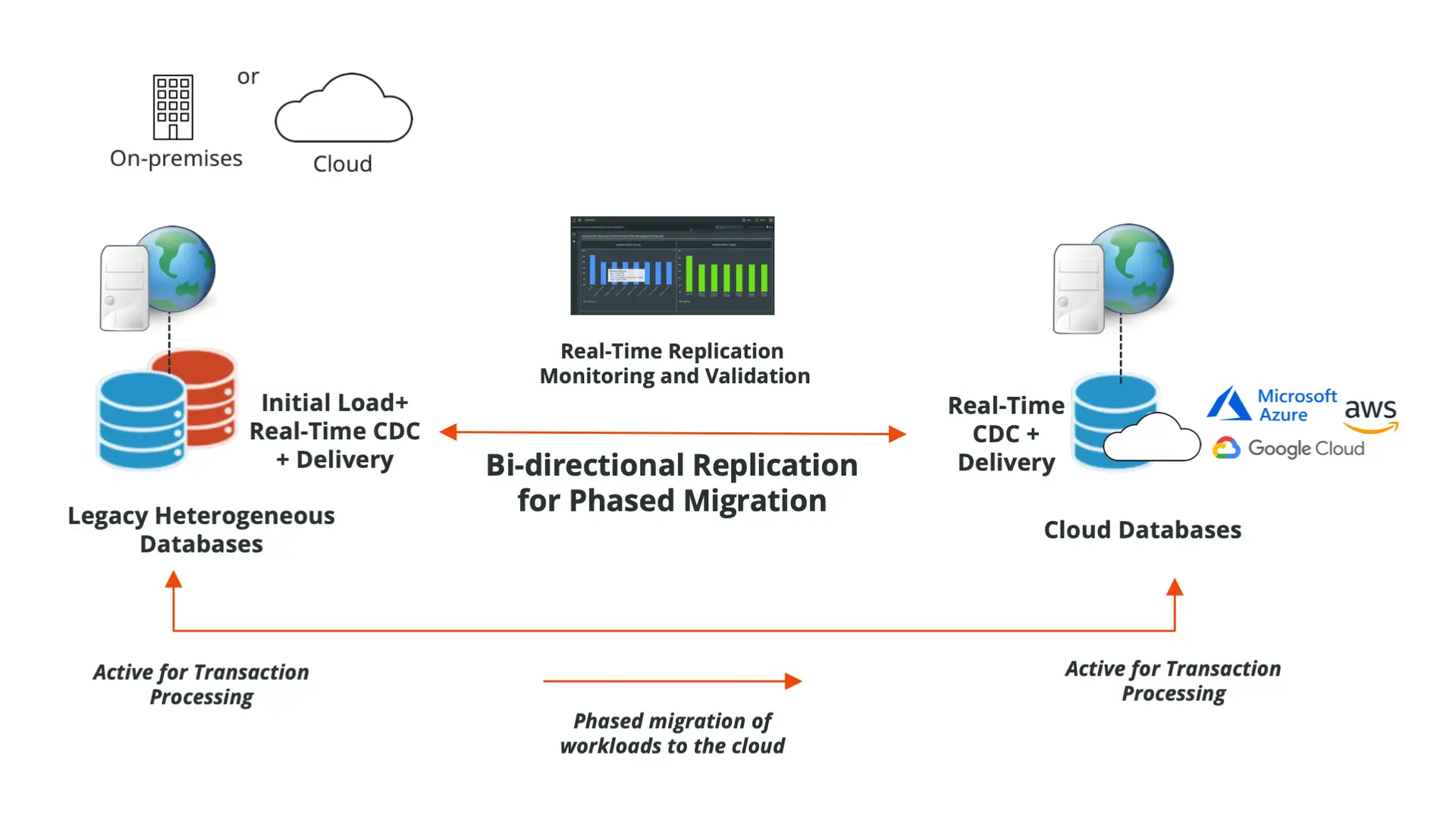

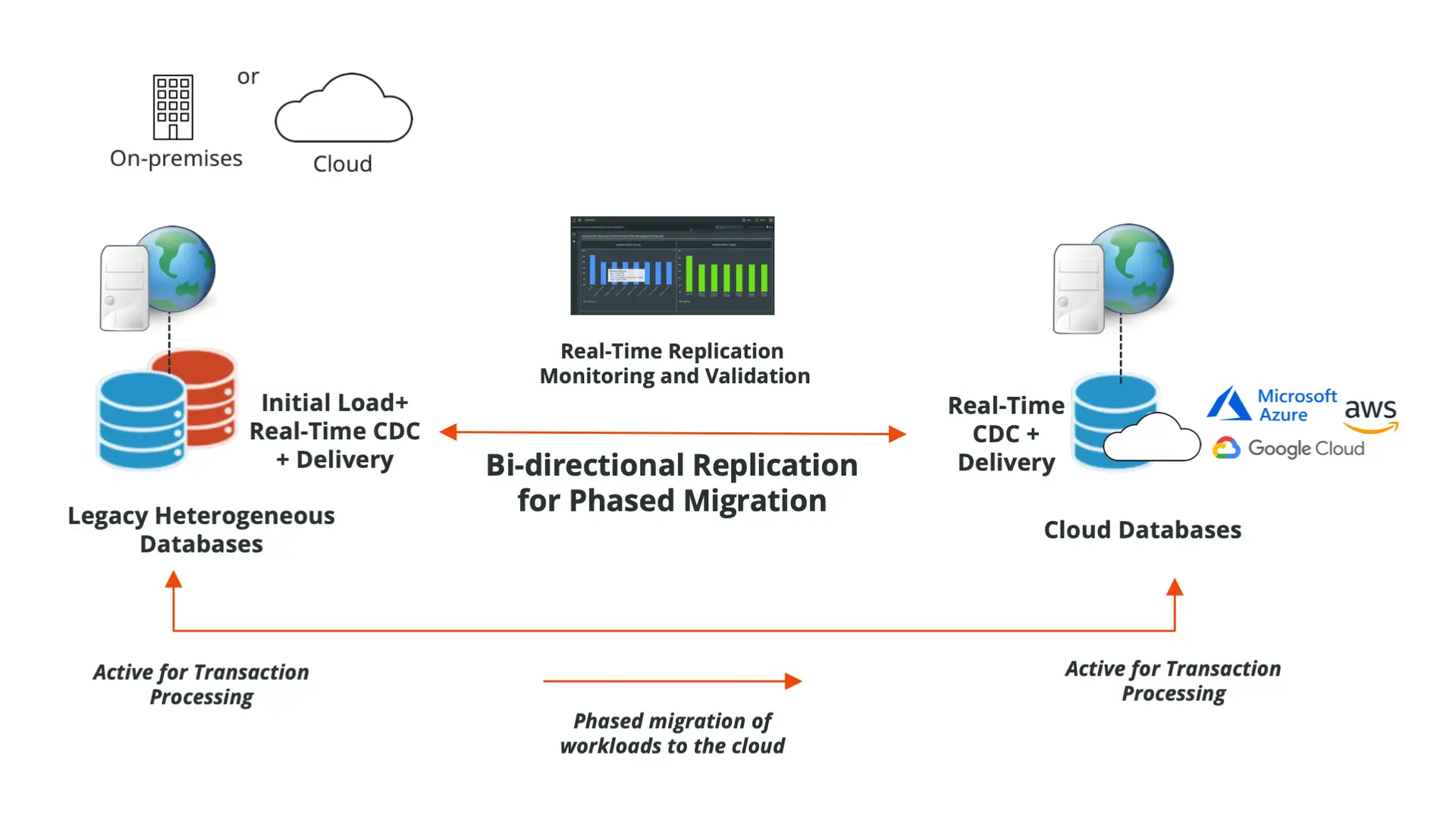

Phased Migration with Parallel Use

A more complex but highly effective approach to risk mitigation and thorough testing is a gradual migration. Bi-directional real-time data replication keeps the cloud and on-premises legacy systems in sync while both are open to transactions and support the application.
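One practical concern with bi-directional replication is making sure a change that was replicated from one side is not sent straight back to where it came from. A common way to handle this is to tag every change with its origin and forward only locally originated changes. The sketch below illustrates that idea under simplifying assumptions; the `Database` class and `replicate` function are hypothetical, and conflict resolution for concurrent writes to the same key is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class Database:
    name: str
    rows: dict = field(default_factory=dict)
    change_log: list = field(default_factory=list)

    def write(self, key, value, origin=None):
        """Store a row and log the change, tagged with the system it originated on."""
        self.rows[key] = value
        self.change_log.append({"key": key, "value": value, "origin": origin or self.name})


def replicate(source: Database, target: Database, start: int = 0) -> int:
    """Forward changes logged on source from position start; return the new position."""
    entries = source.change_log[start:]
    for change in entries:
        # Skip changes that arrived via replication, otherwise they would loop forever.
        if change["origin"] == source.name:
            target.write(change["key"], change["value"], origin=source.name)
    return start + len(entries)


legacy = Database("legacy")
cloud = Database("cloud")

legacy.write("user-1", "alice")      # written by a user still on the old system
cloud.write("user-2", "bob")         # written by a user already on the new system

legacy_pos = replicate(legacy, cloud)
cloud_pos = replicate(cloud, legacy)

# A second round finds nothing new to forward, so the logs stop growing.
legacy_pos = replicate(legacy, cloud, legacy_pos)
cloud_pos = replicate(cloud, legacy, cloud_pos)

print(legacy.rows == cloud.rows)                       # True
print(len(legacy.change_log), len(cloud.change_log))   # 2 2
```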

You can move some users to the new system and leave others in the old, running both in parallel as you test the new system. As the testing with the production workload progresses as planned, you can add new users in a phased and gradual manner that minimizes risks.
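The phased rollout itself can be driven by a simple, deterministic routing rule at the application layer. This is a sketch of one possible approach rather than a feature described in this article: the `bucket` and `route` functions are hypothetical, and the rule assigns each user a stable bucket from 0 to 99 so that raising the percentage only ever moves users from the legacy system to the cloud, never back.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def route(user_id: str, cloud_percent: int) -> str:
    """Send the user to the cloud system if their bucket falls under the rollout percentage."""
    return "cloud" if bucket(user_id) < cloud_percent else "legacy"


users = [f"user-{n}" for n in range(1000)]

for percent in (0, 10, 50, 100):
    on_cloud = sum(route(u, percent) == "cloud" for u in users)
    print(f"{percent}% rollout: {on_cloud} of {len(users)} users on the cloud system")
```

Because the bucket depends only on the user ID, each user sees a consistent system from one request to the next, and the rollout percentage becomes a single dial you can turn up as testing with the production workload progresses.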

Migration Is Only the First Step

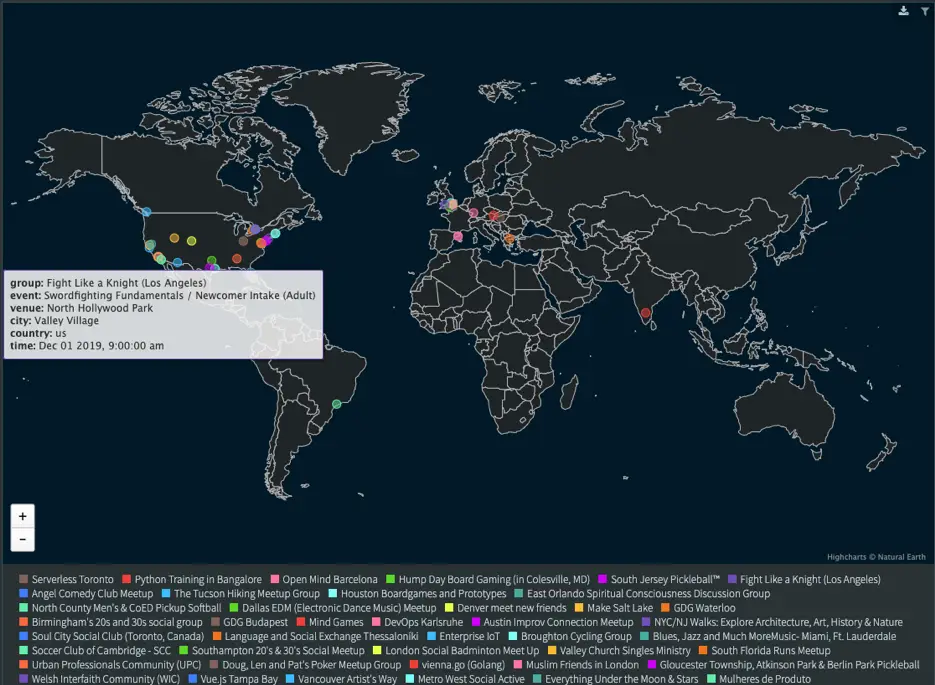

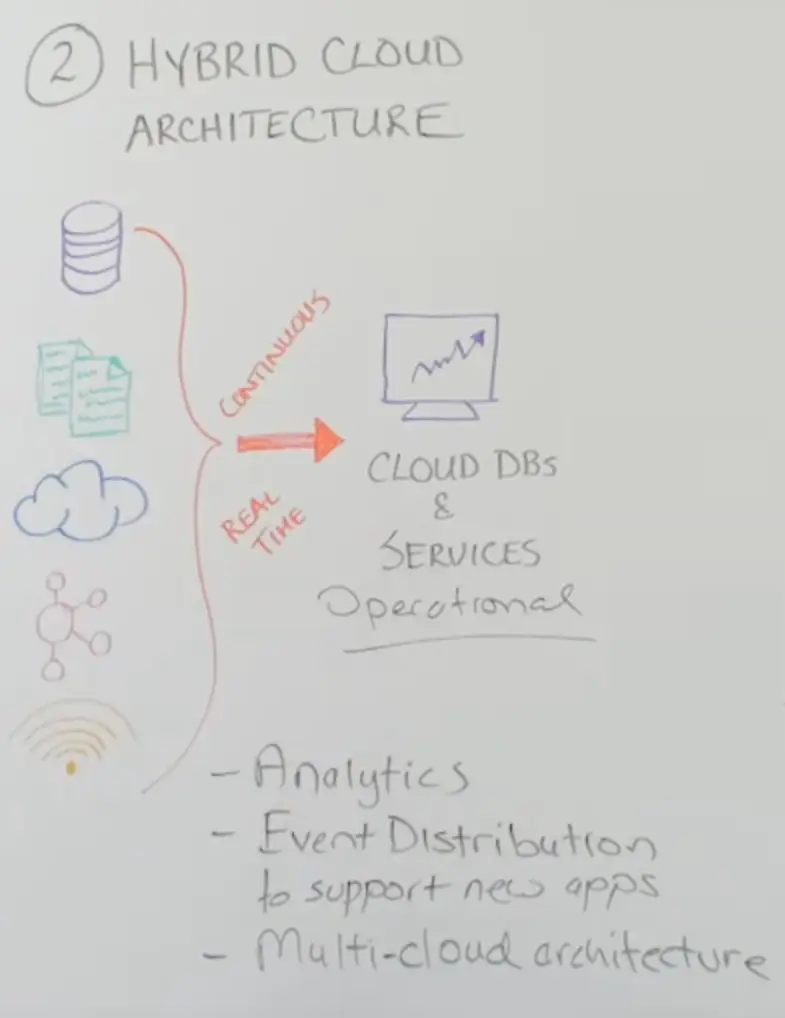

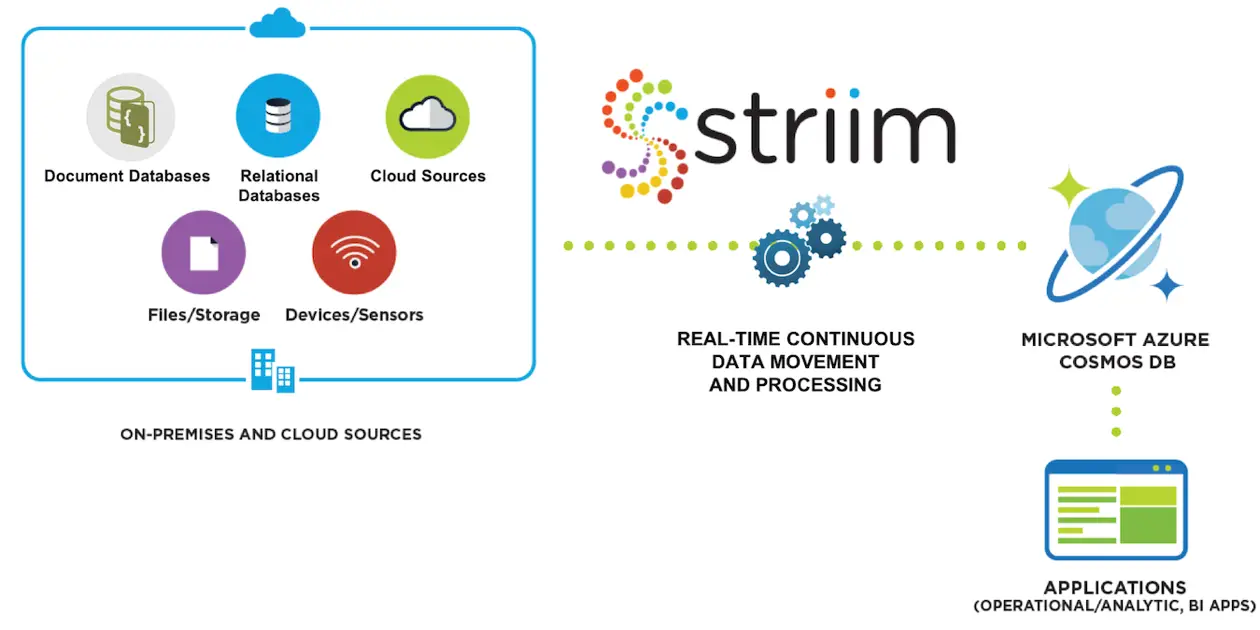

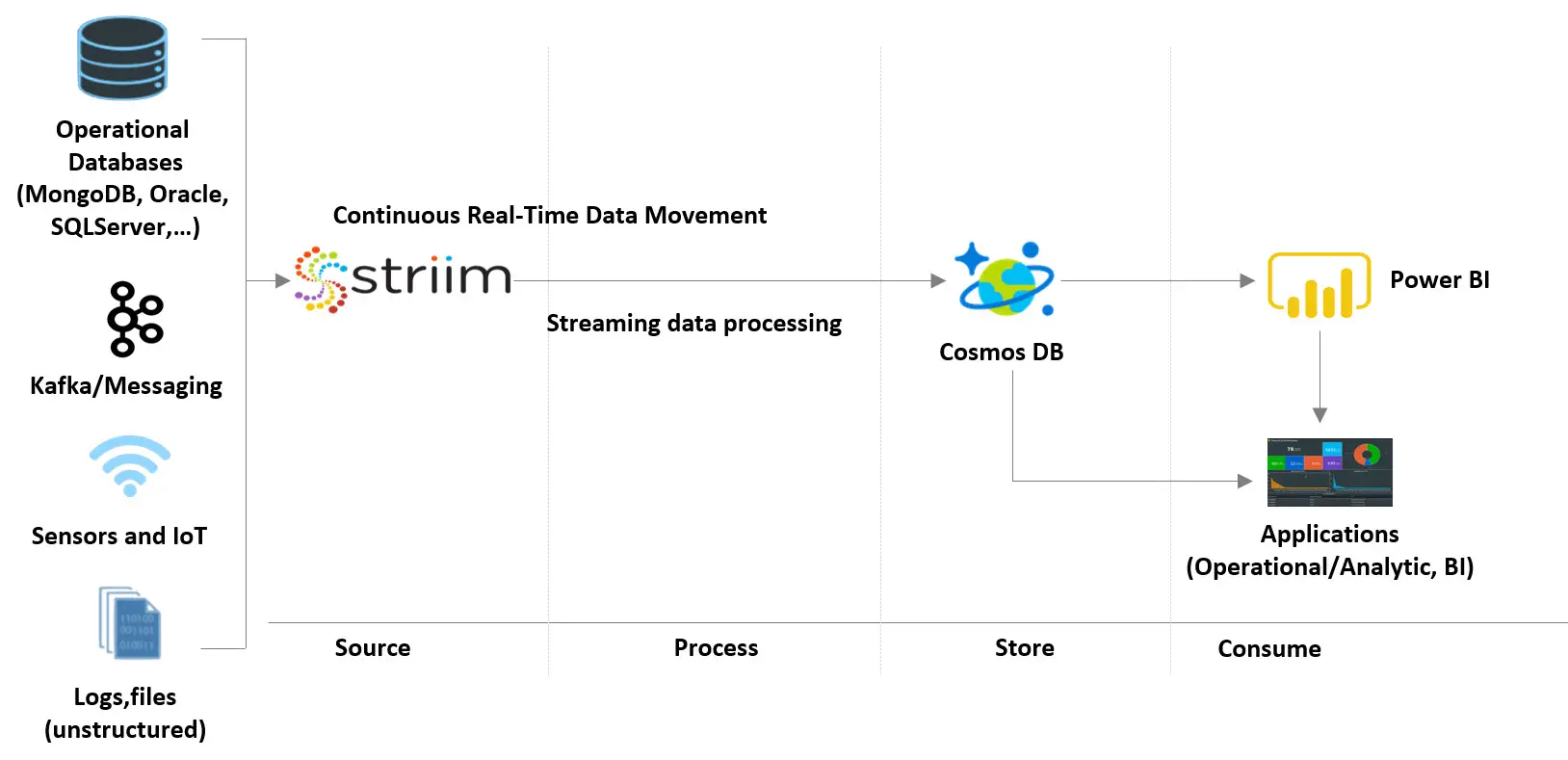

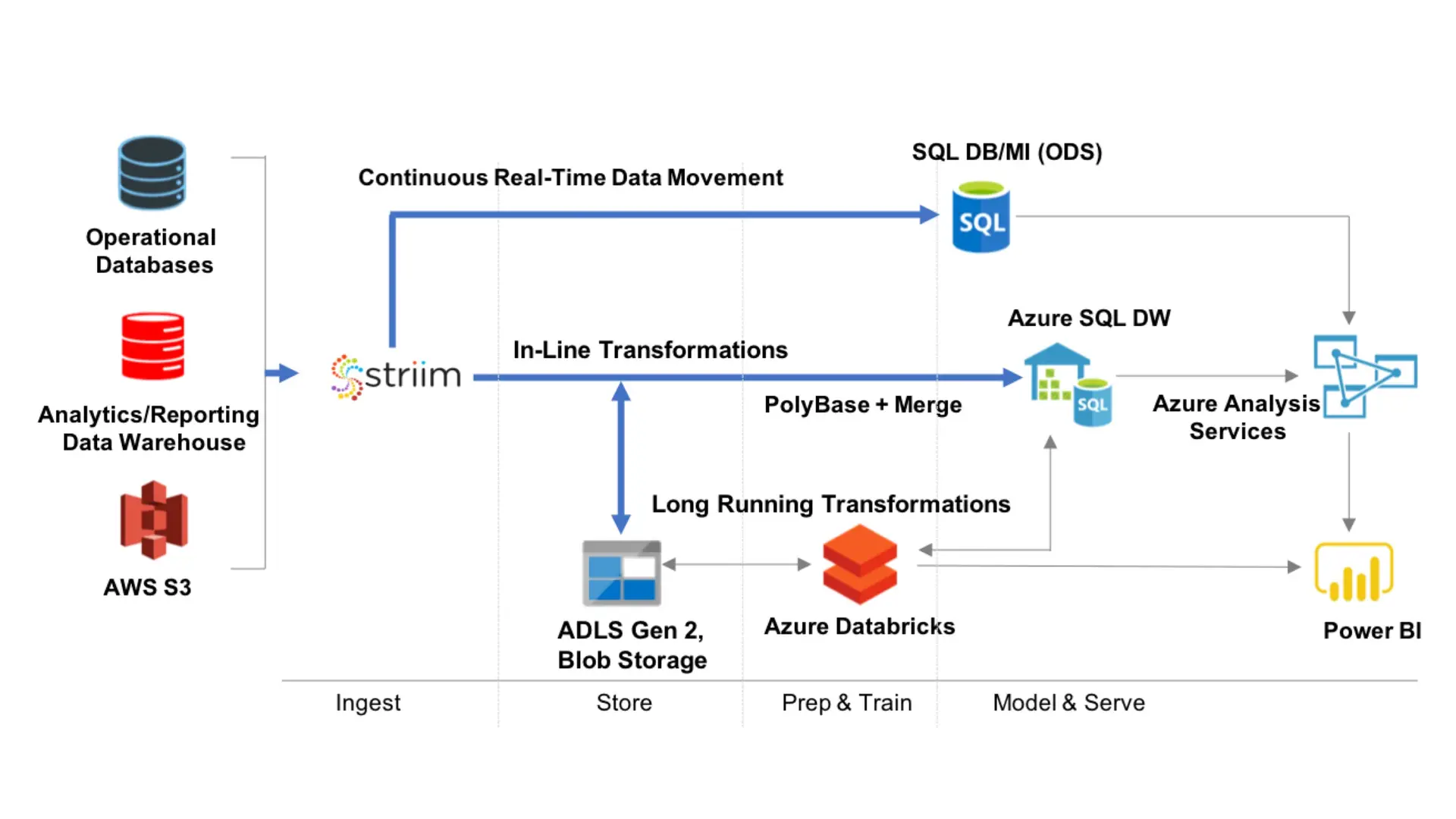

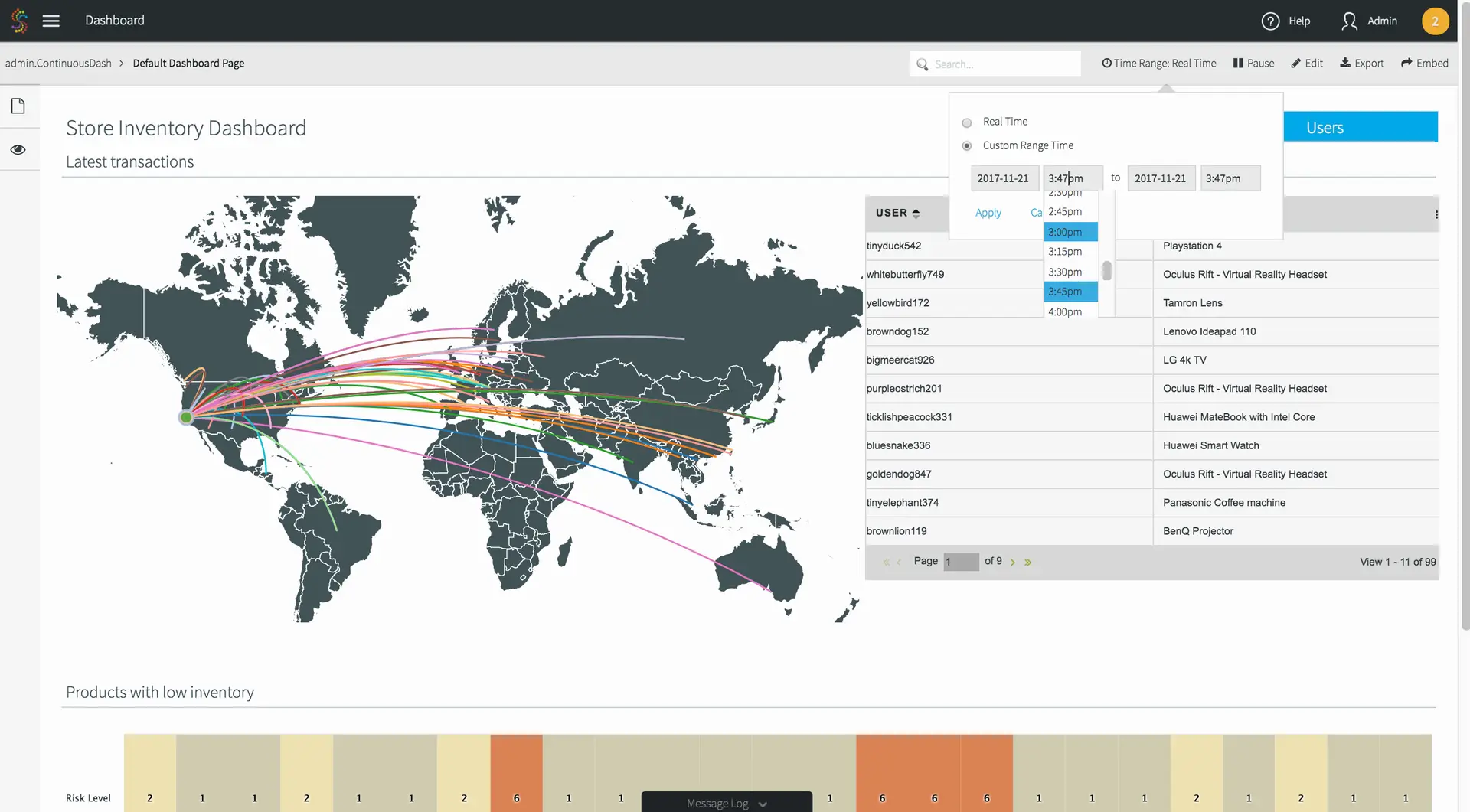

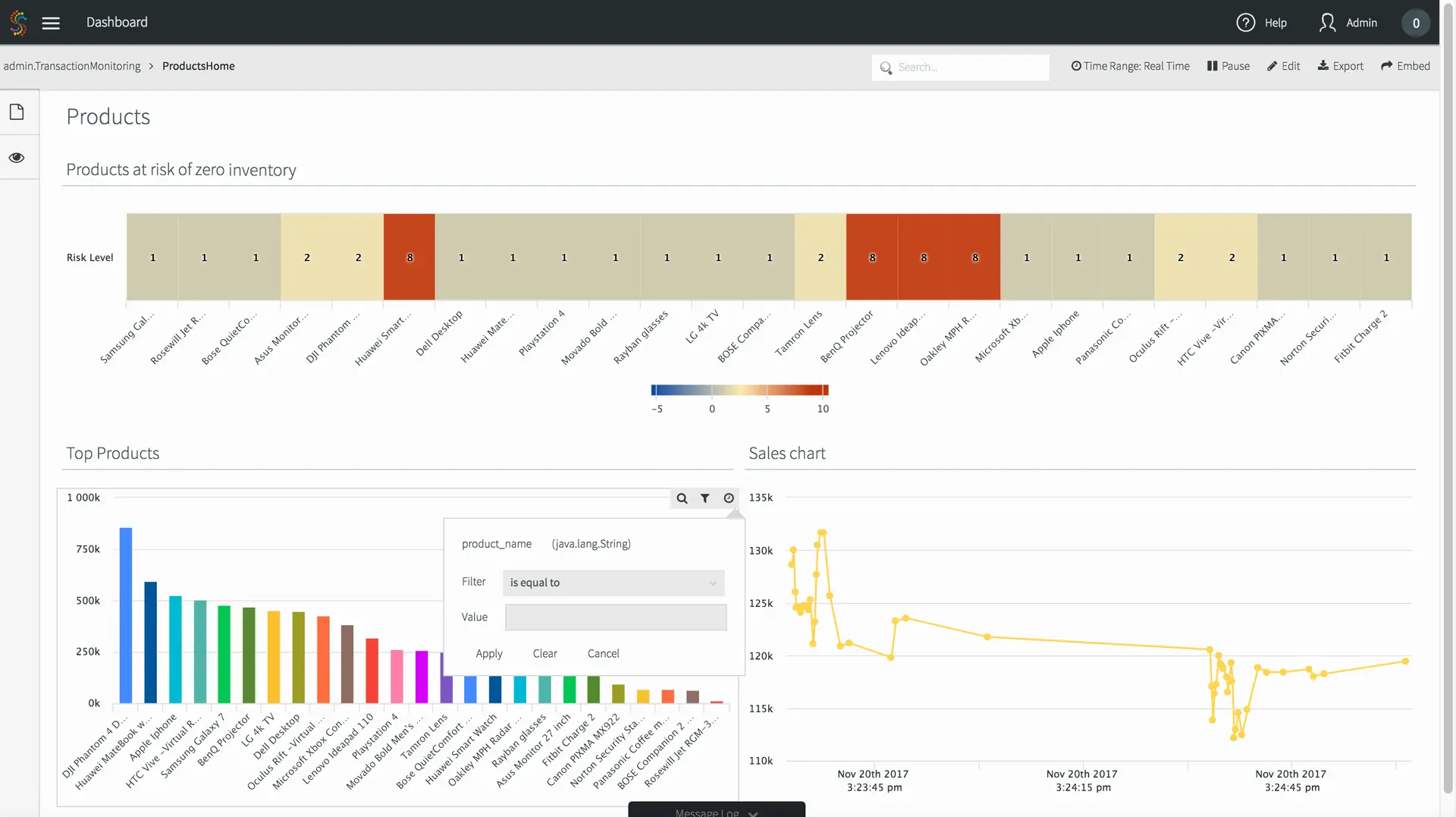

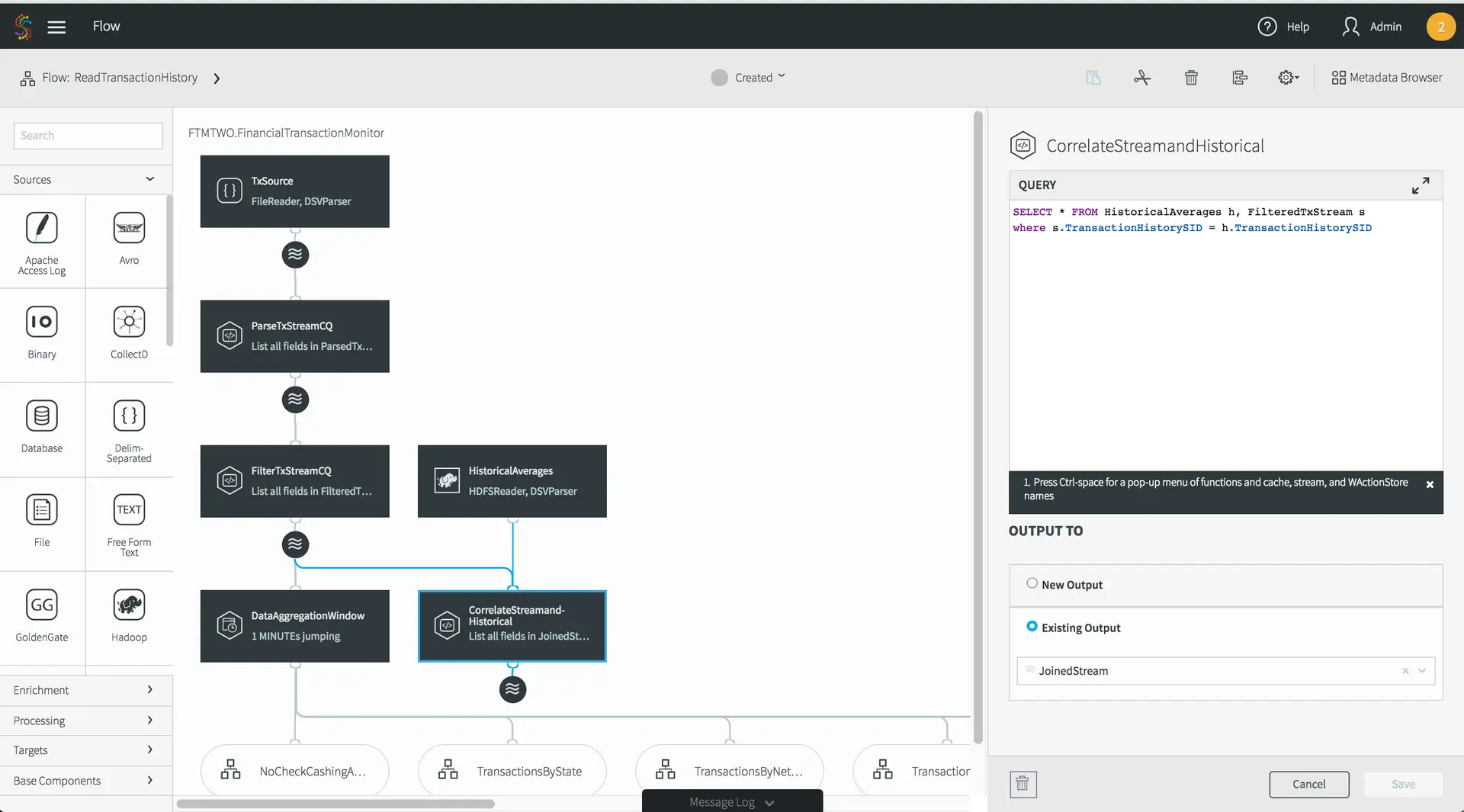

Streaming data integration is not only for on-premises-to-cloud migration. Once you have the cloud database in production, you can use it for ongoing integration with relevant data sources and applications across the enterprise, including those in other clouds.

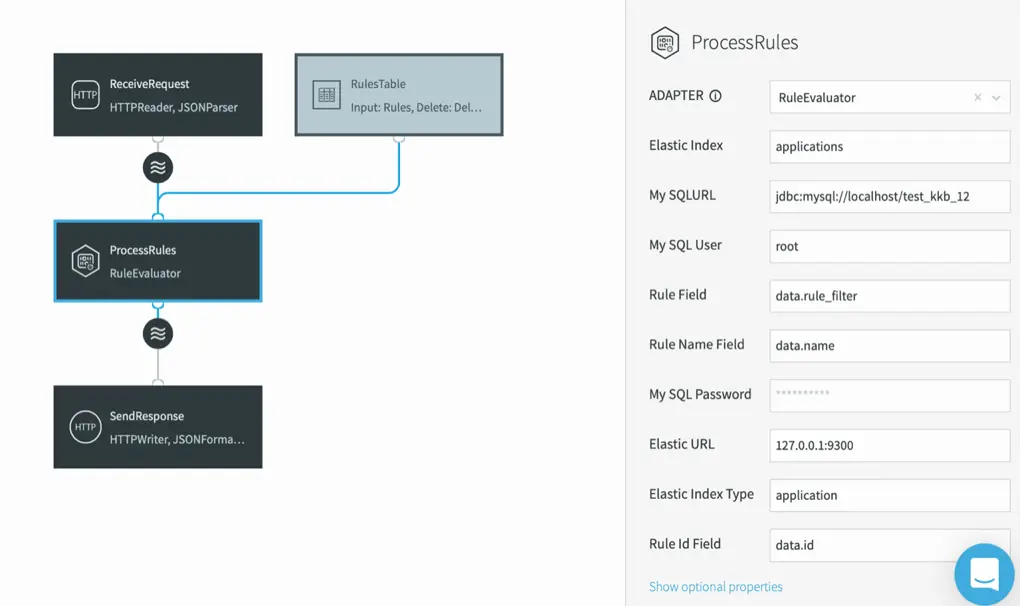

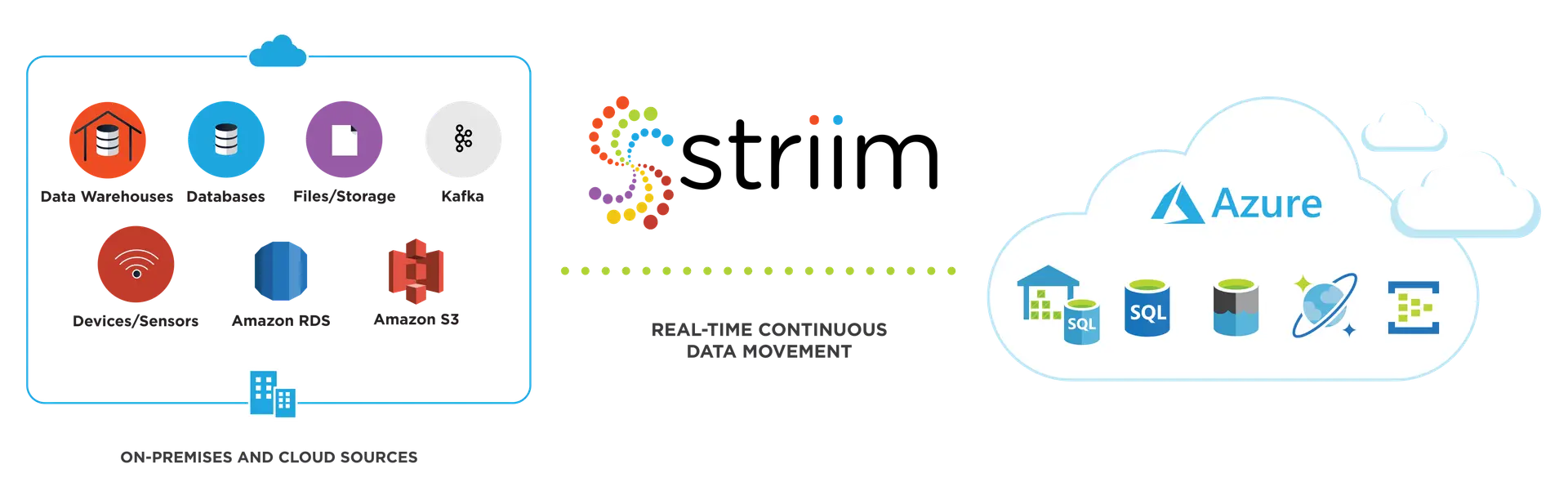

Striim is designed for continuous data integration, with stream processing capabilities, to support your hybrid cloud architecture. When you use a single cloud integration solution for both the database migration and ongoing data integration, you minimize development effort, shorten the learning curve, and reduce risk through a simpler solution architecture.

With strong partnerships with leading cloud vendors, Striim offers proven solutions that minimize your risks during data migration and ongoing integration. To learn more about how Striim can help with your on-premises-to-cloud migration, I invite you to schedule a brief demo with a Striim technologist.

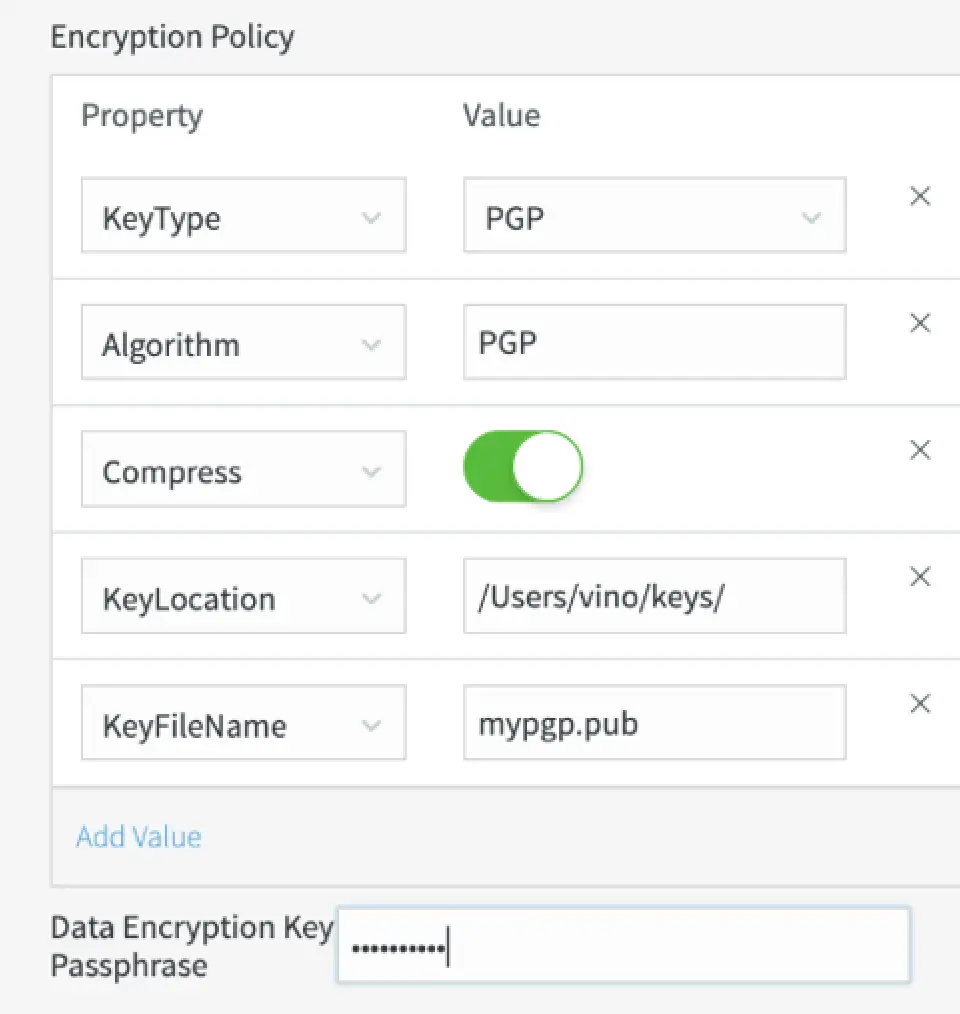

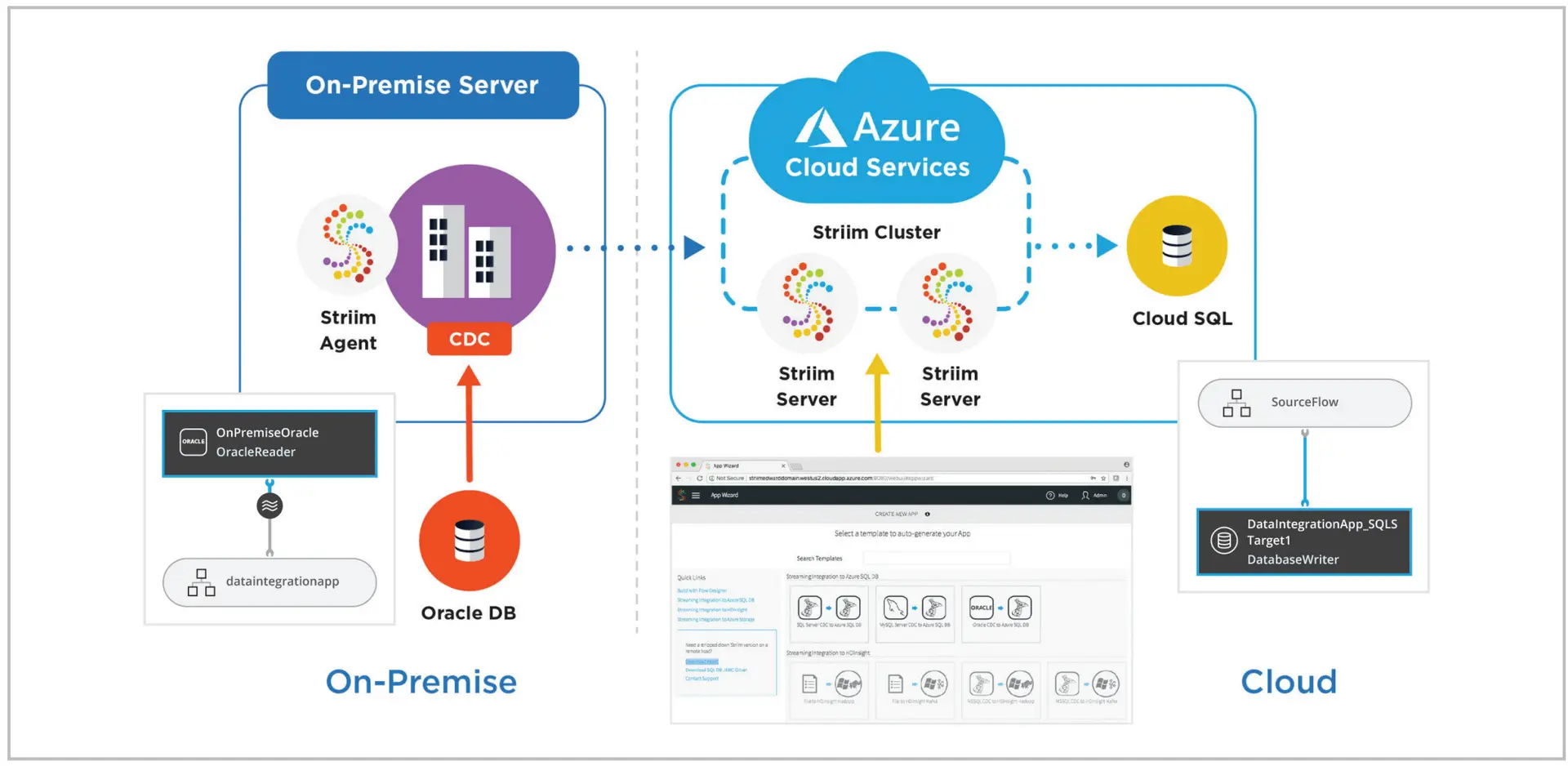

Building continuous, streaming data pipelines from on-premises databases to production cloud databases for critical workloads requires a secure, scalable, and reliable integration solution. Especially if you have enterprise database sources that cannot tolerate performance degradation, traditional batch ETL will not suffice. Striim’s low-impact change data capture (CDC) feature minimizes overhead on the source systems while moving database operations (inserts, updates, and deletes) to Azure SQL DB in real time with security, reliability, and transactional integrity.
