As Cloudera increasingly invests in its Enterprise Data Cloud, the ability to move data to Cloudera via change data capture (CDC) has never been more important. Database CDC to Cloudera helps Cloudera users gain more operational value from their analytics solutions by loading critical database transactions in real time.

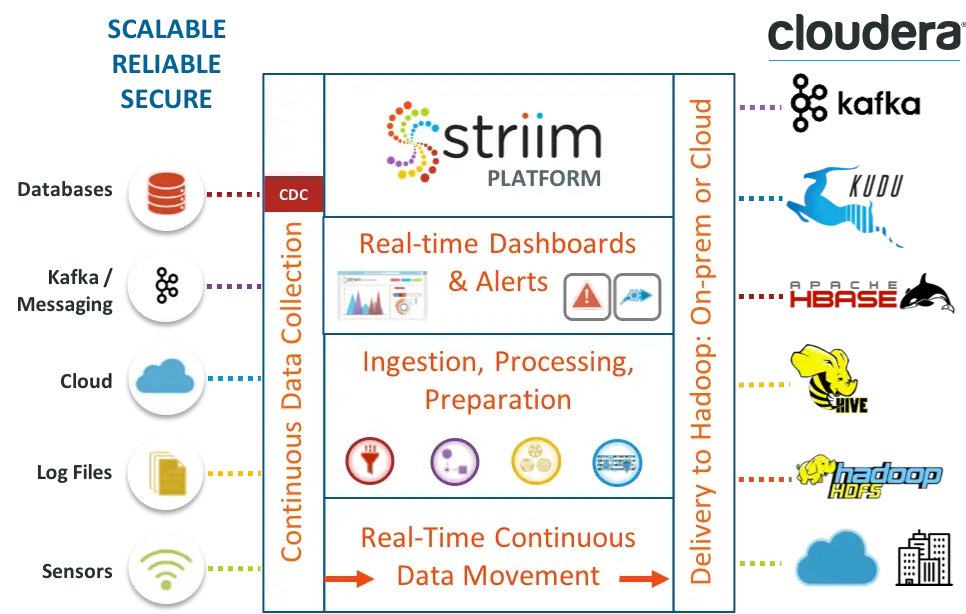

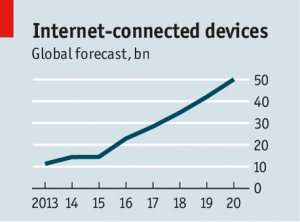

The timely ingestion of large volumes of data into Cloudera is imperative to realizing the true operational value of the platform. The explosion in the amount of data generated, and the variety of data formats residing in traditional relational databases and data warehouses, require an ingestion process that is real-time and scalable.

Traditional methods such as batch ETL uploads cannot keep pace with today’s business timeframes. Latency renders operational and transactional data obsolete, leaving Cloudera solutions without the real-time data required for operational intelligence and reporting. Batch processing also degrades the performance of transactional databases, which is another major reason to move only the changed data, and to move it continuously.

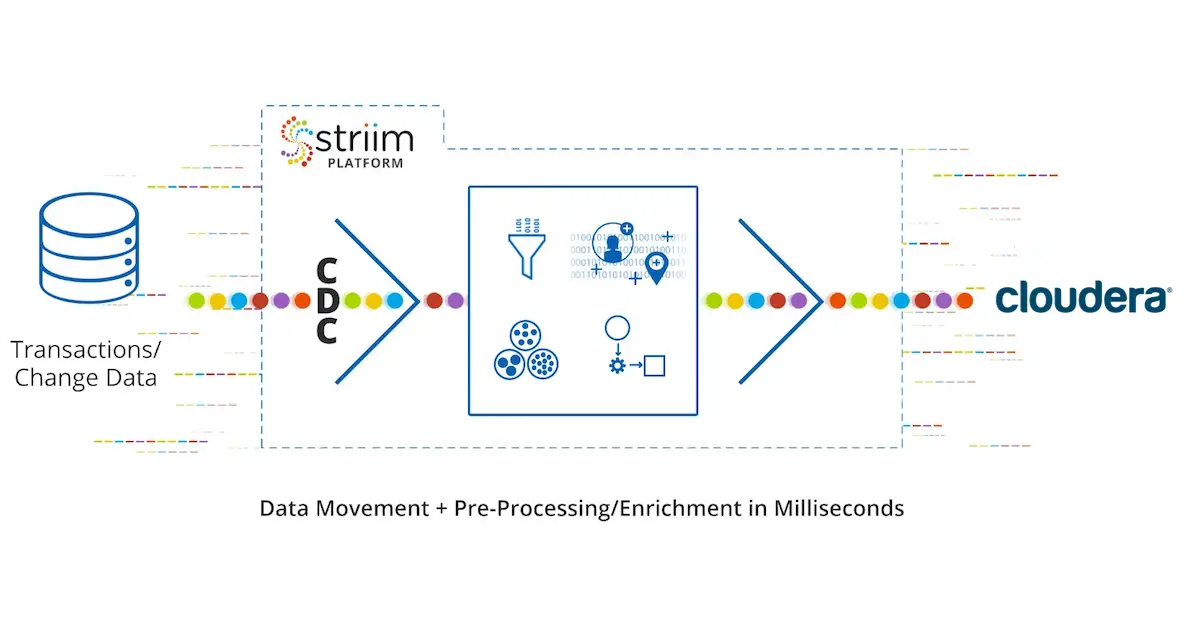

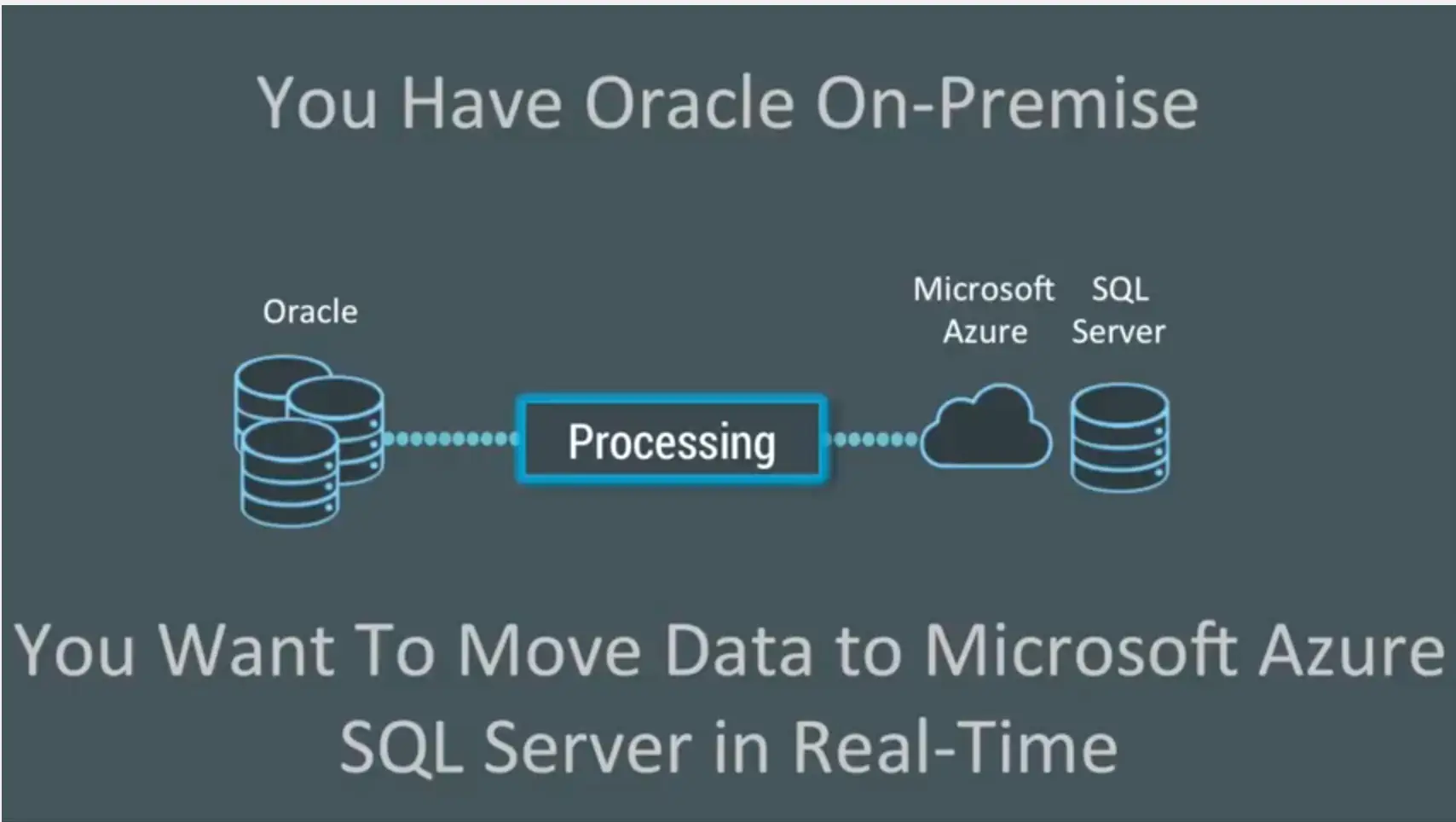

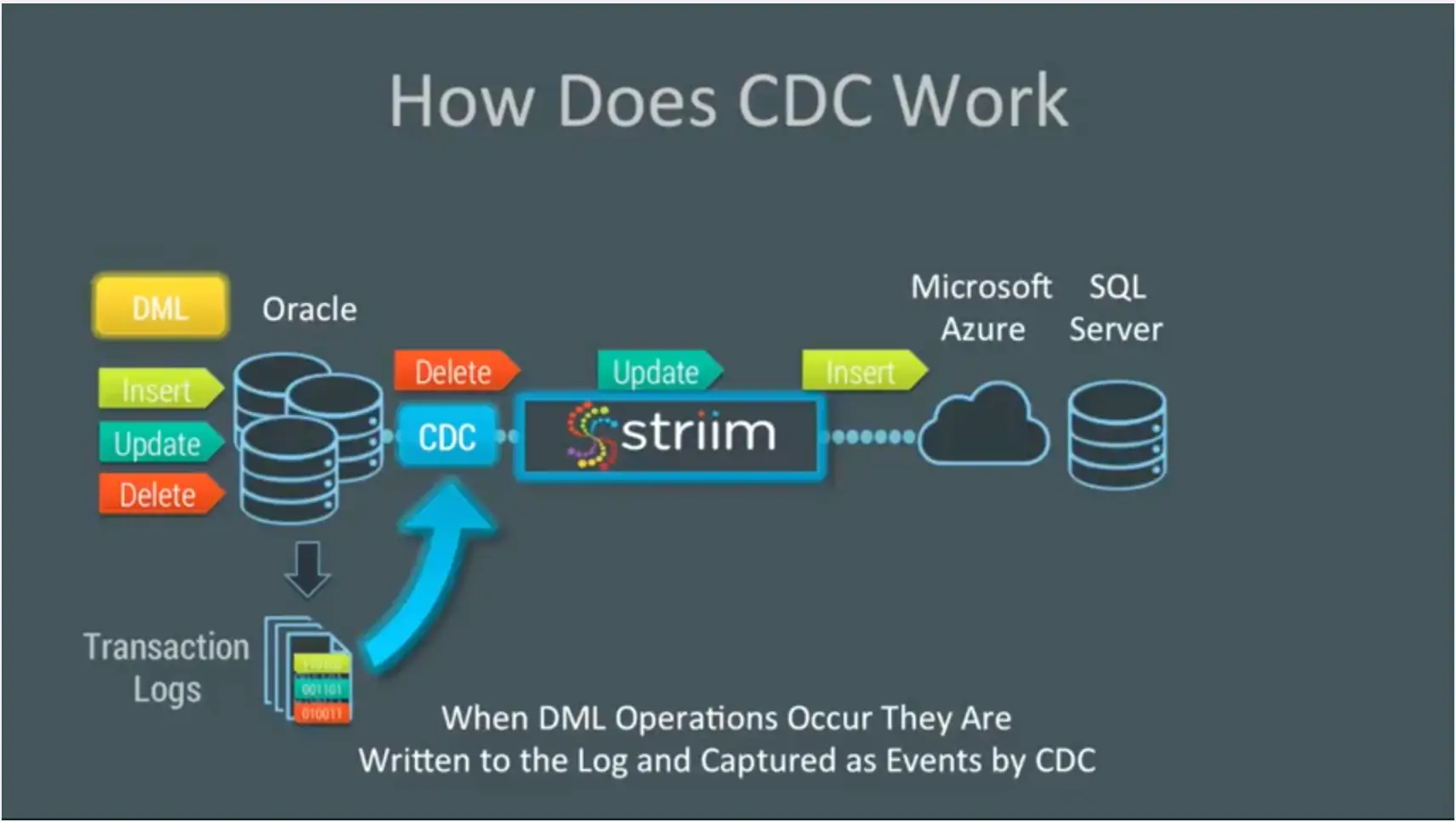

To address these concerns, Striim offers CDC to Cloudera, a solution that ingests changed data from databases in real time. This enterprise-grade streaming data integration solution for Cloudera supports high-volume environments and allows users to move real-time data from a wide variety of sources without impacting source systems.

Because Striim moves only change data, continuously and at scale, Cloudera users can rely on the platform for data delivery. Data can be loaded as-is, or with a variety of processing, transformations or enrichments applied, all with sub-second latency and in the right format to support specific use cases.

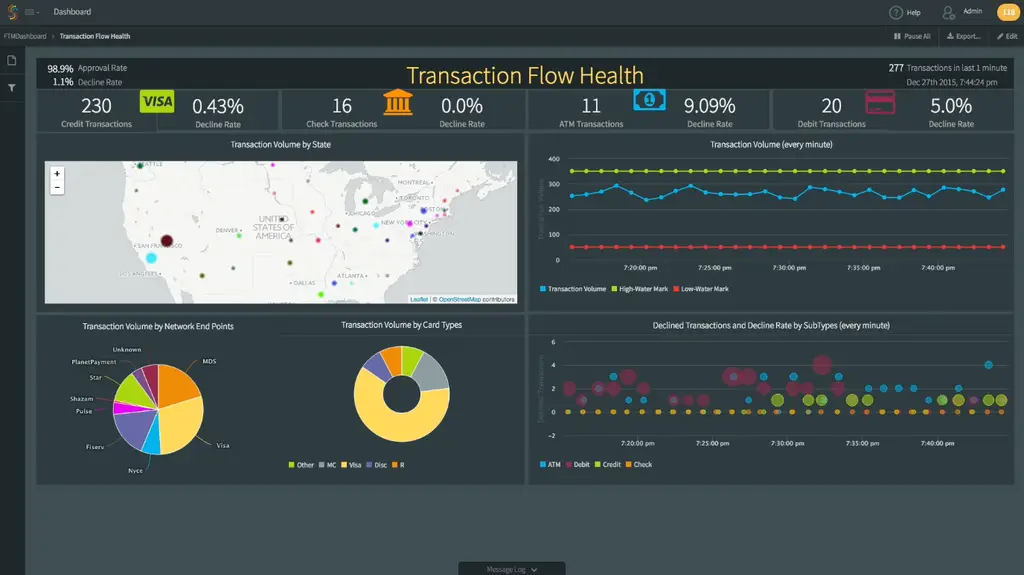

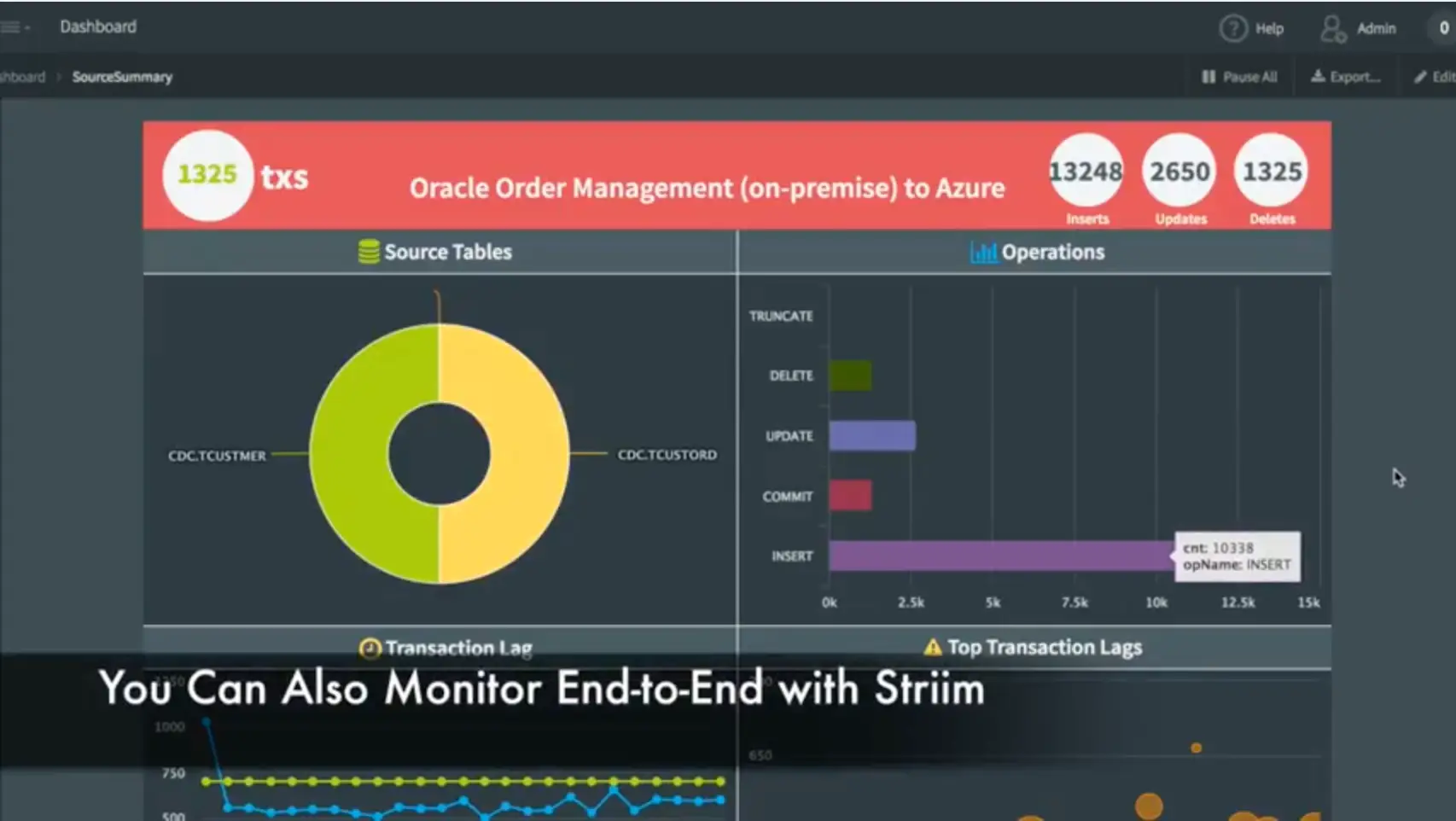

A one-time initial load with continuous change updates ensures up-to-the-second data delivery to Cloudera to support operational decision making. Striim also offers real-time pipeline monitoring with alerting, which is particularly important in the context of mission-critical solutions.
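To make the pattern concrete, here is a minimal sketch of an initial load followed by continuous change updates. It is an illustration of the general CDC idea only, not Striim’s API or event format: the `ChangeEvent` class, the `initial_load`, `apply_change`, and `replicate` functions, and the `id` key field are all hypothetical names, and an in-memory dictionary stands in for the Cloudera target.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional

Row = Dict[str, Any]


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical change record (an assumption, not Striim's format)."""
    op: str                    # "insert", "update", or "delete"
    key: int                   # primary key of the affected row
    row: Optional[Row] = None  # new row image; None for deletes


def initial_load(source_rows: Iterable[Row], key_field: str = "id") -> Dict[int, Row]:
    """Copy a one-time snapshot of the source table into the target."""
    return {row[key_field]: dict(row) for row in source_rows}


def apply_change(target: Dict[int, Row], event: ChangeEvent) -> None:
    """Apply one change event to the target; events must arrive in commit order."""
    if event.op in ("insert", "update"):
        if event.row is None:
            raise ValueError(f"{event.op} event for key {event.key} has no row image")
        target[event.key] = dict(event.row)
    elif event.op == "delete":
        # Deleting a key that is already absent is treated as a no-op.
        target.pop(event.key, None)
    else:
        raise ValueError(f"unknown operation: {event.op}")


def replicate(snapshot: Iterable[Row], changes: Iterable[ChangeEvent]) -> Dict[int, Row]:
    """Run the initial load, then apply the change stream on top of it."""
    target = initial_load(snapshot)
    for event in changes:
        apply_change(target, event)
    return target


# Example: two rows are loaded once, then three changes are applied.
snapshot = [{"id": 1, "status": "new"}, {"id": 2, "status": "new"}]
changes = [
    ChangeEvent("update", 1, {"id": 1, "status": "shipped"}),
    ChangeEvent("delete", 2),
    ChangeEvent("insert", 3, {"id": 3, "status": "new"}),
]
target = replicate(snapshot, changes)
```

After the initial load, only the three change events touch the target; the source table is never re-read in full, which is the property that keeps the load on the source system low.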

Striim currently offers low-impact, log-based CDC to Cloudera from the following data sources: Oracle, Microsoft SQL Server, MySQL, PostgreSQL, HPE NonStop SQL/MX, HPE NonStop SQL/MP, HPE NonStop Enscribe, MongoDB, and MariaDB. All of these databases can be accessed via Striim’s easy-to-use wizards and drag-and-drop UI, speeding delivery of CDC to Cloudera solutions. In addition, Striim offers pre-built starter integration applications, such as PostgreSQL CDC to Kafka, that can significantly reduce the development effort for any CDC-based application.

If you’d like a brief walk-through of Striim’s CDC to Cloudera offering, please schedule a demo.
