In many modern enterprises, data infrastructure is a patchwork from different eras. You might have core mainframes running alongside heavy SAP workloads, while a fleet of cloud-native applications handles your customer-facing services. To keep these systems in sync, Change Data Capture (CDC) has likely become a central part of your strategy.

For many, Qlik Replicate (formerly Attunity) has been a reliable anchor for this work. It handles heterogeneous environments well and provides a steady foundation for moving data across the business. But as data volumes grow and the demand for real-time AI and sub-second analytics increases, even the most robust legacy solutions can start to feel restrictive.

Whether you’re looking to optimize licensing costs, find more accessible documentation, or move toward a more cloud-native architecture, you aren’t alone. Many organizations are now exploring Qlik Replicate alternatives that offer greater flexibility and more modern streaming capabilities.

In this guide, we’ll deep-dive into the top data replication platforms to help you choose the right fit for your enterprise architecture. We’ll look at:

- Striim

- Fivetran HVR

- Oracle GoldenGate

- AWS Database Migration Service (DMS)

- Informatica PowerCenter

- Talend Data Fabric

- Hevo Data

- Airbyte

Before we break down each platform, let’s align on what modern data replication actually looks like today.

What Are Data Replication Platforms?

Data replication refers to the process of keeping multiple data systems in sync. However, in an enterprise context, it’s much more than just copying files. Modern data replication platforms are sophisticated systems that capture, move, and synchronize data across your entire stack, often in real time. Think of it as the central nervous system of your data architecture. These platforms manage high-throughput pipelines that connect diverse sources: from legacy on-premise databases to modern cloud environments like AWS, Azure, and Google Cloud. Unlike traditional batch processing, which might only update your systems every few hours, modern replication platforms use log-based Change Data Capture (CDC). This allows them to track and move only the specific data that has changed, reducing system load and ensuring that your analytics, machine learning workflows, and customer-facing apps are always working with the freshest data available.

The Strategic Benefits of Real-Time Replication

Moving data continuously is a strategic choice that can fundamentally change how your business operates. When you shift from “stale” batch data to real-time streams, you unlock several key advantages:

- Accelerated Decision-Making: When your data latency is measured in milliseconds rather than hours, your team can spot emerging trends and respond to operational issues as they happen.

- Operational Excellence Through Automation: Manual batch workflows are prone to failure and require constant oversight. Modern platforms automate the data movement process, including schema evolution and data quality monitoring, freeing up your engineering team for higher-value work.

- A Foundation for Real-Time AI: Generative AI and predictive models are only as good as the data feeding them. Real-time replication ensures your AI applications are informed by the most current state of your business, not yesterday’s reports.

- Total Cost of Ownership (TCO) Optimization: Scaling traditional batch systems often requires massive, expensive compute resources. Modern, cloud-native replication platforms are built to scale elastically with your data volumes, often resulting in a much lower TCO.

Now that we’ve defined the landscape, let’s look at the leading solutions on the market, starting with the original platform we’re comparing against.

Qlik Replicate: The Incumbent

Qlik Replicate is a well-established name in the data integration space. Known for its ability to handle “big iron” sources like mainframes and complex SAP environments, it has long been a go-to solution for organizations needing to ingest data into data warehouses and lakes with minimal manual coding.

Key Capabilities

- Log-Based CDC: Qlik Replicate specializes in non-invasive change data capture, tracking updates in the source logs to avoid putting unnecessary pressure on production databases.

- Broad Connectivity: It supports a wide range of sources, including RDBMS (Oracle, SQL Server, MySQL), legacy mainframes, and modern targets like Snowflake, Azure Synapse, and Databricks.

- No-Code Interface: The platform features a drag-and-drop UI that automates the generation of target schemas, which can significantly speed up the initial deployment of data pipelines.

Who is it for?

Qlik Replicate is typically a fit for large organizations that deal with highly heterogeneous environments. It performs well in scenarios involving complex SAP data integration, large-scale cloud migrations, or hybrid architectures where data needs to flow seamlessly between on-premise systems and the cloud.

The Trade-offs

While powerful, Qlik Replicate isn’t without its challenges.

- Cost: It is positioned as a premium enterprise solution. Licensing costs can be substantial, especially as your data volume and source count increase.

- Complexity: Despite the no-code interface, the initial configuration and performance tuning often require deep technical expertise.

- Documentation Gaps: Users frequently report that the documentation can be shallow, making it difficult to troubleshoot advanced edge cases without engaging expensive professional services.

For a more detailed breakdown, you can see how Striim compares directly with Qlik Replicate. For many organizations, these friction points—combined with a growing need for sub-second streaming rather than just replication—are what drive the search for an alternative.

Top 8 Alternatives to Qlik Replicate

The following platforms offer different approaches to data replication, ranging from developer-focused open-source solutions to fully managed, real-time streaming platforms.

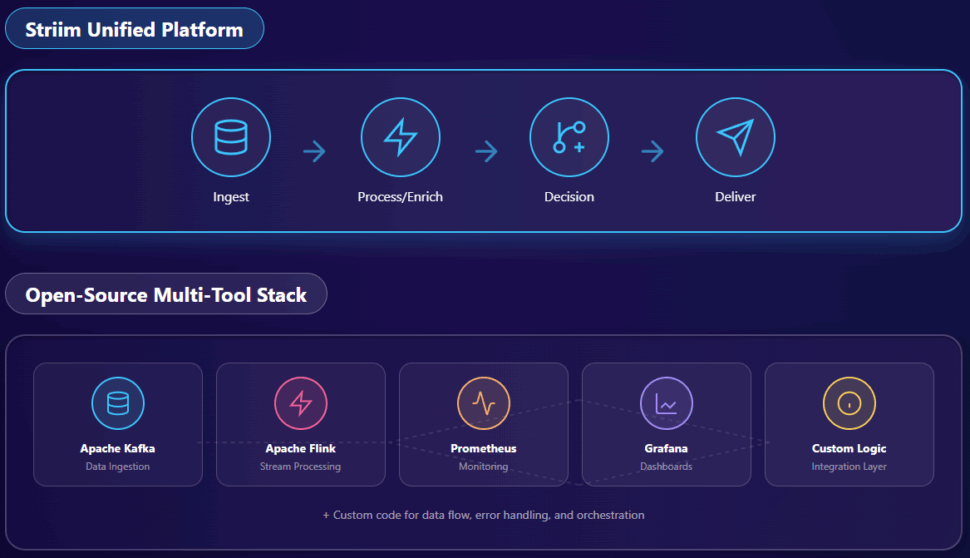

1. Striim: Real-Time Data Integration and Intelligence

Striim is the world’s leading Unified Integration and Intelligence Platform. Unlike many replication tools that focus solely on moving data from point A to point B, Striim is architected for the era of real-time AI. It allows enterprises to not only replicate data but also process, enrich, and analyze it while it’s still in motion.

Key Capabilities

- Sub-Second Log-Based CDC: Striim captures changes from production databases (Oracle, SQL Server, PostgreSQL, MySQL, etc.) as they happen, ensuring your downstream systems are updated within milliseconds.

- In-Flight Processing and Transformation: With a built-in SQL-based engine, you can filter, aggregate, and enrich data streams before they reach their destination. This is critical for data quality and for preparing data for AI models.

- Unified Intelligence: Striim doesn’t just move data; it helps you understand it. Features like Striim Copilot bring natural language interaction to your infrastructure, making it easier for practitioners to build and manage complex pipelines.

- Cloud-Native and Hybrid Deployment: Whether you’re running on-premise, in a private cloud, or across multiple public clouds (AWS, Google Cloud, Azure), Striim provides a consistent, high-performance experience.

Best For

Striim is the ideal choice for enterprises that cannot afford “stale” data. If you are building event-driven architectures, real-time fraud detection systems, or AI-powered customer experiences that require the most current information, Striim is designed for your needs. It’s particularly effective for companies moving away from the “data mess” of legacy batch processing toward a more agile, real-time strategy.

Pros

- Unmatched Latency: Designed from the ground up for sub-second performance.

- Intelligently Simple: Provides a powerful yet manageable interface that demystifies complex data flows.

- Radically Unified: Breaks down data silos by connecting legacy systems directly to modern analytics and AI platforms.

- Enterprise-Grade Support: A responsive, knowledgeable team that understands the pressures of mission-critical workloads.

Considerations

- Learning Advanced Features: While the basic setup is intuitive, mastering complex in-flight SQL transformations and real-time analytics requires a dedicated effort from your data engineering team.

- Enterprise Focus: As a high-performance solution, Striim is primarily built for enterprise-scale workloads rather than small-scale, simple migrations.

2. Fivetran HVR: High-Volume Enterprise Replication

Fivetran HVR (High Volume Replication) is a heavy-duty replication solution that Fivetran acquired to address complex, enterprise-level data movement. It is often seen as a direct alternative to Qlik Replicate due to its focus on log-based CDC and its ability to handle massive data volumes across heterogeneous environments.

Key Capabilities

- Distributed Architecture: HVR uses a unique “hub and spoke” architecture that places light-weight agents close to the data source, optimizing performance and security for hybrid cloud environments.

- Broad Database Support: It handles most major enterprise databases (Oracle, SAP, SQL Server) and specializes in high-speed ingestion into modern cloud data warehouses like Snowflake and BigQuery.

- Built-in Validation: The platform includes a robust “Compare” feature that continuously verifies that the source and target remain in perfect sync.

Pros

- Proven Performance: Replicates large datasets with high throughput and low latency.

- Security-Focused: Highly certified (SOC 2, GDPR, HIPAA) with encrypted, secure data transfers.

- Simplified Management: Since the Fivetran acquisition, HVR has benefited from a more modern, centralized dashboard for monitoring.

Cons

- Cost at Scale: Usage-based pricing (Monthly Active Rows) can become difficult to predict and expensive as data volumes surge.

- Complex Setup: Despite the newer dashboard, configuring the underlying distributed agents still requires significant technical expertise compared to SaaS-only tools.

3. Oracle GoldenGate: The Technical Powerhouse

Oracle GoldenGate is one of the most established names in the industry. It is a comprehensive suite designed for mission-critical, high-availability environments. If you are already deeply embedded in the Oracle ecosystem, GoldenGate is often the default choice for real-time data movement.

Key Capabilities

- Multi-Directional Replication: Supports unidirectional, bidirectional, and even peer-to-peer replication, making it a favorite for disaster recovery and active-active database configurations.

- OCI Integration: The platform is increasingly moving toward a fully managed, cloud-native experience through Oracle Cloud Infrastructure (OCI).

- Deep Oracle Optimization: Provides the most robust support for Oracle databases, including support for complex data types and specialized features.

Pros

- Unrivaled Reliability: Known for stability in the most demanding production environments.

- Extensive Flexibility: Can be configured to handle almost any replication topology imaginable.

- Rich Feature Set: Includes advanced tools for data verification and conflict resolution in multi-master setups.

Cons

- Prohibitive Cost: The licensing model is notoriously complex and expensive, often requiring a substantial upfront investment.

- Steep Learning Curve: Maintaining GoldenGate usually requires specialized, certified experts; it is not a “set it and forget it” solution.

- Resource Intensive: The platform can be heavy on system resources, requiring careful performance tuning to avoid impacting source databases.

4. AWS Database Migration Service (DMS)

For organizations already operating within the Amazon ecosystem, AWS DMS is a highly accessible entry point for database replication. While it was originally conceived as a one-time migration tool, it has evolved into a persistent replication service for many cloud-native teams.

Key Capabilities

- Zero Downtime Migration: AWS DMS keeps your source database operational during the migration process, using CDC to replicate ongoing changes until the final cutover.

- Homogeneous and Heterogeneous Support: It works well for migrating like-for-like databases (e.g., MySQL to Aurora) or converting between different engines (e.g., Oracle to PostgreSQL) using the AWS Schema Conversion Tool (SCT).

- Serverless Scaling: The serverless option automatically provisions and scales resources based on demand, which is excellent for handling variable migration workloads.

Pros

- AWS Integration: Deeply integrated with the rest of the AWS console, making it easy for existing AWS users to spin up.

- Cost-Effective for Migration: Pricing is straightforward and generally lower than premium enterprise solutions for one-off projects.

- Managed Service: Reduces the operational overhead of managing your own replication infrastructure.

Cons

- Latency for Persistent Sync: While it handles migrations well, it may struggle with sub-second latency for complex, ongoing replication at enterprise scale.

- Limited Transformation: Transformation capabilities are basic compared to specialized streaming platforms; you often need to perform heavy lifting downstream.

5. Informatica PowerCenter: The Enterprise Veteran

Informatica PowerCenter is a legacy powerhouse in the ETL world. It is a comprehensive platform that focuses on high-volume batch processing and complex data transformations, making it a staple in the data warehouses of Global 2000 companies.

Key Capabilities

- Robust Transformation Engine: PowerCenter is unmatched when it comes to complex, multi-step ETL logic and data cleansing at scale.

- Metadata Management: It features a centralized repository for metadata, providing excellent lineage and governance—critical for highly regulated industries.

- PowerExchange for CDC: Through its PowerExchange modules, Informatica can handle log-based CDC from mainframes and relational databases.

Pros

- Highly Mature: Decades of development have made this one of the most stable and feature-rich ETL solutions available.

- Enterprise Connectivity: There is almost no source or target that Informatica cannot connect to, including deep legacy systems.

- Scalability: Built to handle the massive data volumes of the world’s largest enterprises.

Cons

- Heavyweight Architecture: It often requires significant on-premise infrastructure and specialized consultants to maintain.

- Not Real-Time Native: While it has CDC capabilities, PowerCenter is fundamentally built for batch. Moving toward sub-second streaming often requires a shift to Informatica’s newer cloud-native offerings (IDMC).

- Steep Cost of Ownership: Between licensing, maintenance, and specialized labor, it remains one of the most expensive options on the market.

6. Talend Data Fabric: Unified Data Governance

Talend Data Fabric is a comprehensive platform that combines data integration, quality, and governance into a single environment. Recently acquired by Qlik, Talend offers a more holistic approach to data management that appeals to organizations needing to balance integration with strict compliance.

Key Capabilities

- Unified Trust Score: Automatically scans and profiles datasets to assign a “Trust Score,” helping users understand the quality and reliability of their data at a glance.

- Extensive Connector Library: Offers hundreds of pre-built connectors for cloud platforms, SaaS apps, and legacy databases.

- Self-Service Preparation: Includes tools that empower business users to clean and prepare data without constant engineering support.

Pros

- Strong Governance: Excellent tools for data lineage, metadata management, and compliance (PII identification).

- Flexible Deployment: Supports on-premise, cloud, and hybrid environments with a focus on Apache Spark for high-volume processing.

- User-Friendly for Non-Engineers: No-code options make it more accessible to analysts and business units.

Cons

- Complexity for Simple Tasks: The platform can feel “over-engineered” for teams that only need basic replication.

- Pricing Opacity: Like Qlik, Talend’s pricing is quote-based and can become complex across its various tiers and metrics.

If you’re looking for a wider overview of this specific space, we’ve put together a guide to the top 9 data governance tools for 2025.

7. Hevo Data: No-Code Simplicity for Mid-Market

Hevo Data is a relatively newer entrant that focuses on extreme ease of use. It is a fully managed, no-code platform designed for teams that want to set up data pipelines in minutes rather than weeks.

Key Capabilities

- Automated Schema Mapping: Automatically detects source changes and adapts the target schema in real time, reducing pipeline maintenance.

- Real-Time CDC: Uses log-based capture to provide near real-time synchronization with minimal impact on the source.

- 150+ Pre-built Connectors: Strong focus on popular SaaS applications and cloud data warehouses.

Pros

- Fast Time-to-Value: Extremely simple UI allows for very quick setup without engineering heavy lifting.

- Responsive Support: Highly rated for its customer service and clear documentation.

- Transparent Pricing: Offers a free tier and predictable, volume-based plans for growing teams.

Cons

- Limited for Complex Logic: While it has built-in transformations, it may feel restrictive for advanced engineering teams needing deep, custom SQL logic.

- Mid-Market Focus: While capable, it may lack some of the deep “big iron” connectivity (like specialized mainframe support) required by legacy enterprises.

8. Airbyte: The Open-Source Disruptor

Airbyte is an open-source data integration engine that has rapidly gained popularity for its massive connector library and developer-friendly approach. It offers a unique alternative for organizations that want to avoid vendor lock-in.

Key Capabilities

- 600+ Connectors: The largest connector library in the industry, driven by an active open-source community.

- Connector Development Kit (CDK): Allows technical teams to build and maintain custom connectors using any programming language (Python is a favorite).

- Flexible Deployment: Can be self-hosted for free (Open Source), managed in the cloud (Airbyte Cloud), or deployed as an enterprise-grade solution.

Pros

- Developer Choice: Excellent for teams that prefer configuration-as-code and want full control over their infrastructure.

- Avoids Lock-in: The open-source core ensures you aren’t tied to a single vendor’s proprietary technology.

- Active Community: Rapidly evolving with constant updates and new features being added by contributors.

Cons

- Management Overhead: Self-hosting requires engineering resources for maintenance, monitoring, and scaling.

- Variable Connector Stability: Because many connectors are community-contributed, stability can vary between “certified” and “alpha/beta” connectors.

Choosing the Right Qlik Replicate Alternative

Selecting the right platform depends entirely on your specific architectural needs and where your organization is on its data journey.

- If sub-second latency and real-time AI are your priority: Striim is the clear choice. Its ability to process and enrich data in-flight makes it the most powerful option for modern, event-driven enterprises. For more on this, check out our guide on key considerations for selecting a real-time analytics platform.

- If you need deep Oracle integration and multi-master replication: Oracle GoldenGate remains the technical standard, provided you have the budget and expertise to manage it.

- If you want a balance of enterprise power and ease of use: Fivetran HVR is a strong contender, particularly for high-volume ingestion into cloud warehouses.

- If you are a developer-centric team avoiding vendor lock-in: Airbyte offers the flexibility and community-driven scale you need.

- If you need simple, no-code pipelines for SaaS data: Hevo Data provides the fastest path to value for mid-market teams.

Frequently Asked Questions (FAQs)

1. How long does it take to migrate from Qlik Replicate to an alternative?

Migration timelines depend on the number of pipelines and the complexity of your transformations. A targeted migration of 5-10 sources can often be completed in 2-4 weeks. Large-scale enterprise migrations involving hundreds of pipelines typically take 3-6 months.

2. Can these alternatives handle the same volume as Qlik Replicate?

Yes. Platforms like Striim, Fivetran HVR, and GoldenGate are specifically engineered for mission-critical, high-volume enterprise workloads, often processing millions of events per second with high reliability.

3. Do I need to redo all my configurations manually?

Most platforms do not have a “one-click” import for Qlik configurations. However, many modern alternatives offer configuration-as-code or automated schema mapping, which can make the recreation process much faster than the original manual setup in Qlik’s GUI.

4. Which alternative is best for real-time AI?

Striim is uniquely architected for real-time AI. Unlike tools that only move data, Striim allows you to filter, transform, and enrich data in motion, ensuring your AI models are fed with clean, high-context, sub-second data.

5. Are there free alternatives available?

Airbyte offers a robust open-source version that is free to self-host. Striim also offers a free Developer tier for prototypes and small-scale experimentation, as does Hevo with its basic free plan.

While this native system offers a basic form of CDC, it was not designed for the high-volume, low-latency demands of modern cloud architectures. The SQL Server Agent jobs and the constant writing to change tables introduce performance overhead (added I/O and CPU) that can directly impact your production database, especially at scale.

While this native system offers a basic form of CDC, it was not designed for the high-volume, low-latency demands of modern cloud architectures. The SQL Server Agent jobs and the constant writing to change tables introduce performance overhead (added I/O and CPU) that can directly impact your production database, especially at scale.